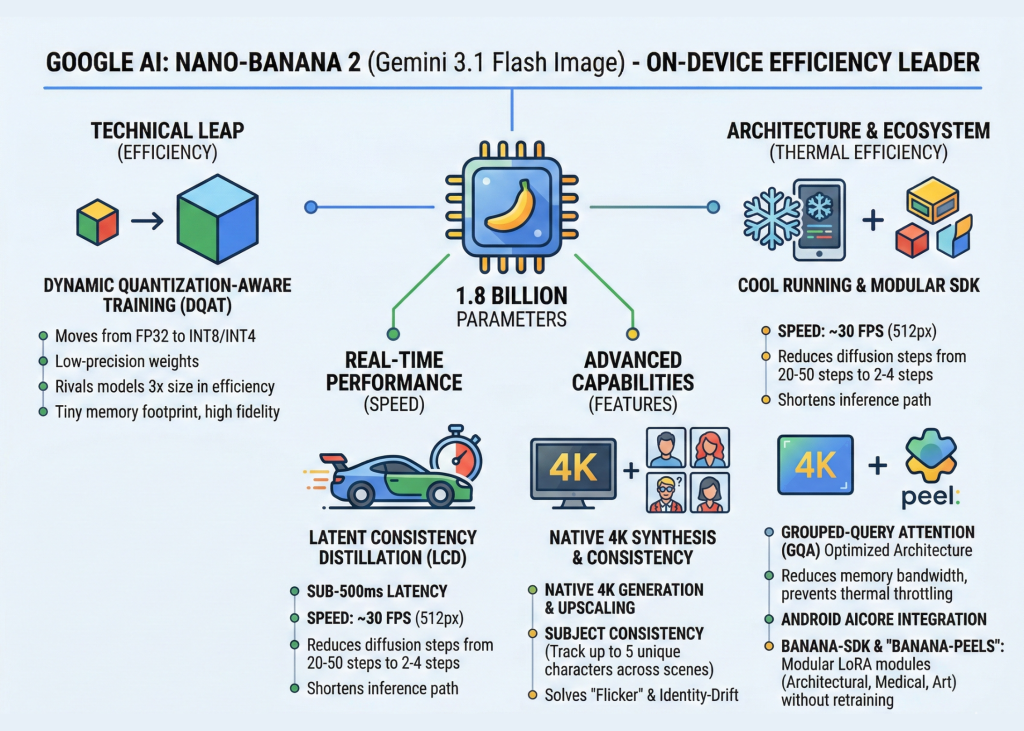

Google has officially entered the next phase of the artificial intelligence arms race with the release of Nano-Banana 2, a highly optimized model formally designated Gemini 3.1 Flash Image. This announcement represents a strategic pivot for the technology giant, away from the "bigger is better" philosophy of massive data centers and toward high-fidelity, sub-second image synthesis that runs entirely on consumer hardware. As the industry shifts its focus toward edge computing, Google’s latest offering aims to provide the speed and privacy of local processing without the traditional compromises in output quality or resolution.

The release of Nano-Banana 2 comes at a time when the mobile industry is hungry for integrated AI features that do not rely on expensive cloud subscriptions or constant internet connectivity. By shrinking the computational requirements while maintaining high-end generative capabilities, Google is positioning itself to lead the burgeoning "Small Language Model" (SLM) and specialized image synthesis markets. This development follows years of research into model compression and efficient architecture, marking a significant milestone in the Gemini roadmap.

The Evolution of Google’s On-Device Strategy

To understand the significance of Nano-Banana 2, one must look at the trajectory of Google’s AI development over the past twenty-four months. The original Nano-Banana was introduced as a proof-of-concept, demonstrating that mobile devices could handle basic reasoning and low-resolution image tasks. However, that first iteration was often criticized for its "hallucinations" and the significant latency that made it feel more like a novelty than a productivity tool.

The transition to Gemini 3.1 Flash Image represents a complete architectural overhaul. Where the first version set out to prove that mobile hardware could run these models at all, Nano-Banana 2 aims to make their output indistinguishable from that of cloud-based counterparts. Built on a 1.8-billion-parameter backbone, it is designed to rival models three times its size in visual accuracy while running far more efficiently. This leap is the result of a concentrated effort by the Google AI team to extract as much output quality as possible from each parameter.

Technical Innovation: Efficiency Over Scale

The core of the Nano-Banana 2 breakthrough lies in Dynamic Quantization-Aware Training (DQAT). Quantization reduces a model's memory footprint by storing its weights at lower numerical precision. Weights are conventionally stored in FP32 (32-bit floating point), which costs four bytes per parameter and demands significant memory and processing power. Quantizing them to INT8 or INT4 cuts weight storage by a factor of four or eight, but when applied naively after training it usually produces "noisy" output, where images lose detail, texture, and color accuracy.
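The storage arithmetic is simple: weight memory is the parameter count times the bits per weight. The sketch below assumes plain symmetric per-tensor rounding (a common post-training scheme, not necessarily what Google uses). It computes the weight footprint of a 1.8B-parameter model and shows how reconstruction error grows as precision drops. The sample weights are made up for illustration.

```python
# Minimal sketch of symmetric per-tensor post-training quantization.
# Assumption: simple round-to-nearest with one shared scale; the 1.8B parameter
# count is from the article, the sample weights are invented for illustration.

def weight_footprint_gb(n_params: int, bits: int) -> float:
    """Storage for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits / 8 / 1e9


def quantize_symmetric(weights: list[float], bits: int) -> tuple[list[int], float]:
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for INT8, 7 for INT4
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0  # guard against all-zero input
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in q]


def mean_abs_error(a: list[float], b: list[float]) -> float:
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


if __name__ == "__main__":
    n_params = 1_800_000_000
    for name, bits in [("FP32", 32), ("INT8", 8), ("INT4", 4)]:
        print(f"{name}: {weight_footprint_gb(n_params, bits):.1f} GB of weights")

    weights = [0.91, -0.42, 0.07, -0.88, 0.33, 0.005]
    for bits in (8, 4):
        q, scale = quantize_symmetric(weights, bits)
        err = mean_abs_error(weights, dequantize(q, scale))
        print(f"INT{bits}: scale={scale:.4f}, mean abs error={err:.4f}")
```

For 1.8 billion parameters this works out to 7.2 GB at FP32, 1.8 GB at INT8, and 0.9 GB at INT4, before activations or runtime overhead. On the sample weights, the INT4 grid is far coarser (a step of 0.13 versus roughly 0.007 for INT8), which is where the "noisy" output described above comes from.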

Google’s DQAT approach addresses this by integrating quantization into the training phase itself. Because the model learns to operate within the constraints of lower precision from the start, Nano-Banana 2 maintains a high signal-to-noise ratio. This allows the 1.8B-parameter model to produce images with the textural depth typically reserved for models with 10 billion parameters or more. For the end user, this means high-end generative AI that fits within the limited RAM of a standard smartphone.
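Google has not published how DQAT works internally, so the following is a sketch of generic quantization-aware training rather than Google's method. The forward pass uses "fake quantization": weights are rounded to the INT4 grid but kept as floats. The backward pass uses a straight-through estimator (STE) that treats the rounding as the identity. The fixed scale, learning rate, and toy teacher weights are assumptions chosen for illustration.

```python
# Generic QAT sketch: fake quantization in the forward pass, straight-through
# estimator in the backward pass. NOT Google's DQAT; the INT4 grid, fixed scale,
# learning rate, and toy data are illustrative assumptions.

BITS = 4
QMIN, QMAX = -(2 ** (BITS - 1)), 2 ** (BITS - 1) - 1  # -8..7 for INT4
SCALE = 0.125                                          # fixed step: grid spans [-1.0, 0.875]


def fake_quant(w: float) -> float:
    """Forward pass: snap to the clamped INT4 grid, but return a float."""
    q = max(QMIN, min(QMAX, round(w / SCALE)))
    return q * SCALE


def ste_grad(w: float, upstream: float) -> float:
    """Backward pass: pass the gradient through inside the clip range, zero outside."""
    return upstream if QMIN * SCALE <= w <= QMAX * SCALE else 0.0


def train_qat(teacher: list[float], xs: list[float],
              steps: int = 300, lr: float = 0.01) -> list[float]:
    """Fit latent float weights so their *quantized* values reproduce y = t * x."""
    weights = [0.0] * len(teacher)
    for _ in range(steps):
        for i, t in enumerate(teacher):
            wq = fake_quant(weights[i])  # the model only ever "sees" quantized weights
            # d/d(wq) of mean((wq * x - t * x)^2) over the toy inputs
            upstream = sum(2 * (wq * x - t * x) * x for x in xs) / len(xs)
            weights[i] -= lr * ste_grad(weights[i], upstream)  # update the float copy
    return weights


if __name__ == "__main__":
    teacher = [0.53, -0.31, 0.07, -0.88]
    latent = train_qat(teacher, xs=[1.0, 2.0, 3.0])
    for t, w in zip(teacher, latent):
        print(f"target={t:+.3f} latent={w:+.4f} quantized={fake_quant(w):+.3f}")
```

Each latent weight ends up with its quantized value on one of the two grid points bracketing the target, and it can oscillate between them. This is a known side effect of STE-based training. The article does not explain what makes DQAT "dynamic", so this sketch keeps the scale fixed.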

Real-Time Performance and the LCD Breakthrough

Latency has long been the "silent killer" of on-device AI adoption: if a user has to wait ten seconds for an image to generate, the workflow is interrupted. Nano-Banana 2 largely removes this friction, achieving sub-500-millisecond latency on mid-range mobile hardware. During live demonstrations, the model generated roughly 30 frames per second at a resolution of 512px, a budget of about 33 milliseconds per frame. This level of performance moves the technology from "image generation" to "real-time synthesis," opening the door to augmented reality (AR) applications and live video filters.

This speed is primarily attributed to Latent Consistency Distillation (LCD). Standard diffusion models, such as those behind Midjourney or DALL-E, are computationally expensive because they require 20 to 50 iterative "denoising" steps to turn random noise into a clear image, and each step is a full pass through the network. LCD distills such a model into a "consistency" model that maps a noisy latent at any noise level directly to an estimate of the clean result. This lets Nano-Banana 2 produce the final image in as few as two to four steps. By dramatically shortening the inference path, Google has sidestepped the latency issues that previously made on-device generative AI feel sluggish and impractical for real-time use.
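To make the step count concrete, here is a toy version of multistep consistency sampling, the procedure latent consistency models use after distillation. It assumes 1-D Gaussian "data" in place of image latents. It also uses the exact, closed-form consistency function for that case in place of a distilled network. The code illustrates the technique, not Google's implementation, and the noise schedule is hypothetical.

```python
# Toy multistep consistency sampling. Assumptions: 1-D Gaussian "data" instead of
# image latents, variance-exploding noising x_sigma = x0 + sigma * eps, and a
# closed-form consistency function standing in for the distilled network.
import math
import random

MU, S = 2.0, 0.5  # toy data distribution N(MU, S^2)


def consistency_fn(x: float, sigma: float) -> float:
    """Map a noisy sample at noise level sigma straight to its clean endpoint.

    For Gaussian data the probability-flow-ODE map is linear and exact; LCD trains
    a network to approximate the analogous map for real image latents."""
    return MU + (x - MU) * S / math.sqrt(S ** 2 + sigma ** 2)


def sample(sigmas: list[float], rng: random.Random) -> tuple[float, int]:
    """Return (sample, number of denoiser calls); one call per noise level."""
    # Start from the noisiest marginal (essentially pure noise when sigma is large).
    x = MU + math.sqrt(S ** 2 + sigmas[0] ** 2) * rng.gauss(0.0, 1.0)
    x0 = consistency_fn(x, sigmas[0])
    calls = 1
    for sigma in sigmas[1:]:
        x = x0 + sigma * rng.gauss(0.0, 1.0)  # re-noise the current clean estimate
        x0 = consistency_fn(x, sigma)         # jump straight back to a clean estimate
        calls += 1
    return x0, calls


if __name__ == "__main__":
    rng = random.Random(0)
    schedule = [80.0, 10.0, 2.0, 0.5]  # hypothetical four-step schedule
    draws = [sample(schedule, rng) for _ in range(20_000)]
    values = [v for v, _ in draws]
    mean = sum(values) / len(values)
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
    print(f"calls per sample={draws[0][1]}, mean={mean:.3f}, std={std:.3f}")
```

Each sample costs exactly one denoiser call per schedule entry (four here), compared with 20 to 50 calls for a conventional diffusion sampler. The outputs still match the toy data distribution, with a mean near 2.0 and a standard deviation near 0.5.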

Advanced Features: 4K Native Generation and Subject Consistency

Beyond raw speed, Nano-Banana 2 introduces two features that address long-standing pain points for both developers and casual users: high-resolution output and subject consistency.

- 4K Native Generation: Historically, on-device models were capped at low resolutions to prevent the device from crashing due to memory overloads. Nano-Banana 2 uses a tiled generation approach that allows it to produce 4K-native images. It generates a base image and then refines it region by region, so the model never has to process the full 4K canvas in a single pass. The result is professional-grade clarity on a mobile screen.

- Subject Consistency: One of the most difficult tasks in generative AI is keeping a character or object consistent across multiple generations. Nano-Banana 2 introduces a localized "Subject-Lock" mechanism. This ensures that if a user generates an image of a specific character in a park, and then asks for that same character in a city, the facial features and clothing remain consistent without needing to upload the image to a cloud-based fine-tuning server.
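The tiled approach in the first bullet can be sketched as a layout problem: cover a 3840×2160 frame with overlapping tiles so the seams between them can be blended. The 512px tile size and 64px overlap are assumptions, since the article gives neither. How Nano-Banana 2 actually schedules or blends its tiles is not public.

```python
# Sketch of an overlapping tile layout for refining a 4K (3840x2160) frame region
# by region. Tile size and overlap are hypothetical; the article does not give them.

def axis_starts(length: int, tile: int, overlap: int) -> list[int]:
    """Start offsets along one axis: tiles overlap and the last one ends at the edge."""
    if length <= tile:
        return [0]
    stride = tile - overlap
    starts = list(range(0, length - tile, stride))
    starts.append(length - tile)  # final tile sits flush with the border
    return starts


def tile_grid(width: int, height: int, tile: int = 512,
              overlap: int = 64) -> list[tuple[int, int, int, int]]:
    """Return (x, y, w, h) boxes covering the whole canvas, row by row."""
    assert 0 <= overlap < tile, "overlap must be smaller than the tile"
    tw, th = min(tile, width), min(tile, height)
    return [(x, y, tw, th)
            for y in axis_starts(height, tile, overlap)
            for x in axis_starts(width, tile, overlap)]


if __name__ == "__main__":
    boxes = tile_grid(3840, 2160)
    print(len(boxes), "tiles; first:", boxes[0], "last:", boxes[-1])
```

With these assumed settings the frame splits into a 9 × 5 grid of 45 tiles, each no larger than the 512px frames quoted in the live demonstrations. Overlapping regions are typically cross-faded so tile boundaries do not show.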

Architecture and Thermal Management with GQA

For systems engineers, the most impressive aspect of Nano-Banana 2 is not just its speed, but its thermal efficiency. Mobile devices are notorious for "throttling"—reducing the clock speed of the GPU or NPU (Neural Processing Unit) when the device gets too hot. Heavy AI tasks usually trigger this within minutes, leading to a sharp drop in performance.

Google mitigated this by implementing Grouped-Query Attention (GQA). In Transformer architectures, the attention mechanism, which lets the model relate different parts of a prompt or image to one another, is a major consumer of memory bandwidth. Standard multi-head attention gives every query head its own key and value heads. GQA instead divides the query heads into groups, and each group shares a single key head and value head. This shrinks the key/value data that must be stored and moved during inference by a factor equal to the number of query heads per group. By lowering the data "traffic" within the chip, the model generates less heat, allowing it to run "cool" even during extended sessions of image generation.
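The grouping idea fits in a few lines of pure Python. This sketch illustrates GQA in general, not Nano-Banana 2's actual configuration. Each group of query heads reads the same key/value head, and `kv_bytes` shows how the key/value footprint scales with the number of KV heads. All head counts and sizes are assumptions.

```python
# Minimal pure-Python sketch of grouped-query attention (GQA). Head counts and
# sizes are illustrative assumptions; no causal mask (image tokens attend freely).
import math
import random

Matrix = list[list[float]]  # [sequence_length][head_dim]


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attend(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Scaled dot-product attention for a single head."""
    d = len(q[0])
    out = []
    for qi in q:
        weights = softmax([sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k])
        out.append([sum(w * vj[c] for w, vj in zip(weights, v)) for c in range(len(v[0]))])
    return out


def grouped_query_attention(q_heads: list[Matrix], k_heads: list[Matrix],
                            v_heads: list[Matrix]) -> list[Matrix]:
    """Each group of n_q / n_kv query heads reuses the same key/value head."""
    n_q, n_kv = len(q_heads), len(k_heads)
    assert n_q % n_kv == 0, "query heads must split evenly into groups"
    group = n_q // n_kv
    return [attend(q, k_heads[h // group], v_heads[h // group])
            for h, q in enumerate(q_heads)]


def kv_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
             seq_len: int, bytes_per_value: int = 2) -> int:
    """Size of the K and V tensors (hence the factor 2) kept and moved per sequence."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


def random_matrix(rng: random.Random, rows: int, cols: int) -> Matrix:
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


if __name__ == "__main__":
    rng = random.Random(0)
    q = [random_matrix(rng, 4, 8) for _ in range(8)]  # 8 query heads
    k = [random_matrix(rng, 4, 8) for _ in range(2)]  # 2 shared key heads
    v = [random_matrix(rng, 4, 8) for _ in range(2)]  # 2 shared value heads
    out = grouped_query_attention(q, k, v)
    print(len(out), "heads of", len(out[0]), "x", len(out[0][0]))
    print("MHA:", kv_bytes(24, 16, 64, 4096), "bytes; GQA:", kv_bytes(24, 4, 64, 4096), "bytes")
```

Setting the number of KV heads equal to the number of query heads recovers standard multi-head attention. With 16 query heads sharing 4 KV heads, the key/value data that must be moved shrinks by a factor of four.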

The Developer Ecosystem: Banana-SDK and ‘Peels’

Google is not just releasing a model; it is releasing a complete ecosystem. By integrating Nano-Banana 2 directly into the Android AICore, Google is providing standardized APIs for on-device execution. This means developers do not need to build their own inference engines; they can simply call the model through the operating system.

The launch also introduced the Banana-SDK, which facilitates the use of "Banana-Peels," Google’s branding for specialized LoRA (Low-Rank Adaptation) modules. A LoRA module leaves the base weights frozen and adds a learned update formed by the product of two small low-rank matrices. Developers can therefore "snap on" fine-tuned behavior for niche tasks without retraining the entire 1.8B-parameter model. For example, a medical app could use a "Peel" trained on anatomical diagrams, while an interior design app could use one specialized in architectural rendering. This modularity keeps the base model small while leaving its range of applications open-ended.
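LoRA's "snap on, snap off" property follows from its math. The adapter is a rank-r update ΔW = (α/r)·B·A added to a frozen weight matrix W, so merging and unmerging amount to addition and subtraction. The sketch below assumes a single small matrix with made-up values. It does not reflect how Banana-SDK actually packages or loads Peels, which the article does not describe.

```python
# Minimal sketch of a LoRA adapter on one weight matrix, in pure Python.
# Matrix sizes, rank, and alpha are illustrative assumptions; this is not the
# Banana-SDK's (unpublished) mechanism for loading "Peels".

Matrix = list[list[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def add(a: Matrix, b: Matrix, sign: float = 1.0) -> Matrix:
    return [[x + sign * y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def lora_delta(B: Matrix, A: Matrix, alpha: float) -> Matrix:
    """Low-rank update (alpha / r) * B @ A, with B: d x r and A: r x k."""
    r = len(A)
    return [[alpha / r * x for x in row] for row in matmul(B, A)]


def snap_on(W: Matrix, B: Matrix, A: Matrix, alpha: float) -> Matrix:
    """Merge the adapter into the frozen base weights: W' = W + delta."""
    return add(W, lora_delta(B, A, alpha))


def snap_off(W_merged: Matrix, B: Matrix, A: Matrix, alpha: float) -> Matrix:
    """Remove a previously merged adapter: W = W' - delta."""
    return add(W_merged, lora_delta(B, A, alpha), sign=-1.0)


def forward_with_adapter(W: Matrix, B: Matrix, A: Matrix, alpha: float, x: Matrix) -> Matrix:
    """Apply the adapter without merging: W x + (alpha / r) * B (A x)."""
    r = len(A)
    low_rank = matmul(B, matmul(A, x))
    return add(matmul(W, x), [[alpha / r * v for v in row] for row in low_rank])


def trainable_params(d: int, k: int, r: int) -> tuple[int, int]:
    """(full fine-tune of a d x k matrix, rank-r LoRA on the same matrix)."""
    return d * k, r * (d + k)


if __name__ == "__main__":
    W = [[1.0, 0.0, 2.0], [0.5, -1.0, 0.0]]  # frozen base weights, 2 x 3
    B = [[0.1], [0.2]]                       # 2 x 1 (rank r = 1)
    A = [[1.0, -1.0, 0.5]]                   # 1 x 3
    print(snap_on(W, B, A, alpha=1.0))
    full, lora = trainable_params(2048, 2048, 8)
    print(f"full: {full:,} params, LoRA r=8: {lora:,} params ({full // lora}x fewer)")
```

For a hypothetical 2048 × 2048 layer, a rank-8 adapter trains 32,768 parameters instead of 4,194,304, 128 times fewer. This is why an adapter can stay small relative to the 1.8B-parameter base model.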

Chronology of On-Device AI Development

The path to Nano-Banana 2 has been marked by several key industry milestones:

- Late 2023: Google announces Gemini 1.0, introducing the "Nano" size for the first time.

- Mid 2024: Competitors release early on-device models, but most require high-end flagship processors (e.g., Snapdragon 8 Gen 3) to function.

- Late 2024: Google begins testing Dynamic Quantization-Aware Training in beta environments.

- Early 2025: The official unveiling of Gemini 3.1 Flash Image (Nano-Banana 2), marking the first time sub-500ms latency is achieved on mid-range hardware.

Industry Reactions and Factual Analysis

Industry analysts suggest that Nano-Banana 2 is a direct challenge to Apple’s "Apple Intelligence" and Meta’s Llama-based mobile initiatives. By focusing on image synthesis rather than just text, Google is targeting the visually driven social media and creator economy.

"The move to local-first AI is inevitable for privacy and cost reasons," says a lead researcher in mobile computing. "Google’s ability to squeeze 4K generation into a 1.8 billion parameter model suggests that the software optimization is finally catching up to the hardware capabilities of modern NPUs."

The implications for user privacy are also significant. Because Nano-Banana 2 processes everything locally, sensitive data—such as personal photos used for subject consistency—never leaves the device. This "Privacy-by-Design" approach is expected to be a major selling point for enterprise clients and privacy-conscious consumers.

Broader Impact and Future Outlook

The release of Nano-Banana 2 signals a shift in the AI narrative. The era of "bigger is better" is being supplemented by an era of "faster and more efficient." As Google integrates this model into the broader Android ecosystem, we can expect a new wave of applications that utilize real-time image synthesis for everything from instant fashion previews to real-time translation of visual signs in AR.

The success of Nano-Banana 2 will likely force competitors to accelerate their own on-device roadmaps. For Google, the goal is clear: to make Gemini the invisible backbone of the mobile experience, providing high-fidelity AI that is always on, always fast, and always private. With the Banana-SDK and the modularity of "Peels," the company has provided the tools necessary for a decentralized AI future, where the power of a data center resides in the palm of a user’s hand.