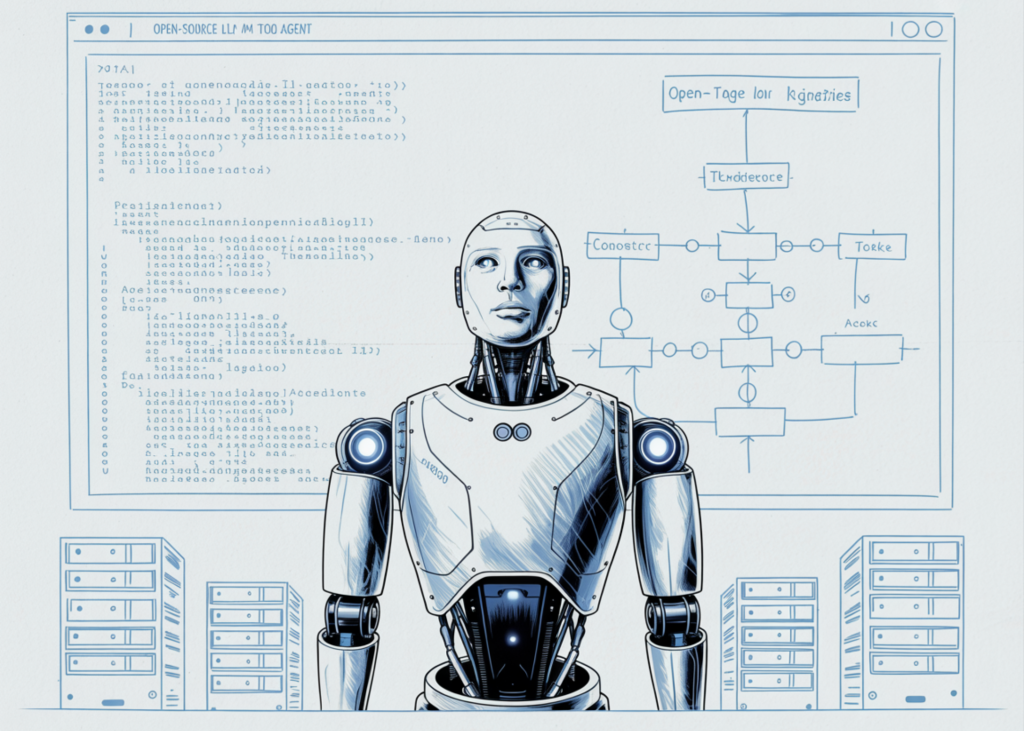

In a significant advancement for modular artificial intelligence architecture, researchers and developers have demonstrated a robust framework for creating hierarchical agent systems using the Qwen2.5-1.5B-Instruct model. This development represents a pivotal shift from monolithic, single-prompt interactions toward "agentic" workflows, where specialized AI components collaborate to solve multi-faceted problems. By decomposing complex goals into actionable sub-tasks, this hierarchical approach addresses one of the primary limitations of current large language models (LLMs): the tendency to lose coherence or hallucinate when faced with long-horizon tasks. The system utilizes a three-tier architecture comprising a Planner Agent, an Executor Agent, and an Aggregator Agent, each operating with distinct system prompts and functional responsibilities. This modularity not only enhances the accuracy of the final output but also allows for the integration of external tools, such as dynamic Python execution, providing the AI with the ability to perform precise calculations and data processing that exceed the inherent capabilities of standard linguistic reasoning.

The Evolution of Agentic Workflows in Artificial Intelligence

The transition toward hierarchical planning is a response to the growing demand for autonomous systems that can function with minimal human intervention. Early iterations of AI agents often relied on simple "Chain of Thought" (CoT) prompting, where a model was encouraged to "think step-by-step." While effective for basic logic, these systems frequently struggled with "cascading errors," where a mistake in an early reasoning step would propagate through the entire response. Hierarchical systems mitigate this risk by introducing structural checkpoints. In the recently detailed implementation, the Planner Agent acts as the "architect," establishing a roadmap before any execution begins. This separation of concerns—planning versus doing—mirrors human organizational structures and provides a scalable template for more advanced applications in logistics, software development, and data analysis.

Technical Foundation and Environment Configuration

The implementation of this hierarchical system is built upon a stack of high-performance open-source libraries, ensuring that the system is both accessible and efficient. The primary engine is the Qwen2.5-1.5B-Instruct model, a lightweight yet powerful model developed by Alibaba Cloud. Despite its relatively small parameter count, the model is specifically tuned for instruction following, making it an ideal candidate for agentic roles. To facilitate deployment on consumer-grade hardware or cloud-based environments like Google Colab, the system utilizes 4-bit quantization via the bitsandbytes library. This optimization reduces the memory footprint significantly: the weights alone occupy roughly 3 GB in 16-bit precision, whereas the quantized model can operate effectively on as little as 1.5 GB to 2 GB of VRAM.
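The memory savings can be checked with back-of-the-envelope arithmetic. The sketch below counts the weights only and uses the nominal 1.5 billion parameter figure from the model name; quantization metadata, activations, the KV cache, and the CUDA context all add overhead, which is why the practical footprint is higher than the raw 4-bit number.

```python
def weight_memory_gb(n_params: float, bits_per_param: float) -> float:
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


N_PARAMS = 1.5e9  # nominal parameter count taken from the model name (assumption)

fp16_gb = weight_memory_gb(N_PARAMS, 16)  # 16-bit weights
int4_gb = weight_memory_gb(N_PARAMS, 4)   # 4-bit quantized weights

print(f"16-bit weights: ~{fp16_gb:.2f} GB")
print(f"4-bit weights:  ~{int4_gb:.2f} GB")
```

Under these assumptions the weights shrink by a factor of four, and the remaining budget in the 1.5 GB to 2 GB range is taken up by runtime overhead.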

The environment setup involves the installation of transformers and accelerate, which manage the distribution of model weights across available compute units. The choice of the 1.5B parameter model is strategic; while larger models like the 72B variants offer deeper reasoning, the latency involved in multi-agent communication can become a bottleneck. By using a smaller, faster model, the hierarchical system can iterate through planning and execution cycles in near real-time, which is essential for interactive applications.
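A minimal loading sketch is shown here for orientation. It assumes the transformers, accelerate, and bitsandbytes packages are installed and a CUDA GPU is available. The specific quantization settings (NF4, double quantization, float16 compute) and the helper names `load_quantized_model` and `chat` are illustrative assumptions, not details confirmed by the original implementation.

```python
MODEL_ID = "Qwen/Qwen2.5-1.5B-Instruct"  # Hugging Face Hub identifier


def load_quantized_model(model_id: str = MODEL_ID):
    """Load the tokenizer and a 4-bit quantized model (needs a CUDA GPU)."""
    # Imported lazily so this module can be imported without the GPU stack.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

    quant_config = BitsAndBytesConfig(
        load_in_4bit=True,
        bnb_4bit_quant_type="nf4",             # assumption: NF4 quantization
        bnb_4bit_compute_dtype=torch.float16,  # assumption: float16 compute
        bnb_4bit_use_double_quant=True,        # assumption: double quantization
    )
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        quantization_config=quant_config,
        device_map="auto",  # accelerate decides where the weights are placed
    )
    return tokenizer, model


def chat(tokenizer, model, system_prompt: str, user_prompt: str,
         max_new_tokens: int = 512) -> str:
    """Run one system+user exchange and return only the newly generated text."""
    messages = [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]
    inputs = tokenizer.apply_chat_template(
        messages,
        add_generation_prompt=True,
        tokenize=True,
        return_dict=True,
        return_tensors="pt",
    ).to(model.device)
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens, do_sample=False)
    prompt_len = inputs["input_ids"].shape[-1]
    return tokenizer.decode(output_ids[0][prompt_len:], skip_special_tokens=True)
```

Because every agent is just a different system prompt passed to the same `chat` helper, a single loaded model can serve all three roles.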

The Three-Tier Architecture: Roles and Responsibilities

The core of the system is defined by the specialized roles assigned to each agent. This division of labor is enforced through "System Prompts," which act as the constitutional framework for each agent’s behavior.

The Planner Agent: The Strategic Architect

The Planner Agent is responsible for receiving the high-level user request and breaking it down into a structured sequence of three to eight steps. It is instructed to output its plan strictly in JSON format, ensuring that the subsequent agents can parse the instructions programmatically. The planner must decide which tool is appropriate for each step: a standard LLM reasoning call or a Python execution block. This decision-making process is critical; for instance, if a task involves calculating the compound interest of a logistics fleet’s fuel costs, the planner will designate a "python" tool to ensure mathematical precision.
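The plan format can be illustrated with a small validation sketch. The JSON schema here (a top-level `steps` list whose items carry `instruction` and `tool` fields) and the tool names `llm` and `python` are assumptions made for the example; the article specifies only that the plan is JSON, has three to eight steps, and selects a tool per step.

```python
import json
from dataclasses import dataclass

ALLOWED_TOOLS = {"llm", "python"}  # hypothetical tool names


@dataclass
class PlanStep:
    id: int
    instruction: str
    tool: str


def parse_plan(raw_json: str) -> list[PlanStep]:
    """Parse the planner's JSON and enforce the 3-to-8 step and tool rules."""
    steps = json.loads(raw_json)["steps"]
    if not 3 <= len(steps) <= 8:
        raise ValueError(f"expected 3 to 8 steps, got {len(steps)}")
    parsed = []
    for index, step in enumerate(steps, start=1):
        tool = step.get("tool", "llm")  # default to plain reasoning
        if tool not in ALLOWED_TOOLS:
            raise ValueError(f"step {index}: unknown tool {tool!r}")
        parsed.append(PlanStep(id=index, instruction=str(step["instruction"]), tool=tool))
    return parsed


EXAMPLE_PLAN = """
{"steps": [
  {"instruction": "List the fleet's monthly fuel costs", "tool": "llm"},
  {"instruction": "Compute the compounded yearly total", "tool": "python"},
  {"instruction": "Summarize the cost drivers", "tool": "llm"}
]}
"""

plan = parse_plan(EXAMPLE_PLAN)
for step in plan:
    print(step.id, step.tool, step.instruction)
```

Rejecting a malformed plan at this point keeps a planning error from surfacing several steps later in the Executor.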

The Executor Agent: The Functional Engine

Once the plan is established, the Executor Agent takes over. Unlike the Planner, which looks at the "big picture," the Executor focuses on one task at a time. It receives the specific instruction for a step along with the "context" of previous results. This context-awareness is vital for maintaining continuity. If the step requires Python code, the Executor generates the script, which is then passed to a secure execution environment. The results—whether they are console outputs, errors, or data tables—are captured and fed back into the system.
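The capture of console output and errors might look like the sketch below. The function name `run_python` and the result dictionary layout are hypothetical. Note also that a bare `exec` is not a sandbox: it isolates variable names, not the process, so a genuinely secure environment would need a subprocess, container, or similar boundary.

```python
import contextlib
import io
import traceback


def run_python(code: str) -> dict:
    """Run generated code in a fresh namespace and capture stdout or the error.

    This only separates variables from the caller; it is NOT a security boundary.
    """
    buffer = io.StringIO()
    namespace: dict = {}  # fresh globals for every step
    try:
        with contextlib.redirect_stdout(buffer):
            exec(code, namespace)
        return {"ok": True, "stdout": buffer.getvalue(), "error": ""}
    except Exception:
        # Keep whatever was printed before the failure, plus the traceback.
        return {"ok": False, "stdout": buffer.getvalue(), "error": traceback.format_exc()}


good = run_python("print(2 + 3)")
bad = run_python("print('before'); 1 / 0")
print(good)
print(bad["ok"], bad["stdout"].strip())
```

Returning errors as data rather than raising them lets the orchestrator pass a failed step's traceback forward as context instead of aborting the whole run.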

The Aggregator Agent: The Quality Controller

The final component is the Aggregator Agent. Its role is to synthesize the disparate outputs from the various execution steps into a polished, coherent final response. It acts as an editor, ensuring that the final answer directly addresses the user’s original query while removing any technical "noise" generated during the intermediate steps. This stage is where the raw data from the Executor is transformed into actionable insights or professional-grade reports.
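As a rough illustration, the Aggregator's input can be assembled as an ordinary chat message list. The system prompt wording and the function name `build_aggregator_messages` are invented for this sketch and are not taken from the original implementation.

```python
AGGREGATOR_SYSTEM_PROMPT = (  # hypothetical wording
    "You are the Aggregator. Combine the step results into one clear answer "
    "to the user's original task. Omit intermediate logs and raw tracebacks."
)


def build_aggregator_messages(task: str, plan: list[dict], results: list[str]) -> list[dict]:
    """Pair each planned step with its result and wrap them in chat messages."""
    lines = [f"Original task: {task}", "", "Plan and results:"]
    for step, result in zip(plan, results):
        lines.append(f"{step['id']}. {step['instruction']}")
        lines.append(f"   Result: {result.strip()}")
    return [
        {"role": "system", "content": AGGREGATOR_SYSTEM_PROMPT},
        {"role": "user", "content": "\n".join(lines)},
    ]


messages = build_aggregator_messages(
    task="Estimate next year's fuel budget",
    plan=[{"id": 1, "instruction": "Compute the yearly total"}],
    results=["12500.0\n"],
)
print(messages[1]["content"])
```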

Robust Data Handling and JSON Extraction

A recurring challenge in multi-agent systems is the "brittleness" of LLM outputs. Models occasionally include conversational filler or formatting errors that can break automated pipelines. To counter this, the hierarchical planner implementation includes a sophisticated JSON extraction mechanism. This utility uses regular expressions to find fenced code blocks but also incorporates a recursive character-stacking algorithm to identify and extract valid JSON objects from within larger strings. This level of robustness ensures that even if the model deviates slightly from its instructions, the system can recover the necessary data to continue the workflow. This "fail-soft" design is a hallmark of production-ready AI engineering, prioritizing system uptime and reliability over rigid adherence to prompt constraints.
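One way to build such an extractor is sketched below. It is an approximation of the described idea, not the original code: fenced blocks are tried first via a regular expression, and an iterative, string-aware brace-depth scan (used here in place of the recursive variant mentioned above) then looks for balanced `{...}` spans. The names `extract_json` and `_balanced_objects` are hypothetical.

```python
import json
import re

FENCE = "`" * 3  # built at runtime so this example nests cleanly in a fenced block
FENCE_RE = re.compile(FENCE + r"(?:json)?\s*(.*?)" + FENCE, re.DOTALL | re.IGNORECASE)


def _balanced_objects(text: str):
    """Yield each substring running from a top-level '{' to its matching '}'."""
    start, depth = 0, 0
    in_string = escaped = False
    for i, ch in enumerate(text):
        if in_string:
            if escaped:
                escaped = False
            elif ch == "\\":
                escaped = True
            elif ch == '"':
                in_string = False
            continue
        if ch == '"' and depth > 0:
            in_string = True  # braces inside JSON strings must not count
        elif ch == "{":
            if depth == 0:
                start = i
            depth += 1
        elif ch == "}" and depth > 0:
            depth -= 1
            if depth == 0:
                yield text[start:i + 1]


def extract_json(text: str):
    """Return the first parseable JSON object in a model reply, or None."""
    candidates = [m.group(1) for m in FENCE_RE.finditer(text)]
    candidates.append(text)  # fall back to scanning the whole reply
    for candidate in candidates:
        for chunk in _balanced_objects(candidate):
            try:
                return json.loads(chunk)
            except json.JSONDecodeError:
                continue
    return None


reply = "Sure! Here is the plan:\n" + FENCE + 'json\n{"steps": [{"tool": "python"}]}\n' + FENCE
print(extract_json(reply))
print(extract_json('Noise first {"a": {"b": "}"}} and noise after'))
```

Returning `None` instead of raising lets the caller decide whether to retry the model call or fall back to a default plan.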

Chronology of Execution and Workflow Management

The operational flow of the hierarchical agent follows a strict chronological sequence to maintain logical integrity:

- Task Initialization: The user provides a complex prompt (e.g., "Design a logistics coordination system").

- Strategic Planning: The Planner Agent generates a multi-step JSON plan, identifying tools and expected outputs.

- Iterative Execution: The system loops through each step in the plan. For each step, the Executor Agent is invoked. If the tool is "python," the system runs the code in a controlled environment and captures the stdout.

- Context Accumulation: As each step completes, its result is appended to a "StepResult" list, which serves as the short-term memory for the system.

- Final Synthesis: The Aggregator Agent receives the original task, the initial plan, and the full history of execution results to produce the final answer.

This structured timeline ensures that the AI does not attempt to "guess" the final answer before it has completed the necessary foundational steps.
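The sequence above can be sketched as a short orchestration loop. The three agents are passed in as plain callables and replaced by stubs here so the control flow can run without a model; the `StepResult` fields, the dictionary-based step format, and the function names are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class StepResult:
    step_id: int
    instruction: str
    output: str


def run_pipeline(task: str, planner: Callable, executor: Callable,
                 aggregator: Callable) -> tuple[str, list[StepResult]]:
    """Plan once, execute each step with accumulated context, then aggregate."""
    plan = planner(task)
    results: list[StepResult] = []  # short-term memory for the run
    for step in plan:
        # Each step sees the outputs of all earlier steps.
        context = "\n".join(f"[{r.step_id}] {r.output}" for r in results)
        output = executor(step, context)
        results.append(StepResult(step["id"], step["instruction"], output))
    return aggregator(task, plan, results), results


# Stub agents standing in for model calls (hypothetical behavior).
def stub_planner(task):
    return [{"id": i, "instruction": f"part {i} of {task}", "tool": "llm"} for i in (1, 2, 3)]


def stub_executor(step, context):
    seen = len(context.splitlines())
    return f"done {step['instruction']} (saw {seen} prior results)"


def stub_aggregator(task, plan, results):
    return f"{task}: " + "; ".join(r.output for r in results)


answer, history = run_pipeline("demo task", stub_planner, stub_executor, stub_aggregator)
print(answer)
```

Swapping the stubs for functions that call the model leaves `run_pipeline` unchanged, which is the practical benefit of keeping orchestration separate from the agents.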

Supporting Data: Efficiency and Performance Metrics

The use of Qwen2.5-1.5B in this configuration provides several quantifiable advantages. In instruction-following benchmarks, the Qwen2.5 series has shown competitive performance against models twice its size. With 4-bit quantization, the system achieves a throughput of approximately 40-60 tokens per second on a standard NVIDIA T4 GPU. In a hierarchical agent, where a single user query might trigger 5-10 internal model calls, this speed is the difference between a response time of 15 seconds and one of 2 minutes. Furthermore, the modular nature of the code allows for "plug-and-play" model swapping: a user who needs stronger reasoning can replace the 1.5B model with a 7B or 14B model without rewriting the underlying orchestration logic.
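The relationship between throughput and end-to-end latency is simple to estimate. The sketch below assumes 8 internal calls of 100 generated tokens each and a slower comparison system at 8 tokens per second; these are illustrative values, not measurements, and the estimate ignores prompt processing time.

```python
def pipeline_latency_s(num_calls: int, tokens_per_call: int, tokens_per_second: float) -> float:
    """Estimate total generation time, ignoring prompt processing and overhead."""
    return num_calls * tokens_per_call / tokens_per_second


# Illustrative assumptions: 8 model calls, 100 generated tokens per call.
fast = pipeline_latency_s(num_calls=8, tokens_per_call=100, tokens_per_second=50)
slow = pipeline_latency_s(num_calls=8, tokens_per_call=100, tokens_per_second=8)

print(f"at 50 tok/s: {fast:.0f} s")
print(f"at  8 tok/s: {slow:.0f} s")
```

Because the calls run sequentially, latency scales linearly with the number of steps in the plan, so per-call speed matters more in an agent pipeline than in a single-prompt exchange.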

Industry Implications and Future Outlook

The release of this hierarchical planner framework has significant implications for the broader AI industry. It demonstrates that sophisticated, autonomous behavior is no longer the exclusive domain of massive, closed-source models like GPT-4 or Claude 3.5 Sonnet. By leveraging open-source models and intelligent architecture, developers can build private, locally hosted agents that handle sensitive data without the need for external API calls.

Industry analysts suggest that the next phase of AI development will center on "Compound AI Systems," where the emphasis shifts from improving the base model to improving the system of agents around it. This hierarchical approach aligns with that trend, providing a blueprint for systems that are more reliable, easier to debug, and more capable of handling specialized tools. As models continue to shrink in size while growing in capability, the deployment of such hierarchical agents on "edge" devices, such as laptops or local servers, is expected to become standard practice in enterprise automation.

Conclusion

The implementation of a hierarchical multi-agent system using open-source LLMs marks a milestone in the democratization of advanced AI. By utilizing a structured Planner-Executor-Aggregator framework, the system overcomes the traditional limitations of linear reasoning. The integration of Python execution and robust JSON parsing creates a versatile tool capable of handling both creative and technical tasks. As the AI community continues to refine these modular architectures, the gap between experimental prototypes and reliable, autonomous digital assistants continues to narrow, paving the way for a new era of decentralized, intelligent automation.