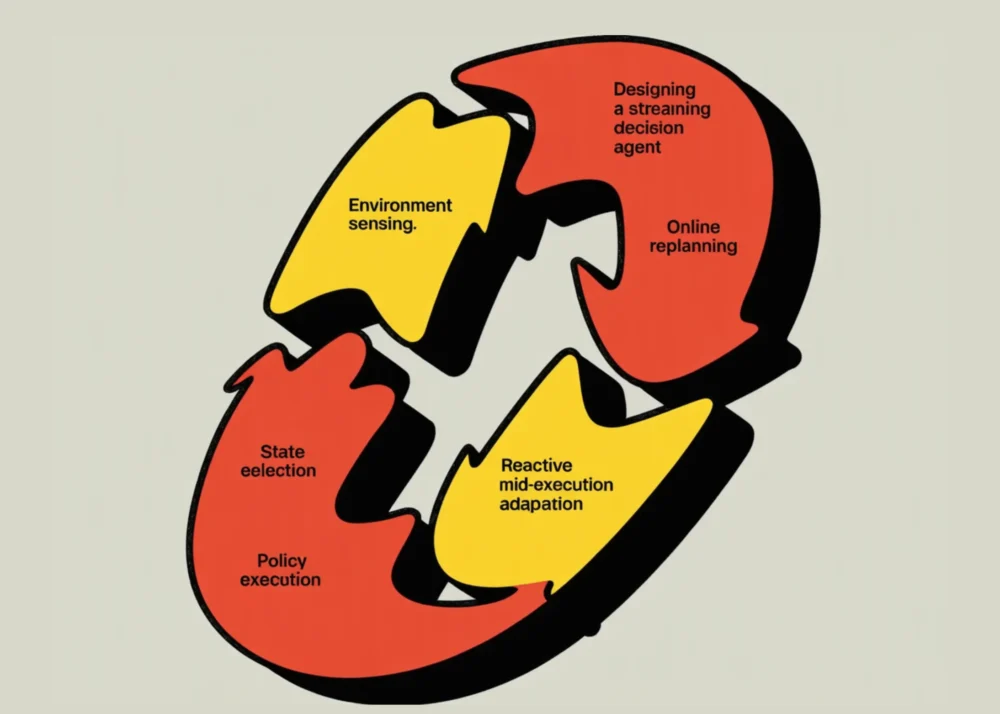

The landscape of artificial intelligence is undergoing a fundamental shift from static, prompt-based interactions toward "Agentic AI"—systems capable of independent reasoning, environmental interaction, and real-time adaptation. Recent developments in the field have highlighted the necessity of Streaming Decision Agents, which are designed to operate within non-stationary environments where obstacles move and objectives shift. Unlike traditional AI models that generate a complete plan before execution, these modern agents utilize a receding-horizon control loop, committing only to immediate actions while continuously re-evaluating their trajectory based on live telemetry. This approach addresses a critical vulnerability in autonomous systems: the tendency to follow "stale" trajectories that no longer reflect the physical or digital reality of the environment.

The Evolution of Reactive Autonomy

For decades, pathfinding in robotics and software agents relied heavily on static algorithms like A* or Dijkstra’s. While effective in controlled environments, these methods often fail in "online" scenarios where the world changes after the initial calculation is made. The introduction of the Streaming Decision Agent framework represents a significant leap forward in solving this problem. By integrating structured reasoning updates—streamed in real-time—the agent provides a transparent window into its "thought process," allowing human supervisors to monitor decision-making as it happens.

The architecture of such an agent is built upon four pillars: environmental perception, incremental reasoning, risk-aware decision-making, and structured event streaming. By employing tools like Pydantic for data validation, developers can ensure that every "thought" the agent emits follows a rigorous schema, so that malformed or incomplete events are rejected before they reach a supervisor or a downstream system during high-stakes operations.

Technical Architecture and Environmental Simulation

The foundational testbed for these agents is often a Dynamic Grid World. In recent technical demonstrations, researchers have utilized an 18×10 grid characterized by a 0.18 obstacle ratio. This environment is intentionally designed to be hostile to static planning; obstacles spawn and disappear with specific probabilities (0.25 and 0.15, respectively), and the target objective "jitters" or moves every few cycles.
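A minimal sketch of such an environment follows, using the grid size and probabilities quoted above. The function names, and the choice to spawn or remove at most one obstacle per shuffle, are assumptions made for illustration; the source gives the probabilities but not the exact mechanics.

```python
import random

WIDTH, HEIGHT = 18, 10          # grid size from the text
OBSTACLE_RATIO = 0.18           # initial fraction of blocked cells
P_SPAWN, P_VANISH = 0.25, 0.15  # spawn / disappear probabilities from the text


def make_world(rng):
    """Return a set of obstacle cells covering about OBSTACLE_RATIO of the grid."""
    cells = [(x, y) for x in range(WIDTH) for y in range(HEIGHT)]
    count = int(len(cells) * OBSTACLE_RATIO)
    return set(rng.sample(cells, count))


def shuffle_world(obstacles, protected, rng):
    """Apply one shuffle and return a new obstacle set (the input is not mutated).

    Assumption: each shuffle spawns at most one obstacle and removes at most one.
    Cells in `protected` (e.g. the agent and the target) never receive a new obstacle.
    """
    obstacles = set(obstacles)
    if rng.random() < P_SPAWN:
        free = [(x, y) for x in range(WIDTH) for y in range(HEIGHT)
                if (x, y) not in obstacles and (x, y) not in protected]
        if free:
            obstacles.add(rng.choice(free))
    if obstacles and rng.random() < P_VANISH:
        # Sort before choosing so a seeded run is reproducible.
        obstacles.remove(rng.choice(sorted(obstacles)))
    return obstacles
```

Passing a seeded `random.Random` instance keeps runs reproducible, which helps when comparing how different agents cope with the same sequence of changes.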

The world-transition logic is governed by a step-count mechanism. Every six steps, the environment undergoes a "shuffle," simulating the unpredictability of real-world scenarios such as pedestrian traffic in autonomous driving or fluctuating network conditions in automated cybersecurity response. To navigate this, the agent employs an online A* planner. However, the innovation lies not in the A* algorithm itself, but in how its output is managed. The agent utilizes a "horizon" of six moves. Even if a path to the goal requires 30 steps, the agent only commits to the first six, knowing that the environment will likely change before it reaches the seventh.
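The idea of planning a full path but committing only to its first six moves can be sketched as below. This is a plain grid A* with a Manhattan heuristic and unit step costs; the function names `astar` and `commit` are hypothetical and not taken from the original demonstration.

```python
import heapq

HORIZON = 6  # number of moves committed per plan, per the text


def manhattan(a, b):
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def astar(start, goal, obstacles, width, height):
    """Return the cells from start to goal (start excluded), or None if unreachable."""
    frontier = [(manhattan(start, goal), 0, start)]  # (f, g, cell)
    came_from = {start: None}
    cost = {start: 0}
    while frontier:
        _, g, cur = heapq.heappop(frontier)
        if cur == goal:
            path = []
            while cur != start:
                path.append(cur)
                cur = came_from[cur]
            return path[::-1]
        if g > cost[cur]:
            continue  # stale queue entry; a cheaper route was already found
        x, y = cur
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if not (0 <= nxt[0] < width and 0 <= nxt[1] < height):
                continue
            if nxt in obstacles:
                continue
            new_g = g + 1
            if new_g < cost.get(nxt, float("inf")):
                cost[nxt] = new_g
                came_from[nxt] = cur
                heapq.heappush(frontier, (new_g + manhattan(nxt, goal), new_g, nxt))
    return None


def commit(path, horizon=HORIZON):
    """Keep only the first `horizon` cells of a plan; the remainder is discarded."""
    return path[:horizon] if path else []
```

For example, `commit(astar((0, 0), (17, 9), set(), 18, 10))` plans the full corner-to-corner route on an empty grid but returns only its first six cells. The discarded tail costs nothing to throw away, because the next replan recomputes it against the current state of the world.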

Chronology of a Streaming Decision Loop

The operational lifecycle of a Streaming Decision Agent follows a strict chronological sequence designed to maximize both efficiency and safety.

- Initialization and Observation: The agent begins by capturing a wall-clock timestamp and reading the initial state of the grid. It emits an "observe" event, creating a baseline for the human operator.

- Strategic Planning: The A* planner searches for a path to the target, using Manhattan distance as its heuristic and treating all currently known obstacles as blocked. If a path is found, the agent prepares a sequence of actions (Up, Down, Left, Right).

- Risk Evaluation and Override: Before any physical or digital move is executed, the agent runs a local "risk gate." It evaluates the immediate neighbors of its next position. If the risk exceeds a predefined threshold (e.g., 0.85), the agent triggers an "override," choosing a safer alternative move or opting to "wait" (Stay) until the environment stabilizes.

- Action and Feedback: The agent executes the chosen action and immediately receives a "surprise" report from the environment. This report details whether the target moved or if new obstacles appeared.

- Receding Horizon Re-evaluation: If a "surprise" is detected, the agent invalidates its current plan and returns to the Strategic Planning step, ensuring that it never acts on outdated information.
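The sequence above can be sketched as a small control loop. Everything here is illustrative: `run_agent`, the toy one-dimensional `LineWorld`, and `line_plan` are hypothetical stand-ins for the grid world and A* planner, events are plain dictionaries, and an override simply waits rather than searching for a safer alternative move.

```python
import time


def run_agent(world, plan_fn, risk_fn, max_steps=120, horizon=6, risk_threshold=0.85):
    """Run a receding-horizon loop and return the list of emitted events."""
    events, plan = [], []

    def emit(kind, step, msg):
        events.append({"t": time.time(), "kind": kind, "step": step, "msg": msg})

    emit("observe", 0, f"start at {world.pos}, goal at {world.goal}")
    for step in range(1, max_steps + 1):
        if world.pos == world.goal:
            emit("done", step, "goal reached")
            break
        if not plan:  # no valid plan: replan and commit to the horizon only
            plan = list(plan_fn(world) or [])[:horizon]
            emit("plan", step, f"committing to next {len(plan)} moves")
        move = plan.pop(0) if plan else "stay"
        if move != "stay" and risk_fn(world, move) > risk_threshold:
            emit("override", step, f"{move} too risky, waiting")
            move, plan = "stay", []  # simplification: wait, then replan
        surprised = world.apply(move)
        emit("act", step, f"executed {move}")
        if surprised:
            plan = []  # the world changed: drop the stale plan
    return events


class LineWorld:
    """Toy 1-D world (an assumption for illustration): the goal moves once, at tick 3."""

    def __init__(self):
        self.pos, self.goal, self.ticks = 0, 5, 0

    def apply(self, move):
        self.pos += {"right": 1, "left": -1, "stay": 0}[move]
        self.ticks += 1
        if self.ticks == 3:
            self.goal += 2
            return True  # report a surprise to the agent
        return False


def line_plan(world):
    delta = world.goal - world.pos
    return ["right" if delta > 0 else "left"] * abs(delta)
```

Calling `run_agent(LineWorld(), line_plan, lambda w, m: 0.0)` walks the agent toward the goal, drops its plan when the goal jumps, and replans from its new position. Keeping the planner and risk model as injected functions means the same loop can later drive a grid planner without changes.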

Data-Driven Decision Making and Safety Protocols

The effectiveness of the Streaming Decision Agent is measured through a variety of metrics, including the "override rate" and "replanning frequency." In a typical execution of 120 maximum steps, a successful agent might undergo 15 to 20 full replans. This high frequency of re-evaluation is not a sign of failure but a metric of "environmental sensitivity."

Safety is enforced through a lightweight risk model. The agent calculates risk based on two primary factors: proximity to obstacles and proximity to the grid edges. The formula 0.25 * near_obstacles + 0.15 * edge_proximity allows the agent to quantify the danger of a specific move. If a planned path takes the agent through a narrow corridor of moving obstacles, the risk gate will trigger an "alternative search depth," looking for a path that may be longer but is statistically safer.
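The formula can be written out as a short function. The source does not define its two inputs precisely, so this sketch assumes that `near_obstacles` counts obstacles in the eight cells surrounding the candidate position, that `edge_proximity` is 1 when the cell lies on the grid border and 0 otherwise, and that the result is clamped to 1.0. The 0.85 threshold is the example value quoted earlier in the article.

```python
RISK_THRESHOLD = 0.85  # example threshold quoted in the article


def move_risk(cell, obstacles, width, height):
    """Score a candidate cell with 0.25 * near_obstacles + 0.15 * edge_proximity."""
    x, y = cell
    neighbors = [(x + dx, y + dy)
                 for dx in (-1, 0, 1) for dy in (-1, 0, 1)
                 if (dx, dy) != (0, 0)]
    # Assumption: count obstacles in the 8-cell neighborhood.
    near_obstacles = sum(1 for n in neighbors if n in obstacles)
    # Assumption: binary indicator for cells on the outer border.
    edge_proximity = 1 if x in (0, width - 1) or y in (0, height - 1) else 0
    return min(1.0, 0.25 * near_obstacles + 0.15 * edge_proximity)


def too_risky(cell, obstacles, width, height, threshold=RISK_THRESHOLD):
    """Return True when the risk gate should override the planned move."""
    return move_risk(cell, obstacles, width, height) > threshold
```

Under these assumptions, three adjacent obstacles alone score 0.75 and pass the gate, while three adjacent obstacles next to a border cell score about 0.9 and trigger an override.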

The use of Pydantic for "StreamEvents" is a crucial safety feature. Each event contains a t (wall-clock time), kind (event type), step (counter), and a msg (human-readable summary). This structured payload ensures that the agent’s internal state is always loggable and auditable, a requirement that is increasingly becoming a legal necessity in industries like autonomous transport and healthcare AI.
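The four-field payload might look like the sketch below. To keep the example dependency-free it uses a standard-library dataclass with hand-written checks in place of Pydantic; in the Pydantic version, a `BaseModel` subclass with typed fields would perform this validation. The set of allowed `kind` values is an assumption, since the article only names "observe" and "override" explicitly.

```python
import json
import time
from dataclasses import asdict, dataclass

# Assumed event kinds; only "observe" and "override" are named in the article.
ALLOWED_KINDS = {"observe", "plan", "override", "act", "done"}


@dataclass(frozen=True)
class StreamEvent:
    t: float    # wall-clock time
    kind: str   # event type
    step: int   # step counter
    msg: str    # human-readable summary

    def __post_init__(self):
        # Manual checks standing in for schema validation.
        if self.kind not in ALLOWED_KINDS:
            raise ValueError(f"unknown event kind: {self.kind!r}")
        if isinstance(self.step, bool) or not isinstance(self.step, int) or self.step < 0:
            raise ValueError("step must be a non-negative integer")
        if not isinstance(self.msg, str) or not self.msg:
            raise ValueError("msg must be a non-empty string")

    def to_json(self):
        """Serialize the event as one JSON object, suitable for a log line."""
        return json.dumps(asdict(self))


event = StreamEvent(t=time.time(), kind="plan", step=3,
                    msg="Plan updated: committing to next 6 moves")
print(event.to_json())
```

Rejecting a bad event at construction time, rather than when a log is read back, means the audit trail never contains a record that a dashboard or reviewer cannot interpret.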

Industry Implications and Expert Analysis

The shift toward streaming, incremental reasoning has profound implications for the AI industry. Industry analysts suggest that this architecture is the "missing link" between Large Language Models (LLMs) and physical robotics. While LLMs are excellent at high-level reasoning, they often lack the "proprioception" or environmental awareness required for real-time action. By wrapping a reasoning engine in a streaming decision loop, developers can create "System 2" thinkers—agents that slow down to evaluate risks when the environment becomes volatile.

"The goal is transparency," notes one senior AI researcher. "When an autonomous drone decides to veer off-course, we need to know within milliseconds whether it was avoiding a bird, responding to a gust of wind, or suffering a sensor failure. Streaming reasoning updates provide that ‘black box’ data in real-time, rather than after a crash."

Furthermore, the "receding-horizon" approach is being hailed as a solution to the computational "bottleneck" of AI. Rather than wasting GPU cycles calculating a 1,000-step path that will inevitably change, the agent focuses its "mental energy" on the immediate future. This makes the system significantly more scalable for edge computing devices with limited processing power.

Broader Impact on Human-AI Collaboration

The social impact of Streaming Decision Agents extends to human-AI collaboration. In complex environments like emergency response or financial trading, humans are often hesitant to trust autonomous systems because their logic is opaque. A streaming agent that outputs messages like "Plan updated: committing to next 6 moves" or "Risk avoidance override: planned move invalid" builds a "mental model" for the human supervisor.

This transparency allows for "interventional autonomy," where a human can see a planned trajectory on a dashboard and intervene only if they disagree with the agent’s risk assessment. This moves the human role from "active pilot" to "strategic overseer," a shift that is expected to increase productivity across automated sectors by up to 40% over the next decade.

Conclusion and Future Outlook

The development of the Streaming Decision Agent, as demonstrated in the dynamic grid world tutorial, provides a blueprint for more resilient AI. By combining the heuristic power of A* with the safety-first approach of receding-horizon control and structured streaming, researchers have created a system that "thinks while it acts."

Looking ahead, the next frontier for these agents involves "multi-agent coordination," where dozens of streaming agents must negotiate their paths in the same environment without a central controller. As these systems move from 18×10 grids into the complexities of the real world, the principles of incremental reasoning and transparent communication will remain the cornerstones of safe and reliable autonomy. The ability to adapt mid-run, rather than unthinkingly following a stale trajectory, is what will ultimately define the success of AI in the physical world.