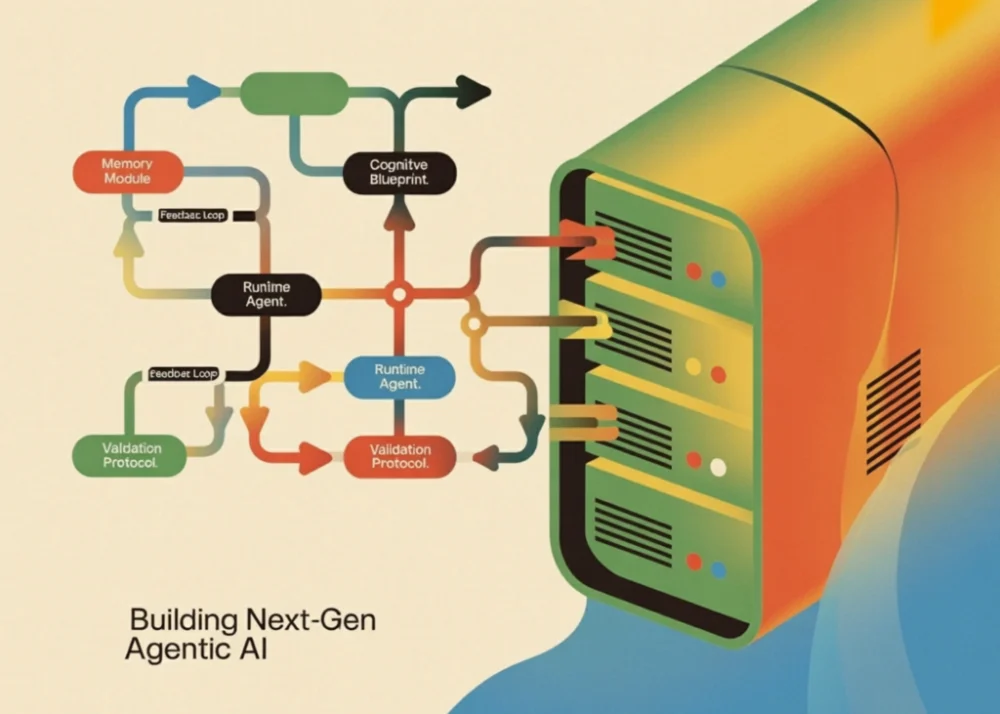

Artificial intelligence is shifting from simple conversational interfaces to complex, autonomous agentic systems capable of independent reasoning and multi-step execution. Central to this transition is the "Cognitive Blueprint," a structured architectural framework that defines an agent’s identity, goals, planning strategies, and memory constraints. By separating an agent’s personality and capabilities (defined in portable YAML blueprints) from the underlying runtime execution engine, developers can create specialized AI entities that plan, execute, validate, and self-correct within explicit, inspectable constraints. This modular approach, exemplified by the emerging Auton framework, addresses long-standing challenges in AI reliability, auditability, and task-specific optimization.

The Architecture of Cognitive Blueprints

The core of the new agentic paradigm lies in the "Cognitive Blueprint," a comprehensive set of parameters that govern how a large language model (LLM) interacts with its environment. Unlike traditional prompting, which relies on a single string of instructions, a cognitive blueprint uses strongly typed models to define the boundaries of AI behavior. These blueprints are composed of several critical modules: identity, memory, planning, and validation.
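To make "strongly typed" concrete, the four modules can be sketched as Python dataclasses. This is a minimal illustration and not Auton's actual schema: every class name, field name, and default value here is an assumption, and the input dict stands in for whatever a YAML parser would return.

```python
from dataclasses import dataclass
from enum import Enum


class PlanningStrategy(Enum):
    SEQUENTIAL = "sequential"
    HIERARCHICAL = "hierarchical"
    REACTIVE = "reactive"


@dataclass(frozen=True)
class Identity:
    name: str
    version: str
    persona: str


@dataclass(frozen=True)
class MemoryConfig:
    window_size: int = 10  # assumed default, not taken from the article

    def __post_init__(self) -> None:
        if self.window_size < 1:
            raise ValueError("window_size must be at least 1")


@dataclass(frozen=True)
class ValidationConfig:
    min_response_length: int = 20  # assumed default
    require_reasoning: bool = True
    forbidden_phrases: tuple = ()


@dataclass(frozen=True)
class CognitiveBlueprint:
    identity: Identity
    memory: MemoryConfig
    planning: PlanningStrategy
    validation: ValidationConfig


def blueprint_from_dict(raw: dict) -> CognitiveBlueprint:
    """Build a typed blueprint from a plain dict, such as a parsed YAML file."""
    validation = dict(raw.get("validation", {}))
    validation["forbidden_phrases"] = tuple(validation.get("forbidden_phrases", ()))
    return CognitiveBlueprint(
        identity=Identity(**raw["identity"]),
        memory=MemoryConfig(**raw.get("memory", {})),
        # Enum lookup raises ValueError for an unknown strategy name.
        planning=PlanningStrategy(raw["planning"]),
        validation=ValidationConfig(**validation),
    )
```

The point of the typed layer is that a misspelled strategy or an unexpected key fails when the blueprint is loaded, rather than surfacing later as odd agent behavior.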

The identity module establishes the agent’s name, versioning, and specific persona, ensuring that the AI remains consistent in its professional tone and scope. For instance, a "ResearchBot" and a "DataAnalystBot" may utilize the same underlying LLM, but their blueprints dictate vastly different approaches to problem-solving. While the ResearchBot might prioritize citing methods and showing step-by-step reasoning, the DataAnalystBot focuses on identifying trends, anomalies, and descriptive statistics within numerical datasets.

Memory management is governed by the blueprint as well. Rather than maintaining a raw, ever-growing transcript of interactions, these frameworks use a memory manager that categorizes memory into short-term, episodic, and persistent types. When a conversation exceeds a predefined "window size," the runtime engine automatically compresses earlier interactions into a concise summary. The agent thus retains the context it needs for long-running tasks without exceeding the token limits or computational constraints of the underlying model.
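The window-and-summary behavior can be sketched in a few lines. This is an illustrative model only: the `MemoryManager` class and its method names are assumptions, and `naive_summarizer` is a placeholder for what would be an LLM summarization call in a real runtime.

```python
from dataclasses import dataclass, field
from typing import Callable


def naive_summarizer(previous_summary: str, turns: list) -> str:
    """Placeholder for an LLM call: keeps the first 40 characters of each turn."""
    pieces = [previous_summary] if previous_summary else []
    pieces += [turn[:40] for turn in turns]
    return " | ".join(pieces)


@dataclass
class MemoryManager:
    window_size: int
    summarize: Callable[[str, list], str] = naive_summarizer
    summary: str = ""
    recent: list = field(default_factory=list)

    def add(self, turn: str) -> None:
        self.recent.append(turn)
        overflow = len(self.recent) - self.window_size
        if overflow > 0:
            # Fold the oldest turns into the running summary; keep the newest verbatim.
            evicted, self.recent = self.recent[:overflow], self.recent[overflow:]
            self.summary = self.summarize(self.summary, evicted)

    def context(self) -> str:
        """Text handed to the model: compressed history first, then recent turns."""
        header = [f"Summary of earlier turns: {self.summary}"] if self.summary else []
        return "\n".join(header + self.recent)
```

With `window_size=2`, adding four turns leaves the last two verbatim and the first two folded into the summary, so the prompt grows with the summary length rather than with the full transcript.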

Structured Planning and Execution Strategies

One of the most significant advancements in this framework is the formalization of planning strategies. The Auton-style runtime engine lets agents choose among sequential, hierarchical, and reactive planning. In a sequential strategy, the agent breaks a user task into a linear series of steps, where each step’s output informs the next. A hierarchical strategy, by contrast, allows the agent to create sub-tasks and delegate them, which is particularly useful for complex data analysis involving multiple statistical operations.
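The difference between the sequential and hierarchical strategies is easiest to see in how results flow. The sketch below is a simplified model under stated assumptions: `Task`, `run_sequential`, and `run_hierarchical` are hypothetical names, plans are hand-written rather than produced by an LLM, and the reactive strategy is not modeled.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class Task:
    name: str
    run: Optional[Callable[[Any], Any]] = None    # leaf work, if any
    subtasks: list = field(default_factory=list)  # non-empty for a parent task


def run_sequential(steps: list, initial: Any) -> Any:
    """Each step receives the previous step's output."""
    value = initial
    for step in steps:
        value = step.run(value)
    return value


def run_hierarchical(task: Task, data: Any) -> Any:
    """A parent delegates the same input to each sub-task and collects results by name."""
    if not task.subtasks:
        return task.run(data)
    return {sub.name: run_hierarchical(sub, data) for sub in task.subtasks}


pipeline = [
    Task("parse", lambda text: [float(x) for x in text.split(",")]),
    Task("total", sum),
]
analysis = Task("analyze", subtasks=[
    Task("mean", lambda xs: sum(xs) / len(xs)),
    Task("range", subtasks=[Task("min", min), Task("max", max)]),
])

print(run_sequential(pipeline, "1,2,3"))        # 6.0
print(run_hierarchical(analysis, [1, 2, 3, 6]))  # {'mean': 3.0, 'range': {'min': 1, 'max': 6}}
```

A sequential plan is a chain, so a failure in one step blocks everything after it; a hierarchical plan is a tree, so independent statistics can be computed and reported side by side.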

The execution phase is decoupled from the planning phase so that each can be inspected on its own. Once a plan is generated in a structured format (typically JSON), it is handed over to an "Executor." This component invokes specific tools from a "Tool Registry." These tools are not prompts but ordinary Python functions that perform deterministic tasks such as mathematical calculations, unit conversions, or date arithmetic. By delegating such work to the tool registry, the agent sidesteps the "hallucination" that LLMs are prone to when performing arithmetic or factual lookups, relying instead on verified code to produce the result.
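A minimal sketch of the plan-to-executor handoff follows. The JSON plan shape (`steps`, `tool`, `args`), the `ToolRegistry` class, and both example tools are assumptions made for illustration; the article does not specify Auton's actual plan schema.

```python
import json
from datetime import date, timedelta
from typing import Callable


class ToolRegistry:
    """Maps tool names to plain Python functions."""

    def __init__(self) -> None:
        self._tools = {}

    def register(self, name: str) -> Callable:
        def decorator(fn: Callable) -> Callable:
            self._tools[name] = fn
            return fn
        return decorator

    def call(self, name: str, **kwargs):
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](**kwargs)


registry = ToolRegistry()


@registry.register("percentage")
def percentage(percent: float, of: float) -> float:
    return of * percent / 100


@registry.register("add_days")
def add_days(start: str, days: int) -> str:
    return (date.fromisoformat(start) + timedelta(days=days)).isoformat()


def execute(plan_json: str, tools: ToolRegistry) -> list:
    """Run every step of a JSON plan through the registry, in order."""
    plan = json.loads(plan_json)
    return [tools.call(step["tool"], **step["args"]) for step in plan["steps"]]


plan = """
{"steps": [
  {"tool": "percentage", "args": {"percent": 15, "of": 2500}},
  {"tool": "add_days", "args": {"start": "2024-02-28", "days": 2}}
]}
"""
print(execute(plan, registry))  # [375.0, '2024-03-01']
```

The LLM's only job here is to choose tool names and arguments; the numbers and dates themselves come from deterministic code, and a plan that names an unregistered tool fails loudly.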

The Validation Loop: Ensuring Accuracy and Safety

A defining feature of modern autonomous frameworks is the inclusion of a dedicated "Validator" module. After the executor completes its tasks and synthesizes a final answer, the validator audits the response against the constraints defined in the cognitive blueprint. This step is crucial for enterprise applications where accuracy and adherence to safety protocols are non-negotiable.

The validator checks for several criteria:

- Response Length: Ensuring the answer is substantive enough to address the query.

- Reasoning Requirements: Verifying that the agent has provided a "chain of thought" or visible logic for its conclusions.

- Forbidden Phrases: Filtering out undesirable language or "I don’t know" responses when a tool-based solution is available.

- On-Topic Verification: Using a secondary LLM-based check to ensure the final output remains aligned with the original user task.
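The four checks above can be sketched as a single function that returns a list of issues. All names and thresholds are assumptions, the reasoning check is reduced to a search for marker words, and `keyword_overlap` is only a crude stand-in for the secondary LLM-based on-topic check.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ValidationRules:
    min_length: int = 40  # assumed threshold
    require_reasoning: bool = True
    reasoning_markers: tuple = ("because", "therefore", "step")
    forbidden_phrases: tuple = ("i don't know",)


def keyword_overlap(task: str, response: str) -> bool:
    """Stand-in for the LLM check: does any longer task word appear in the response?"""
    keywords = {w.strip(".,?!").lower() for w in task.split() if len(w) >= 4}
    text = response.lower()
    return not keywords or any(k in text for k in keywords)


def validate(
    task: str,
    response: str,
    rules: ValidationRules,
    on_topic: Callable[[str, str], bool] = keyword_overlap,
) -> list:
    """Return a list of human-readable issues; an empty list means the response passed."""
    issues = []
    text = response.lower()
    if len(response.strip()) < rules.min_length:
        issues.append(f"response shorter than {rules.min_length} characters")
    if rules.require_reasoning and not any(m in text for m in rules.reasoning_markers):
        issues.append("no visible reasoning")
    for phrase in rules.forbidden_phrases:
        if phrase in text:
            issues.append(f"forbidden phrase: {phrase!r}")
    if not on_topic(task, response):
        issues.append("response appears off-topic")
    return issues
```

Returning issues as text, rather than a bare pass/fail flag, is a deliberate choice: the same strings can be fed back to the agent as feedback on a retry.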

If a response fails validation, the runtime engine initiates a "Retry Loop." The agent is told which specific checks its previous attempt failed and is asked to generate a new, improved plan. This self-correcting mechanism mimics human iterative problem-solving and is intended to raise the success rate of complex autonomous tasks.
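The retry loop itself is a small piece of control flow. In this sketch, `run_with_retries` is a hypothetical name, the attempt cap of three is an assumption, and `toy_agent` and `toy_validate` are stubs standing in for the LLM-driven planner and the validator.

```python
from typing import Callable


def run_with_retries(
    task: str,
    attempt: Callable[[str, list], str],
    validate: Callable[[str], list],
    max_attempts: int = 3,
) -> tuple:
    """Return (response or None, history of (attempt number, response, issues))."""
    feedback = []
    history = []
    for n in range(1, max_attempts + 1):
        response = attempt(task, feedback)
        issues = validate(response)
        history.append((n, response, issues))
        if not issues:
            return response, history
        feedback = issues  # the next attempt sees what went wrong
    return None, history


def toy_agent(task: str, feedback: list) -> str:
    # Stub: answers tersely at first, elaborates once told the answer was too short.
    if "response too short" in feedback:
        return "15% of 2,500 is 0.15 * 2500 = 375."
    return "375"


def toy_validate(response: str) -> list:
    return [] if len(response) >= 10 else ["response too short"]


answer, history = run_with_retries("Calculate 15% of 2,500", toy_agent, toy_validate)
print(answer)        # 15% of 2,500 is 0.15 * 2500 = 375.
print(len(history))  # 2
```

Capping the number of attempts matters: without a bound, an agent that can never satisfy the validator would loop indefinitely.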

Chronology of Agentic AI Development

The journey toward cognitive blueprints and modular runtimes has been marked by several key milestones in the AI research community:

- Pre-2022 (The Chatbot Era): AI interaction was primarily characterized by single-turn prompt-and-response. Models were static and lacked the ability to interact with external tools or maintain complex state.

- Late 2022 (The ReAct Paradigm): Researchers introduced the "Reason + Act" (ReAct) framework, allowing LLMs to generate reasoning traces and task-specific actions in an interleaved manner. This was an influential early step toward agentic behavior.

- 2023 to Early 2024 (Framework Proliferation): Tools like LangChain and AutoGPT popularized the idea of "agents," but these systems often suffered from "infinite loops" and unpredictable behavior due to a lack of structured constraints.

- Late 2024 to Present (The Blueprint Era): The focus shifted toward "Cognitive Architectures." Frameworks like the one described in the Auton research emphasize modularity, portability, and formal validation. The use of YAML-based blueprints allows for "Agent Portability," where a single runtime can host multiple agent personalities by simply swapping a configuration file.

Comparative Performance and Portability

To demonstrate the efficacy of this modular approach, recent experiments compared different agent blueprints performing the same task: "Calculate 15% of 2,500 and tell me the result."

The "ResearchBot" blueprint, which emphasizes sequential planning and calculation tools, approached the task by first identifying the mathematical expression and then invoking the calculator tool. It produced a response focused on the methodology of the calculation. In contrast, the "DataAnalystBot," using a hierarchical strategy, treated the request as a statistical query, providing not just the answer but also a brief contextual analysis of the figure.

Despite their different behaviors, both agents used the same underlying runtime engine and tool registry. This portability matters to developers because it lets a fleet of agents scale quickly. A company could deploy dozens of specialized agents (one for HR, one for technical support, one for financial auditing), all running on the same stable infrastructure and differentiated only by their cognitive blueprints.
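The portability claim can be illustrated with a toy runtime in which the only thing that changes between agents is the blueprint object. Everything here is hypothetical, including the `answer_template` field used to imitate persona-driven phrasing; the printed strings are invented for illustration and are not the outputs reported in the experiment. The arithmetic is the part that is fixed: 15% of 2,500 is 2500 × 15 / 100 = 375.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Blueprint:
    name: str
    strategy: str         # recorded for completeness; not acted on in this sketch
    answer_template: str  # hypothetical field standing in for persona-driven phrasing


# One shared tool registry, reduced to a dict for brevity.
TOOLS = {"percentage": lambda percent, of: of * percent / 100}


def run(blueprint: Blueprint, percent: float, of: float) -> str:
    """Shared runtime: same tool call for every agent, blueprint-specific phrasing."""
    value = TOOLS["percentage"](percent=percent, of=of)
    return blueprint.answer_template.format(
        name=blueprint.name, percent=percent, of=of, value=value
    )


research_bot = Blueprint(
    name="ResearchBot",
    strategy="sequential",
    answer_template="[{name}] Method: {of} * {percent} / 100 = {value:g}",
)
data_analyst_bot = Blueprint(
    name="DataAnalystBot",
    strategy="hierarchical",
    answer_template="[{name}] Result: {value:g}, i.e. a {percent}% share of {of}",
)

print(run(research_bot, 15, 2500))      # [ResearchBot] Method: 2500 * 15 / 100 = 375
print(run(data_analyst_bot, 15, 2500))  # [DataAnalystBot] Result: 375, i.e. a 15% share of 2500
```

Both calls go through the same `run` function and the same `TOOLS` entry, so the numeric result cannot diverge between agents; only the presentation does.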

Industry Implications and Future Outlook

The transition to blueprint-driven AI agents has profound implications for the software industry and beyond. For developers, it means a shift from "prompt engineering" to "agent architecture." The ability to define an agent’s "DNA" in a YAML file makes AI systems more auditable and easier to version-control.

In the enterprise sector, these frameworks help address the "black box" problem. Because every step of the agent’s plan is recorded in an "Execution Trace," human supervisors can see which steps the agent planned, which tools it invoked, and what each call returned. This level of transparency is essential for highly regulated industries such as finance, healthcare, and law.
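An execution trace can be as simple as an append-only list of structured events wrapped around each tool call. The sketch below assumes its own event fields (`step`, `tool`, `args`, `result`, `elapsed_ms`); the article does not describe the actual trace format.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Callable


@dataclass
class TraceEvent:
    step: int
    tool: str
    args: dict
    result: object
    elapsed_ms: float


@dataclass
class ExecutionTrace:
    events: list = field(default_factory=list)

    def record(self, tool_name: str, fn: Callable, **args):
        """Call fn(**args), log what was called and what came back, return the result."""
        start = time.perf_counter()
        result = fn(**args)
        elapsed_ms = (time.perf_counter() - start) * 1000
        self.events.append(
            TraceEvent(len(self.events) + 1, tool_name, args, result, elapsed_ms)
        )
        return result

    def to_json(self) -> str:
        # default=str keeps the dump from failing on non-JSON-serializable results.
        return json.dumps([asdict(e) for e in self.events], indent=2, default=str)


trace = ExecutionTrace()
value = trace.record("percentage", lambda percent, of: of * percent / 100,
                     percent=15, of=2500)
print(value)  # 375.0
print(trace.to_json())
```

Serializing the trace to JSON makes it easy to store alongside the final answer, so a reviewer can replay which tool produced which number.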

Furthermore, the integration of a "Tool Registry" allows AI to move beyond the digital realm. By registering tools that interface with APIs for IoT devices, manufacturing systems, or cloud infrastructure, these agents can act as autonomous operators in physical and digital environments.

However, challenges remain. The "think-before-acting" loops and multiple validation checks increase the computational cost and latency of each interaction. As LLM providers move toward more efficient models, such as the "GPT-4o-mini" used in these demonstrations, the balance between reasoning depth and operational cost will continue to be a primary area of research.

Conclusion: A Foundation for Autonomous Innovation

The development of structured cognitive blueprints and modular runtime engines represents a maturation of the AI field. By treating AI behavior as a programmable, validatable, and portable asset, the industry is moving away from the unpredictability of early chatbots toward the reliability of autonomous systems. As demonstrated by the Auton framework, the combination of identity, planning, memory, and validation provides a robust foundation for the next generation of AI experimentation. This architectural shift is meant to ensure that as AI models become more powerful, they also become more controllable, specialized, and integrated into the complex workflows of the modern world.