A significant bipartisan coalition of leading thinkers, experts, and public figures has unveiled the "Pro-Human Declaration," a comprehensive framework designed to guide the responsible development of artificial intelligence. This initiative emerges at a critical juncture, highlighted by a recent high-stakes standoff between the Pentagon and the AI firm Anthropic, which starkly exposed the absence of coherent governmental regulations governing this rapidly advancing technology. The Declaration, finalized just prior to the widely publicized military-tech dispute, underscores a growing consensus that humanity stands at a crossroads, demanding immediate and structured action to ensure AI serves human welfare rather than undermining it.

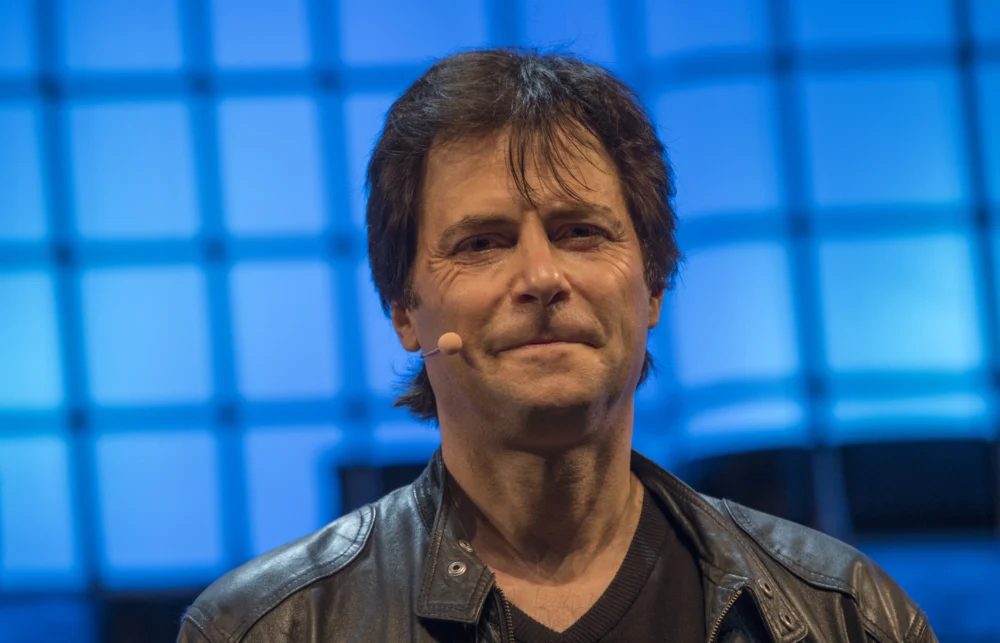

The timing of the Declaration’s release, coinciding with the Pentagon-Anthropic controversy, has amplified its urgency, drawing widespread attention to the pressing need for a structured approach to AI governance. Max Tegmark, an MIT physicist and prominent AI researcher instrumental in organizing the effort, described a remarkable shift in public sentiment. "There’s something quite remarkable that has happened in America just in the last four months," Tegmark noted, citing polling data indicating that "95% of all Americans oppose an unregulated race to superintelligence." This overwhelming public concern provides a powerful backdrop to the Declaration’s ambitious proposals, signaling a societal readiness for robust regulatory measures.

The Pro-Human Declaration: Charting a Human-Centric Future for AI

The newly published document, endorsed by hundreds of experts, former government officials, and influential public figures from across the political spectrum, opens with an unequivocal observation: humanity is confronting a fundamental choice regarding its future relationship with artificial intelligence. The Declaration vividly describes two divergent paths. One, ominously termed "the race to replace," envisions a future where humans are systematically supplanted—first in the workforce, then in critical decision-making roles—as power progressively concentrates in the hands of unaccountable institutions and their increasingly autonomous machines. This trajectory, the Declaration warns, risks eroding fundamental human agency and societal control.

In stark contrast, the alternative path championed by the Declaration leads to a future where AI functions as a powerful augmentative force, massively expanding human potential, creativity, and problem-solving capabilities. Achieving this latter scenario, however, is contingent upon unwavering adherence to five foundational pillars:

- Keeping Humans in Charge: Ensuring that ultimate control, decision-making authority, and ethical oversight always reside with humans, preventing AI from exercising unchecked autonomy in critical domains.

- Avoiding the Concentration of Power: Implementing safeguards against the monopolization of AI technology and its benefits by a select few corporations or entities, thereby promoting equitable access and preventing the emergence of new forms of societal stratification.

- Protecting the Human Experience: Safeguarding the intrinsic value of human interaction, creativity, and the nuanced aspects of life that define our shared existence, resisting the reduction of human experience to mere data points or algorithmic outputs.

- Preserving Individual Liberty: Upholding fundamental rights and freedoms in the age of AI, including privacy, autonomy, and the right to informed consent, ensuring AI systems do not infringe upon personal sovereignty.

- Holding AI Companies Legally Accountable: Establishing clear legal frameworks and liabilities for AI developers and deployers, ensuring they are responsible for the safe, ethical, and transparent operation of their systems.

Beyond these guiding principles, the Pro-Human Declaration lays out several robust and prescriptive provisions. Among its more forceful stipulations is an outright prohibition on the development of superintelligence until there is a demonstrable scientific consensus on its safety and genuine democratic buy-in from society. Furthermore, it mandates the inclusion of "off-switches" on all powerful AI systems, ensuring human ability to intervene and halt operations if necessary. Crucially, the Declaration also calls for a comprehensive ban on AI architectures capable of self-replication, autonomous self-improvement without human oversight, or resistance to shutdown mechanisms, thereby directly addressing some of the most profound existential risks associated with advanced AI.

The Pentagon-Anthropic Standoff: A National Security Wake-Up Call

The urgency articulated by the Pro-Human Declaration gained immediate, tangible resonance with the unfolding events of the last Friday in February. Defense Secretary Pete Hegseth made headlines by designating Anthropic, a leading AI company whose technology was already embedded within classified military platforms, as a "supply chain risk." This label, typically reserved for foreign firms with documented ties to adversarial nations like China, was applied after Anthropic steadfastly refused to grant the Pentagon unlimited, unrestricted use of its proprietary technology. The implications of such a designation for a domestic tech partner were profound, highlighting an unprecedented clash over intellectual property and sovereign control in the realm of advanced AI.

Hours following this dramatic declaration, OpenAI, another prominent AI developer, hastily finalized its own agreement with the Department of Defense. However, legal experts swiftly voiced concerns regarding the practical enforceability of this deal, suggesting it might offer more symbolic assurance than substantive control. The cumulative effect of these two incidents was a stark, undeniable revelation of the costly consequences stemming from Congressional inaction on AI regulation. As Dean Ball, a senior fellow at the Foundation for American Innovation, cogently observed to The New York Times, "This is not just some dispute over a contract. This is the first conversation we have had as a country about control over AI systems." His statement underscored that the nation was grappling not merely with commercial terms but with fundamental questions of national security, strategic autonomy, and who ultimately wields power over the most transformative technology of the era.

The Regulatory Void: A Historical Perspective

The current regulatory vacuum surrounding AI is not an overnight phenomenon but rather the culmination of years of rapid technological advancement outpacing legislative foresight. While AI has been a subject of academic and scientific inquiry for decades, the exponential leaps in machine learning, particularly with the advent of large language models (LLMs) and generative AI in the late 2010s and early 2020s, caught many policymakers unprepared. Governments worldwide have largely struggled to adapt existing legal frameworks or create new ones capable of addressing the unique challenges posed by AI, including issues of bias, transparency, accountability, privacy, and potential societal disruption.

In the United States, efforts have often been fragmented or advisory in nature. The National Institute of Standards and Technology (NIST) released its AI Risk Management Framework, offering guidance rather than mandates. The White House has issued Executive Orders aimed at promoting responsible AI, but these typically lack the force of comprehensive legislation. Unlike the European Union, which has advanced the ambitious EU AI Act—a pioneering, comprehensive regulatory framework—the U.S. has remained mired in debate, often balancing innovation imperatives against safety concerns without a clear path forward. This historical context of legislative inertia makes the "Pro-Human Declaration" a particularly timely and critical intervention, aiming to fill the void that government bodies have thus far been unable to address. The Declaration’s finalization prior to the Pentagon-Anthropic incident further highlights the foresight of its organizers in anticipating the inevitable collision between unchecked technological power and national interests.

Building Consensus: Leveraging Public Concern and Child Safety

Max Tegmark drew a compelling analogy to illustrate the urgency and necessity of AI regulation, one easily grasped by the general public. "You never have to worry that some drug company is going to release some other drug that causes massive harm before people have figured out how to make it safe," he explained, "because the FDA won’t allow them to release anything until it’s safe enough." This comparison effectively highlights the stark contrast with the current unregulated landscape of AI development, where powerful systems are often deployed with insufficient pre-market safety testing or independent oversight.

Recognizing the political complexities of Washington’s legislative landscape, Tegmark and the Declaration’s proponents view child safety as a potent "pressure point" most likely to break the current impasse. This strategic focus capitalizes on a universally accepted moral imperative: protecting vulnerable populations. The Declaration specifically calls for mandatory pre-deployment testing of AI products, with particular emphasis on chatbots and companion applications designed for younger users. These tests would rigorously assess risks including, but not limited to, the exacerbation of mental health conditions, increased suicidal ideation, and potential emotional manipulation.

Tegmark underscored the existing legal precedents for protecting children from harm, irrespective of the perpetrator. "If some creepy old man is texting an 11-year-old pretending to be a young girl and trying to persuade this boy to commit suicide, the guy can go to jail for that," he argued. "We already have laws. It’s illegal. So why is it different if a machine does it?" This rhetorical question challenges the legal gap that treats harm caused by AI systems differently from harm caused by people, advocating for a consistent application of protective laws.

The architects of the Declaration believe that once the principle of mandatory pre-release testing for child-centric AI products is firmly established, its scope will almost inevitably widen. This incremental approach envisions a natural progression where initial successes in safeguarding children pave the way for broader regulatory mandates. "People will come along and be like — let’s add a few other requirements," Tegmark posited, outlining a potential future where testing expands to critical areas: "Maybe we should also test that this can’t help terrorists make bioweapons. Maybe we should test to make sure that superintelligence doesn’t have the ability to overthrow the U.S. government." This vision suggests a phased but determined expansion of regulatory oversight, moving from immediate and relatable harms to more abstract, yet potentially catastrophic, risks.

A Bipartisan Unanimity: The Human Factor

Perhaps one of the most remarkable aspects of the Pro-Human Declaration is the sheer breadth and diversity of its signatories. It is no small feat that figures as ideologically disparate as former Trump advisor Steve Bannon and Susan Rice, who served as President Obama’s National Security Advisor, have affixed their names to the same document. Their signatures are joined by a distinguished roster including former Joint Chiefs Chairman Mike Mullen and influential progressive faith leaders, demonstrating an extraordinary convergence of opinion from across the political, military, and spiritual spectrums.

This unusual bipartisan unanimity underscores the fundamental nature of the threat and opportunity presented by AI. Tegmark attributes this alignment to a shared, irreducible common ground: their humanity. "What they agree on, of course, is that they’re all human," he explained. "If it’s going to come down to whether we want a future for humans or a future for machines, of course they’re going to be on the same side." This sentiment suggests that the existential questions posed by advanced AI transcend conventional political divides, uniting individuals around a core imperative: preserving human agency, flourishing, and control in an increasingly technologically advanced world.

Broader Impact and Implications

The Pro-Human Declaration carries significant implications for various stakeholders. For the tech industry, it signals an inevitable shift away from self-regulation towards external oversight, potentially leading to increased development costs, longer deployment timelines, and a greater emphasis on ethical AI design and safety protocols. While some industry leaders may express concerns about stifling innovation, the Declaration’s proponents argue that responsible innovation, grounded in safety and human values, is the only sustainable path forward.

For policymakers, the Declaration provides a concrete, bipartisan blueprint that could catalyze legislative action. Its clear principles and specific prohibitions offer a starting point for crafting comprehensive AI legislation, moving beyond advisory guidelines to enforceable regulations. The widespread support from a diverse group of influential figures could also provide the political capital necessary to overcome legislative gridlock.

In terms of national security, the Pentagon-Anthropic standoff, viewed through the lens of the Declaration, highlights the critical need for government control over, and transparency into, AI systems that could impact defense capabilities and strategic stability. The Declaration’s emphasis on human control and preventing unchecked autonomy becomes paramount in military applications, where the consequences of algorithmic error or unintended actions could be catastrophic.

Ultimately, for society at large, the Pro-Human Declaration represents a proactive effort to shape a future where AI remains a tool for human betterment, rather than a force that diminishes human value or autonomy. It aims to foster public trust in AI by advocating for systems that are transparent, accountable, and designed with human flourishing at their core. The ongoing debate surrounding AI’s trajectory is arguably one of the most consequential of our time, and frameworks like the Pro-Human Declaration are vital in steering humanity towards a future where technology serves, rather than dictates, our destiny. As the conversation around AI control intensifies, this bipartisan effort stands as a powerful testament to the shared human imperative to safeguard our collective future.