The traditional trajectory of large language model (LLM) development has long been dictated by the Chinchilla scaling laws, which suggest that superior performance is a direct function of increasing three primary variables: total floating-point operations (FLOPs), parameter counts, and the volume of training tokens. However, as the industry transitions from the initial excitement of model creation to the practicalities of massive-scale deployment, a significant bottleneck has emerged. Modern inference demands are consuming an ever-larger portion of global compute resources, and the push for "edge AI"—running sophisticated models on local hardware like smartphones and laptops—is constrained by strict memory footprints.

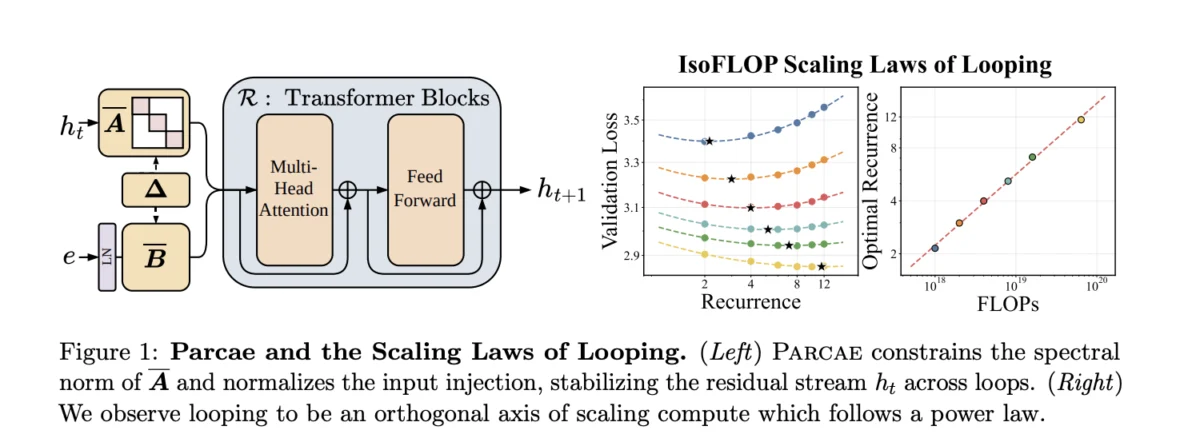

In response to these constraints, a collaborative research team from the University of California, San Diego (UCSD) and Together AI has unveiled Parcae. This novel architecture represents a fundamental shift in how model "depth" and "quality" are conceptualized. By utilizing a stable looped transformer design, Parcae allows a model to approach the quality of a traditional Transformer roughly twice its size without increasing its parameter count or weight memory. This addresses one of the most persistent challenges in neural architecture design: improving model quality through additional compute, independently of memory growth.

The Evolution of Weight Sharing and Looped Architectures

To understand the significance of Parcae, one must look at the history of architectural efficiency in deep learning. The concept of "weight sharing"—reusing the same parameters across different parts of a network—is not entirely new. It has its roots in Recurrent Neural Networks (RNNs) and was later explored in the context of Transformers through initiatives like ALBERT (A Lite BERT). The core idea of a "looped" model is to route activations through a specific block of layers multiple times (represented as T iterations) rather than passing them through a long, linear stack of unique layers.

While theoretically sound, previous attempts to implement looped Transformers, most notably Recurrent Depth Models (RDMs), faced severe stability issues. These models often suffered from "residual state explosion," a phenomenon where the hidden state vector grows uncontrollably as it passes through successive loops. This instability led to frequent loss spikes during training, making the models notoriously difficult to train to convergence without meticulous and fragile hyperparameter tuning. Parcae was developed specifically to solve these stability issues by applying principles from classical control theory to the transformer architecture.

Architectural Framework: The Prelude, Recurrent Block, and Coda

The Parcae architecture departs from the standard "stack of blocks" design in favor of a tripartite functional structure. This "middle-looped" design ensures that the model remains compact while maximizing the utility of its parameters.

- The Prelude (P): The initial stage of the model responsible for embedding the input sequence. It transforms the raw input into a latent state, which serves as the foundation for the subsequent iterative processing.

- The Recurrent Block (R): This is the heart of the Parcae system. Instead of a fixed depth, activations are cycled through this block T times. Crucially, the initial latent state from the Prelude is re-injected at every iteration. This "input injection" ensures that the original context of the sequence remains influential and prevents the model from "forgetting" the input as the hidden state evolves through the loops.

- The Coda (C): After the Recurrent Block completes its designated iterations, the final hidden state is passed to the Coda. This block performs the final processing necessary to produce the model’s output, such as predicting the next token in a sequence.

By partitioning the model this way, Parcae maintains a small memory footprint—governed by the parameters in P, R, and C—while effectively behaving like a much deeper model during the forward pass.
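The three-stage flow described above can be sketched in a few lines of plain Python. This is a toy illustration only, not the Parcae implementation: the functions `prelude`, `recurrent_block`, `coda`, and `forward`, the constants inside them, and the zero initial state are all assumptions made for the example. What it shows is the control flow: the Prelude runs once, a single shared block is applied `num_loops` times with the Prelude output re-injected each time, and the Coda runs once at the end.

```python
import math

def prelude(tokens):
    # Hypothetical embedding: squash each token id into a small latent value.
    return [math.tanh(0.1 * t) for t in tokens]

def recurrent_block(h, e):
    # One pass through the single shared block. The Prelude output e is
    # re-injected on every call (input injection).
    return [0.5 * hi + 0.5 * math.tanh(hi + ei) for hi, ei in zip(h, e)]

def coda(h):
    # Hypothetical readout: a fixed scaling standing in for the output layers.
    return [2.0 * hi for hi in h]

def forward(tokens, num_loops):
    e = prelude(tokens)      # latent state, computed once
    h = [0.0] * len(e)       # assumed zero initial hidden state
    for _ in range(num_loops):
        h = recurrent_block(h, e)   # same weights reused on every iteration
    return coda(h)

shallow = forward([1, 2, 3], num_loops=1)
deep = forward([1, 2, 3], num_loops=8)
print(shallow)
print(deep)
```

Raising `num_loops` changes the output and the amount of compute, but not the number of parameters, which is the property the article attributes to the middle-looped design.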

Solving the Stability Crisis Through Control Theory

The primary innovation of the UCSD and Together AI team lies in their mathematical approach to training stability. They recast the forward pass of a looped model as a nonlinear time-variant dynamical system over the residual stream. By approximating that system with a discrete linear time-invariant (LTI) system, which is far easier to analyze, the researchers identified the "spectral norm" as the key to stability.

In control theory, a discrete-time linear system is stable if the spectral radius of its state matrix, conventionally written ρ(Ā), is less than one; for the diagonal matrices Parcae uses, this equals the spectral norm. The researchers discovered that prior models like RDMs failed because their architectures inherently allowed this quantity to reach or exceed the threshold. Specifically, the common practice of using addition-based input injection created a "marginally stable" system (ρ(Ā) = 1), while concatenation-based methods left the system entirely unconstrained, often leading to ρ(Ā) > 1 and subsequent training divergence.
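The three regimes are easy to see in a scalar toy recurrence, where the state matrix is a single number `a`. The sketch below is an illustration under that simplifying assumption, not the analysis from the research itself; the function name `final_state_magnitude` and the constant injected input are hypothetical.

```python
def final_state_magnitude(a, num_loops, injected=1.0):
    """Iterate h <- a * h + injected and return |h| after num_loops steps."""
    h = 0.0
    for _ in range(num_loops):
        h = a * h + injected
    return abs(h)

# a < 1: stable, a == 1: marginally stable, a > 1: unstable
for a in (0.9, 1.0, 1.1):
    print(a, final_state_magnitude(a, num_loops=100))
```

With `a = 0.9` the state settles just below 10, with `a = 1.0` it grows linearly with the number of loops, and with `a = 1.1` it grows exponentially and passes 100,000 within 100 iterations. The last two cases correspond to the residual state growth the article describes for earlier looped models.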

Parcae enforces stability by design through a process of discretization borrowed from modern State Space Models (SSMs) like Mamba. By using zero-order hold (ZOH) and Euler schemes with a learned step size, and by constraining the internal matrices to be negative diagonal, Parcae ensures that the spectral norm remains below one at all times. This "stability-by-construction" eliminates the loss spikes that plagued previous looped models, allowing for smooth training even at large scales.
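A minimal sketch of how such a constraint can be built in, assuming the Mamba-style convention of a zero-order hold for the state matrix and an Euler step for the input matrix. The article does not give Parcae's exact parameterization, so the names `raw_a` and `raw_step`, the use of `exp` and `softplus` to enforce the signs, and the unit input matrix are assumptions for illustration.

```python
import math

def softplus(x):
    # Smooth map from any real number to a strictly positive one.
    return math.log1p(math.exp(x))

def discretize(raw_a, raw_step):
    """Return (a_bar, b_bar) for each diagonal entry.

    raw_a and raw_step are unconstrained values, as an optimizer would
    produce; the constraints are applied inside this function.
    """
    a_bar, b_bar = [], []
    for ra, rs in zip(raw_a, raw_step):
        a = -math.exp(ra)        # continuous-time entry, always negative
        step = softplus(rs)      # learned step size, always positive
        a_bar.append(math.exp(step * a))  # zero-order hold: exp(step * a)
        b_bar.append(step)                # Euler step with input matrix B = 1
    return a_bar, b_bar

a_bar, b_bar = discretize(raw_a=[-3.0, 0.0, 2.5], raw_step=[-2.0, 0.0, 4.0])
print(a_bar)
print(b_bar)
```

Because `step * a` is always negative, every entry of `a_bar` lies between 0 and 1 regardless of what values the optimizer chooses for the raw parameters, so the discretized diagonal matrix cannot reach the unstable regime.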

Empirical Performance and Benchmark Results

The researchers subjected Parcae to rigorous testing against both prior looped models and standard fixed-depth Transformer baselines. The results indicate a significant leap in efficiency across various datasets, including Huginn and FineWeb-Edu.

In head-to-head comparisons with RDMs, Parcae reduced validation perplexity by up to 6.3% at the 350M parameter scale. On the WikiText benchmark, the improvement was even more pronounced, reaching a 9.1% gain. Beyond perplexity, the model showed tangible improvements in zero-shot downstream tasks, outperforming its predecessors by an average of 1.8 points.

When measured against standard Transformers, the current industry workhorse, Parcae’s efficiency becomes even more apparent. A 770M parameter Parcae model achieved a "Core" score of 25.07, nearly matching the 25.45 score of a 1.3B parameter standard Transformer, a model with roughly 1.7 times as many parameters. The research team summarized this by stating that Parcae achieves roughly 87.5% of the quality of a Transformer twice its size, while using half the memory for its weights.
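The figures quoted for the 770M and 1.3B models can be checked with simple arithmetic. The sketch below uses only the numbers in the article, plus one assumption that is not from the source: 2 bytes per parameter (16-bit weights) for the memory estimate. It does not attempt to reproduce the team's 87.5% figure, whose derivation the article does not give.

```python
parcae_params = 770e6
baseline_params = 1.3e9
parcae_core = 25.07
baseline_core = 25.45

param_ratio = baseline_params / parcae_params   # how much larger the baseline is
score_ratio = parcae_core / baseline_core       # fraction of the baseline score

BYTES_PER_PARAM = 2  # assumption: 16-bit weights
parcae_gb = parcae_params * BYTES_PER_PARAM / 1e9
baseline_gb = baseline_params * BYTES_PER_PARAM / 1e9

print(f"baseline has {param_ratio:.2f}x the parameters")
print(f"Parcae reaches {score_ratio:.1%} of the baseline Core score")
print(f"weights: {parcae_gb:.2f} GB vs {baseline_gb:.2f} GB")
```

Under that assumption the Parcae weights occupy about 1.54 GB against 2.6 GB for the baseline, while the Core score is within about 1.5% of it.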

Establishing the First Scaling Laws for Layer Looping

One of the most significant contributions of this research is the establishment of predictable scaling laws for looped architectures. Until now, scaling laws were largely confined to the relationship between parameters, data, and compute in conventional fixed-depth models. The Parcae team conducted isoFLOP experiments to determine how to optimally allocate compute in a looped environment.

Their findings suggest that as the training FLOP budget increases, the optimal number of loop iterations (mean recurrence) and the volume of training tokens should increase in tandem. They found that the optimal mean recurrence scales as C^0.40, while optimal tokens scale as C^0.78 (where C represents the total compute budget, not the Coda). This gives future researchers an empirical blueprint for sizing larger looped models, rather than relying on trial and error.
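To make the exponents concrete, the sketch below computes how much the optimal mean recurrence and token count would grow for a given multiple of the compute budget. Only the two exponents come from the article; the proportionality constants are not given there, so the function (hypothetically named `scale_factors`) returns relative growth factors rather than absolute values.

```python
RECURRENCE_EXPONENT = 0.40  # from the article: mean recurrence ~ C^0.40
TOKEN_EXPONENT = 0.78       # from the article: tokens ~ C^0.78

def scale_factors(compute_multiplier):
    """Return (recurrence growth, token growth) for a compute multiple."""
    return (compute_multiplier ** RECURRENCE_EXPONENT,
            compute_multiplier ** TOKEN_EXPONENT)

recurrence_growth, token_growth = scale_factors(10.0)
print(f"10x compute: {recurrence_growth:.2f}x loops, {token_growth:.2f}x tokens")
```

For a tenfold increase in compute, this gives roughly 2.5 times as many loop iterations and roughly 6 times as many training tokens.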

Furthermore, the team explored "test-time scaling"—the ability to increase the number of loops during inference to improve performance. They found that while increasing loops beyond the training depth provides some gains, these gains follow a "saturating exponential decay." This means that while you can squeeze extra performance out of Parcae at inference time by looping more, the training depth ultimately sets a "floor" for the model’s error rate.
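One common functional form with this behavior is a constant floor plus an exponentially shrinking gap. The sketch below is illustrative only: the function name `loss_at_loops` and the values of `floor`, `gap`, and `rate` are invented for the example and are not fitted results from the research.

```python
import math

def loss_at_loops(num_loops, floor=3.0, gap=0.5, rate=0.4):
    """Hypothetical saturating curve: loss approaches `floor` as loops grow."""
    return floor + gap * math.exp(-rate * num_loops)

for loops in (4, 8, 12, 16):
    print(loops, round(loss_at_loops(loops), 4))
```

Each additional block of loops buys less than the previous one, and no number of inference-time loops pushes the loss below the floor, which in the article's account is set by the training depth.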

Chronology of Development and Research Context

The development of Parcae comes at a pivotal moment in AI research. Following the 2022-2023 "arms race" for the largest possible models (exemplified by GPT-4 and Claude), 2024 and 2025 have seen a shift toward "small language models" (SLMs) and efficiency.

- Phase 1: The Chinchilla Era (2022): DeepMind establishes that many models are undertrained and that scaling data is as important as scaling parameters.

- Phase 2: The Efficiency Push (2023): Techniques like Quantization (bitsandbytes) and Low-Rank Adaptation (LoRA) become standard for making large models runnable on consumer hardware.

- Phase 3: Architectural Innovation (2024): Researchers begin looking beyond the standard Transformer block, leading to the rise of Mamba, Jamba, and now Parcae.

- Phase 4: Parcae Introduction (Present): UCSD and Together AI solve the stability issues of recurrent depth, providing a stable path for parameter-efficient scaling.

Industry Implications and Future Outlook

The introduction of Parcae has far-reaching implications for both AI researchers and hardware manufacturers. For companies like Together AI, which focuses on providing accessible, high-performance AI infrastructure, Parcae offers a way to deliver "large-model performance" at "small-model costs."

For the broader industry, Parcae signals a move toward models that are more "compute-dense." As inference becomes the dominant cost in the AI lifecycle, the ability to reuse weights through looping becomes an attractive alternative to maintaining massive, multi-hundred-billion parameter models. This is particularly relevant for mobile device manufacturers (Apple, Samsung, Google), who are currently struggling to fit powerful LLMs into the limited RAM of smartphones.

The success of Parcae also suggests that the future of neural architecture may lie in a hybrid approach—combining the attention mechanisms of Transformers with the stability and recurrence of dynamical systems. As researchers continue to refine these scaling laws, the industry may soon see a new generation of "elastic" models that can adjust their "depth" (and thus their accuracy and latency) on the fly based on the complexity of the user’s prompt.

In conclusion, Parcae stands as a robust proof-of-concept that memory footprint does not have to be a hard ceiling for model quality. By enforcing stability through rigorous mathematical constraints, the team from UCSD and Together AI has opened a new frontier in the quest for efficient, scalable artificial intelligence.