The rapid proliferation of generative artificial intelligence has highlighted a critical vulnerability in modern large language models (LLMs): the tendency to present incorrect information with absolute certainty. To address this phenomenon, often referred to as "hallucination," a new architectural framework has emerged that prioritizes uncertainty awareness, self-evaluation, and dynamic information retrieval. This approach moves beyond simple prompt-and-response mechanics, instead implementing a multi-stage reasoning pipeline designed to estimate confidence levels and bridge knowledge gaps through automated web research. By integrating these layers, developers are creating systems that not only provide answers but also offer a transparent assessment of their own reliability, fundamentally altering the interaction between human users and autonomous agents.

The Challenge of LLM Overconfidence and the Need for Calibration

As LLMs have become integrated into enterprise decision-making, the risks associated with factual errors have escalated. Results from AI safety and factuality benchmarks suggest that even the most advanced models can report high confidence while giving factually incorrect answers, particularly on niche topics or on events after their training cutoff. This "calibration" problem, in which a model's predicted probability of being correct does not match its actual accuracy, has become a primary hurdle for high-stakes applications in the legal, medical, and financial sectors.
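
Calibration is commonly quantified with expected calibration error (ECE). Predictions are grouped into confidence bins, and within each bin the average confidence is compared with the observed accuracy. The gaps are then averaged, weighted by bin size. The minimal sketch below uses equal-width bins. The bin count and the function name are illustrative choices, not part of the framework described here.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float],
    correct: Sequence[bool],
    n_bins: int = 10,
) -> float:
    """Size-weighted average |confidence - accuracy| over equal-width confidence bins."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    if not confidences:
        raise ValueError("need at least one prediction")
    bins: list[list[tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Clamp so that a confidence of exactly 1.0 lands in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / total) * abs(avg_conf - accuracy)
    return ece
```

Consider a hypothetical model that reports 0.95 confidence on four answers but gets only two of them right. Its ECE is about 0.45, which is exactly the overconfidence gap described above. A perfectly calibrated model scores 0.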

Traditional RAG (Retrieval-Augmented Generation) systems attempt to solve this by supplying the model with external documents. However, static RAG retrieves for every query and can inject noisy or irrelevant passages. The uncertainty-aware framework described here takes a more targeted approach: the model triggers an external search only when it judges its internal knowledge to be insufficient. This selective retrieval reduces computational cost and lowers the risk of the model being distracted by extraneous web data.
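
Static and selective retrieval differ mainly in a single gating decision. The sketch below is hypothetical and makes two assumptions. First, `retrieve` stands in for any search backend. Second, the threshold reuses the 0.55 value that this article gives for the research trigger. A simple counter makes the cost difference visible.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical retriever signature: query -> list of text snippets.
Retriever = Callable[[str], list[str]]


@dataclass
class RetrievalStats:
    calls: int = 0  # number of external retrieval calls made


def static_rag(question: str, retrieve: Retriever, stats: RetrievalStats) -> list[str]:
    """Static RAG: retrieve for every question, whatever the model already knows."""
    stats.calls += 1
    return retrieve(question)


def selective_rag(
    question: str,
    confidence: float,
    retrieve: Retriever,
    stats: RetrievalStats,
    threshold: float = 0.55,
) -> list[str]:
    """Selective retrieval: search only when self-reported confidence is below the threshold."""
    if confidence >= threshold:
        return []  # trust internal knowledge; inject no external context
    stats.calls += 1
    return retrieve(question)
```

Suppose there are three questions and only one has confidence below the threshold. Static RAG makes three retrieval calls, while the selective version makes one and leaves the other two prompts free of external text.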

A Chronology of Reasoning Evolution: From Completion to Agency

The transition toward uncertainty-aware systems represents the third major era in LLM development.

- The Completion Era (2020–2022): Early models like GPT-3 focused on probabilistic word prediction. These systems were highly prone to rambling and had no internal mechanism to verify facts.

- The Instruction and Reasoning Era (2023–2024): The widespread adoption of Reinforcement Learning from Human Feedback (RLHF) and "Chain of Thought" prompting allowed models to follow complex instructions and "think" through problems step by step.

- The Agentic and Uncertainty Era (2024–Present): Current developments focus on "agentic" workflows, where the model acts as its own supervisor. The framework discussed here utilizes this meta-cognitive ability to perform self-critiques and decide when to interact with external tools, such as search engines.

This evolution marks a shift from viewing the LLM as a static encyclopedia to viewing it as a reasoning engine capable of identifying its own boundaries.

Architectural Breakdown: The Three-Stage Pipeline

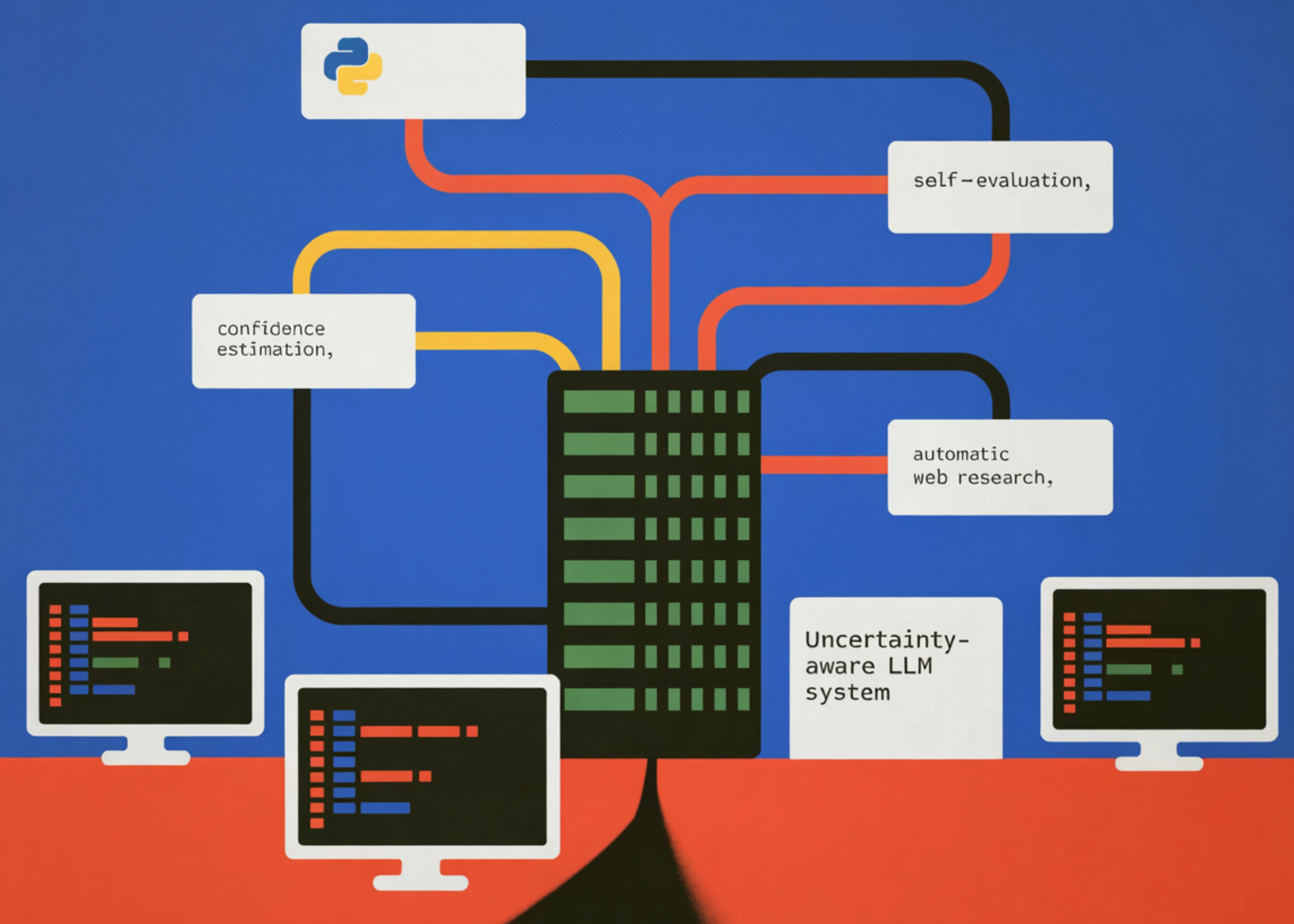

The uncertainty-aware system is built upon a modular Python-based architecture, utilizing the OpenAI API for reasoning and the DuckDuckGo Search (DDGS) library for real-time data acquisition. The pipeline is structured into three distinct phases: Initial Generation, Self-Evaluation, and Synthesis.
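
The control flow of the three phases can be sketched independently of any model or search provider. In the sketch below, each stage is passed in as a callable, so the orchestration can be exercised offline. In practice, the stages would wrap the OpenAI API and the DDGS search client. The class and function names are hypothetical, and the 0.55 threshold is the value the article gives for triggering research.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class StageOutput:
    answer: str
    confidence: float  # 0.0-1.0
    reasoning: str


@dataclass
class PipelineResult:
    initial: StageOutput
    reviewed: StageOutput
    final: StageOutput
    researched: bool
    sources: list[str] = field(default_factory=list)


def run_pipeline(
    question: str,
    generate: Callable[[str], StageOutput],
    critique: Callable[[str, StageOutput], StageOutput],
    research: Callable[[str, StageOutput], tuple[StageOutput, list[str]]],
    threshold: float = 0.55,
) -> PipelineResult:
    # Stage 1: calibrated generation (answer, confidence, reasoning).
    initial = generate(question)
    # Stage 2: the model reviews its own Stage 1 output.
    reviewed = critique(question, initial)
    # Stage 3: research and synthesis, only when the reviewed confidence is low.
    if reviewed.confidence < threshold:
        final, sources = research(question, reviewed)
        return PipelineResult(initial, reviewed, final, True, sources)
    return PipelineResult(initial, reviewed, reviewed, False)
```

Keeping all three stage outputs in the result preserves the audit trail. A caller can therefore see how the confidence score changed between the stages.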

Stage 1: Calibrated Generation

In the first stage, the system is constrained to respond exclusively in structured JSON. This requirement ensures that the model's metadata (its confidence score and reasoning) can be parsed and acted upon programmatically. The system prompt defines a strict confidence scale from 0.0 to 1.0: a score of 0.90–1.00 indicates a well-established fact, while anything below 0.55 is flagged as a "best guess" reflecting significant uncertainty.
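
The sketch below shows one way to express this scale in a system prompt and map a score back to a label. The prompt wording and the JSON field names (`answer`, `confidence`, `reasoning`) are assumptions. So is the middle band: the article specifies only the 0.90–1.00 band and the below-0.55 band.

```python
# Illustrative system prompt; wording and field names are assumptions.
SYSTEM_PROMPT = """You are a careful assistant. Respond ONLY with a JSON object:
{"answer": string, "confidence": number between 0.0 and 1.0, "reasoning": string}

Confidence scale:
- 0.90-1.00: well-established fact
- 0.55-0.89: likely correct, some uncertainty
- below 0.55: best guess / significant uncertainty
In "reasoning", name any knowledge gap (e.g. events after your training cutoff)."""


def confidence_band(score: float) -> str:
    """Map a 0.0-1.0 confidence score to the bands described in SYSTEM_PROMPT."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"confidence must be in [0.0, 1.0], got {score}")
    if score >= 0.90:
        return "well-established"
    if score >= 0.55:
        return "likely"  # hypothetical middle band
    return "best guess"
```

With the OpenAI Chat Completions API, a prompt like this is typically combined with `response_format={"type": "json_object"}` so that the reply is valid JSON. Even so, the reply should still be validated before use.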

By requiring the model to state its reasoning and assign a numerical value to its certainty, developers can implement programmatic "guardrails," such as rejecting malformed responses or routing low-confidence answers to further checks. If the model identifies a knowledge gap (e.g., a recent event or a niche technical detail), it records this in the reasoning field of the JSON object, leaving a breadcrumb trail for the later stages of the pipeline.
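
A basic guardrail rejects any response that breaks the JSON contract before it can reach the later stages. The minimal sketch below applies the following checks:

- the response is valid JSON;
- the required fields are present;
- `confidence` is a real number in [0.0, 1.0].

The field names and the `GuardrailError` type are hypothetical.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOutput:
    answer: str
    confidence: float
    reasoning: str  # where the model records knowledge gaps


class GuardrailError(ValueError):
    """Raised when the model's JSON does not satisfy the expected contract."""


def parse_model_output(raw: str) -> ModelOutput:
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise GuardrailError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise GuardrailError("top-level JSON value must be an object")
    missing = {"answer", "confidence", "reasoning"} - set(data)
    if missing:
        raise GuardrailError(f"missing fields: {sorted(missing)}")
    conf = data["confidence"]
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(conf, bool) or not isinstance(conf, (int, float)):
        raise GuardrailError("confidence must be a number")
    if not 0.0 <= conf <= 1.0:
        raise GuardrailError(f"confidence out of range: {conf}")
    if not isinstance(data["answer"], str) or not isinstance(data["reasoning"], str):
        raise GuardrailError("answer and reasoning must be strings")
    return ModelOutput(data["answer"], float(conf), data["reasoning"])
```

When parsing fails, a caller might retry the request or treat the answer as zero-confidence. Both options are design choices, not behavior described in the article.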

Stage 2: Meta-Cognitive Self-Evaluation

The second stage introduces a "self-critic" module. The model is presented with its own initial answer and reasoning and is tasked with finding logical inconsistencies or potential factual errors. This simulates a human-like "double-check" process. During this phase, the model may revise its confidence score downward if it detects a flaw in its initial logic.
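
The self-critique step amounts to one more model call whose input is the Stage 1 draft. The sketch below builds the chat messages for that call and merges the reviewer's verdict. Several details are assumptions: the prompt wording, the `issues` and `revised_confidence` fields, and the rule that the merged confidence is the minimum of the old and new scores. The article says only that the model "may revise its confidence score downward".

```python
import json


def build_critique_messages(
    question: str, answer: str, confidence: float, reasoning: str
) -> list[dict[str, str]]:
    """Chat messages asking the model to review its own draft (wording is illustrative)."""
    draft = json.dumps({"answer": answer, "confidence": confidence, "reasoning": reasoning})
    return [
        {
            "role": "system",
            "content": (
                "You are a strict reviewer. Look for logical inconsistencies or likely "
                "factual errors in the draft. Respond ONLY with JSON: "
                '{"issues": [string], "revised_confidence": number between 0.0 and 1.0}'
            ),
        },
        {"role": "user", "content": f"Question: {question}\nDraft: {draft}"},
    ]


def merge_critique(original_confidence: float, critique_json: str) -> tuple[float, list[str]]:
    """Apply the reviewer's verdict; confidence may stay the same or decrease, never rise."""
    data = json.loads(critique_json)
    revised = float(data.get("revised_confidence", original_confidence))
    revised = max(0.0, min(revised, 1.0))  # clamp to the valid range
    issues = [str(item) for item in data.get("issues", [])]
    return min(original_confidence, revised), issues
```

Taking the minimum means the review can only tighten confidence, never inflate it. Under that rule, a critic that hallucinates extra certainty cannot push an answer past the research threshold.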

A low "temperature" setting (e.g., 0.1) is recommended for this stage. Lowering the temperature concentrates the sampling distribution on the most likely tokens, making output more deterministic and repeatable, which suits a critical review. This stage serves as a filter, preventing the system from proceeding with a high confidence score when the underlying logic is shaky.
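
Temperature works by dividing the model's logits before the softmax. The small sketch below uses made-up logits for three candidate tokens. It shows that a low temperature pushes almost all of the probability onto the top token, which is why sampling becomes close to deterministic.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Token probabilities after dividing logits by the temperature (must be > 0)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for three candidate next tokens.
logits = [2.0, 1.0, 0.5]
for t in (1.0, 0.1):
    probs = softmax_with_temperature(logits, t)
    print(f"T={t}: " + ", ".join(f"{p:.3f}" for p in probs))
```

At T=1.0 the top token gets roughly 63% of the probability mass, while at T=0.1 it gets more than 99.9%. In the OpenAI Python SDK, this setting is passed as the `temperature` argument to `client.chat.completions.create`.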

Stage 3: Automated Research and Synthesis

The final stage is conditionally triggered. If the revised confidence score falls below a predefined threshold (0.55 by default, the same boundary the confidence scale uses for a "best guess"), the system automatically initiates a web research phase. Using search snippets retrieved from live sources, it then performs a final synthesis.
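
The trigger and the search call can be sketched as follows. The sketch assumes the `duckduckgo_search` package, whose `DDGS().text(query, max_results=...)` returns result dictionaries with `title`, `href` and `body` keys. The call signature and keys are worth checking against the installed version. To keep the gate testable offline, the search function is injectable and the package is imported only when a live search is needed. `maybe_research` is a hypothetical name.

```python
from typing import Callable, Optional

Snippet = dict[str, str]  # e.g. {"title": ..., "href": ..., "body": ...}


def ddgs_search(query: str, max_results: int = 5) -> list[Snippet]:
    """Live web search via the duckduckgo_search package (requires network access)."""
    from duckduckgo_search import DDGS  # lazy import: offline callers don't need the package

    with DDGS() as ddgs:
        return list(ddgs.text(query, max_results=max_results))


def maybe_research(
    question: str,
    confidence: float,
    threshold: float = 0.55,
    search: Optional[Callable[[str], list[Snippet]]] = None,
) -> list[Snippet]:
    """Return web snippets only when the revised confidence falls below the threshold."""
    if confidence >= threshold:
        return []  # confident enough: skip the web entirely
    search = search or ddgs_search
    return search(question)
```

Calling `maybe_research("speed of light", 0.97)` never touches the network. A low-confidence question such as one about 2025 population figures goes to the search backend.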

In this phase, the model acts as a research assistant, weighing the evidence found online against its preliminary answer. The final output is a revised, evidence-grounded response that includes a list of sources. For topics where the model's training data is outdated, such as current population statistics or the latest software releases, this lets the answer reflect current information from the web rather than the model's training snapshot.
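
The synthesis step needs the evidence in a form the model can cite and the user can check. The sketch below numbers each search snippet, places the snippets next to the preliminary answer, and returns the deduplicated source URLs for display. The prompt wording, the output schema, and the function name are hypothetical. The snippet keys follow the `title`/`href`/`body` shape of DDGS text results.

```python
def build_synthesis_prompt(
    question: str, draft_answer: str, snippets: list[dict[str, str]]
) -> tuple[str, list[str]]:
    """Build a synthesis prompt with numbered evidence; also return unique source URLs."""
    lines: list[str] = []
    sources: list[str] = []
    for i, snip in enumerate(snippets, start=1):
        url = snip.get("href", "")
        lines.append(f"[{i}] {snip.get('title', '')}: {snip.get('body', '')} ({url})")
        if url and url not in sources:
            sources.append(url)
    evidence = "\n".join(lines) if lines else "(no search results)"
    prompt = (
        f"Question: {question}\n"
        f"Preliminary answer: {draft_answer}\n\n"
        f"Web evidence:\n{evidence}\n\n"
        "Weigh the evidence against the preliminary answer. Cite evidence as [n]. "
        'Respond ONLY with JSON: {"answer": string, "confidence": number, "sources": [string]}'
    )
    return prompt, sources
```

Returning the source list separately means it can be shown to the user exactly as retrieved. The user does not have to rely on the model to copy the URLs correctly.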

Supporting Data: The Impact of Self-Correction on Accuracy

Empirical studies of LLM self-correction report mixed results, but the gains are reported to be larger when self-correction is combined with external search. Some benchmarks of "Verify-and-Edit"-style frameworks report a 15% to 25% improvement in factual accuracy on "long-tail" knowledge questions compared with standard zero-shot prompting.

Furthermore, a confidence threshold can reduce the "false-positive" rate in information delivery, that is, the share of incorrect answers presented as if they were reliable. By explicitly labeling answers as "Low Confidence," the system manages user expectations and prompts human verification where it is most needed. In a batch run of demo questions, ranging from the speed of light (high confidence) to 2025 population statistics (initially low confidence), the system distinguishes between stable, well-established facts and dynamic, time-sensitive data.
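
One way to make the "false-positive" rate concrete in an offline evaluation is to count the answers that were wrong but shown without a "Low Confidence" label. The records in the test below are hypothetical, not results from the demo run. The label text and the 0.55 default follow the article, while the function names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Graded:
    question: str
    confidence: float
    correct: bool  # ground truth, known only in an offline evaluation


def label(confidence: float, threshold: float = 0.55) -> str:
    return "Low Confidence" if confidence < threshold else "Confident"


def unflagged_error_rate(records: list[Graded], threshold: float = 0.55) -> float:
    """Fraction of all answers that were wrong yet shown without a Low Confidence label."""
    if not records:
        return 0.0
    silent_errors = sum(
        1
        for r in records
        if not r.correct and label(r.confidence, threshold) != "Low Confidence"
    )
    return silent_errors / len(records)
```

Raising the threshold flags more answers and lowers this rate, but it also sends more correct answers to human review. The threshold therefore trades user effort against silent errors.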

Industry Implications and Expert Perspectives

The shift toward uncertainty-aware AI is being closely watched by industry leaders. Analysts suggest that this framework is a precursor to more autonomous "AI Agents" that will eventually handle complex workflows in corporate environments.

"The ability for a model to say ‘I don’t know, let me check’ is more valuable to an enterprise than a model that is right 90% of the time but lies the other 10%," notes one industry report on AI transparency. This sentiment reflects a broader move in the tech sector toward "Explainable AI" (XAI), where the internal logic of a machine learning model is made accessible and understandable to human operators.

The use of JSON as a primary communication format between these stages also points to a future where AI components are highly interoperable. By standardizing how uncertainty is communicated, different AI models (e.g., a specialized medical LLM and a general-purpose reasoning model) could theoretically collaborate on a single query, passing confidence scores and critiques back and forth to reach an optimal conclusion.
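
A shared message format is what would make this kind of hand-off possible. The sketch below shows a hypothetical interchange record that carries an answer, its confidence, the reasoning, accumulated critiques and sources, and round-trips through JSON. The field names and the `version` tag are illustrative assumptions, not an existing standard.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class UncertaintyMessage:
    """Hypothetical interchange format for passing answers between model components."""

    sender: str
    answer: str
    confidence: float  # 0.0-1.0, same scale as the rest of the pipeline
    reasoning: str
    critiques: list[str] = field(default_factory=list)
    sources: list[str] = field(default_factory=list)
    version: str = "0.1"

    def to_json(self) -> str:
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, raw: str) -> "UncertaintyMessage":
        msg = cls(**json.loads(raw))  # unknown fields raise TypeError
        if not 0.0 <= msg.confidence <= 1.0:
            raise ValueError("confidence must be in [0.0, 1.0]")
        return msg
```

For example, a specialized model could emit a message, and a general-purpose reviewer could append to `critiques` and lower `confidence` before passing the message back.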

Broader Impact on AI Transparency and Decision Support

The implications of this technology extend beyond software development into the realms of ethics and digital literacy. As AI-generated content continues to saturate the internet, the ability for users to see a "confidence meter" alongside an answer provides a necessary tool for critical thinking.

For decision-support systems—such as those used by engineers or policy analysts—the reasoning field provided by the LLM offers a qualitative look into the model’s "thought process." If the model cites a specific knowledge gap, the human user can provide the missing information, creating a "human-in-the-loop" workflow that is far more efficient than traditional trial-and-error prompting.

Moreover, integrating automated research through tools like DuckDuckGo helps AI systems stay current in a rapidly changing world. By acknowledging their knowledge cutoff and actively seeking new data, these systems mitigate the "stale data" problem that has plagued LLMs since their inception.

Conclusion and Future Outlook

The development of uncertainty-aware LLM systems represents a significant milestone in the quest for reliable artificial intelligence. By combining calibrated confidence estimation, rigorous self-evaluation, and dynamic web research, the framework provides a practical blueprint for building systems that are both intelligent and humble.

As AI models continue to grow in complexity, the focus will likely shift from increasing parameters to refining these agentic workflows. Future iterations may include more sophisticated search strategies, such as multi-step "deep research" where the model navigates through multiple web pages to find a specific data point. For now, the implementation of a three-stage reasoning pipeline offers a robust solution for developers seeking to build trustworthy, transparent, and adaptive AI applications. This approach does not just aim for better answers; it aims for a better understanding of the limits of machine knowledge, ensuring that AI serves as a reliable partner in human decision-making.