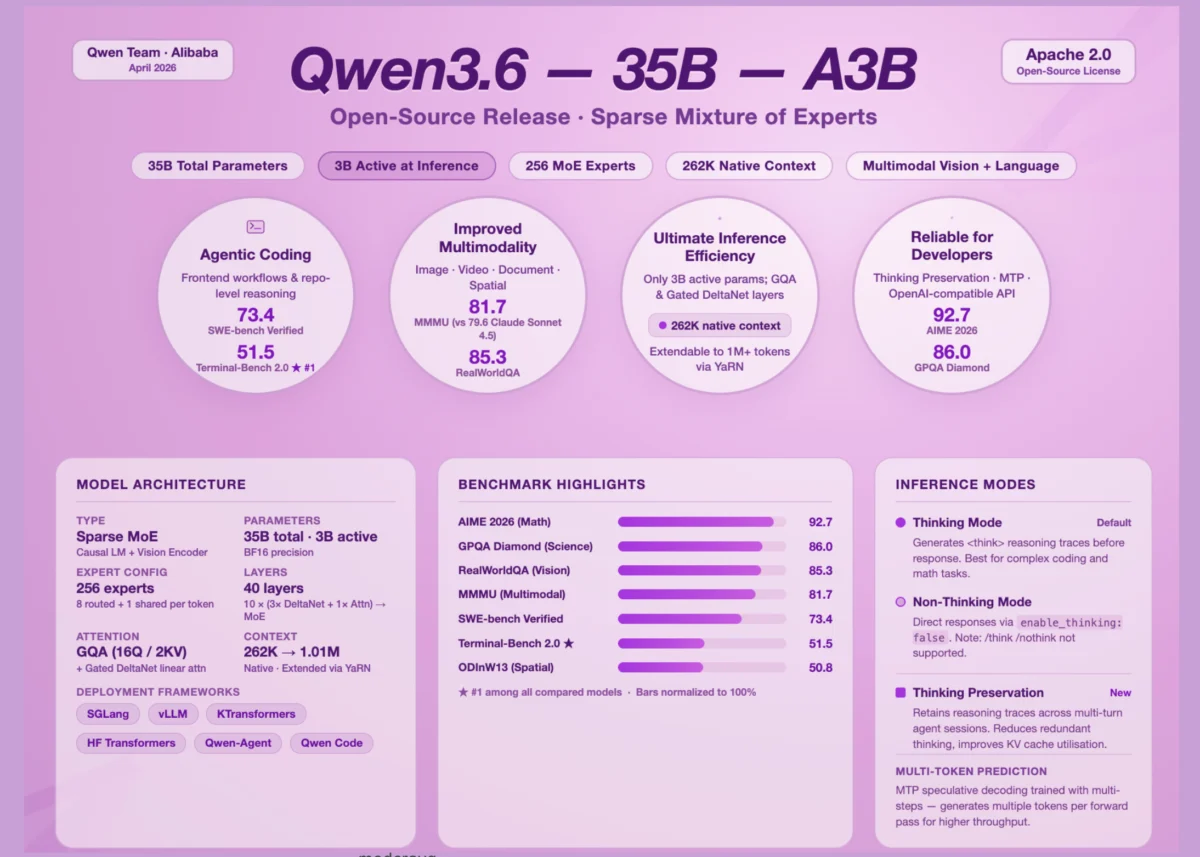

The open-source artificial intelligence ecosystem has reached a significant milestone with the release of Qwen3.6-35B-A3B, the first open-weight model from the Qwen3.6 generation developed by Alibaba’s Qwen team. This release signals a strategic shift in the industry toward parameter efficiency, demonstrating that a model’s total size is becoming a less critical metric than its active computational footprint during inference. By utilizing a Sparse Mixture of Experts (MoE) architecture, the model maintains a massive 35-billion-parameter knowledge base while activating only 3 billion parameters for any given task. This configuration allows the model to deliver performance in agentic coding and multimodal reasoning that rivals dense models ten times its active size, fundamentally altering the cost-to-performance ratio for developers and enterprises.

The Evolution of the Qwen Series: A Chronological Context

The arrival of Qwen3.6-35B-A3B follows an aggressive development cycle by Alibaba Cloud, which has consistently pushed the boundaries of the open-source community. The Qwen series first gained global attention with the release of Qwen1.5 and Qwen2.0, which established Alibaba as a serious competitor to Meta’s Llama and Mistral AI’s offerings. By late 2024, the release of Qwen2.5 introduced significant improvements in coding and mathematics.

In early 2025, the team accelerated its iteration speed with the Qwen3.5 series, which integrated more sophisticated reasoning capabilities. The jump to the 3.6 generation, represented by this latest 35B-A3B variant, focuses on refining the Mixture of Experts (MoE) framework and enhancing "agentic" behaviors—tasks where the AI must operate independently within a digital environment, such as a terminal or a codebase, to solve complex, multi-step problems. This release reflects a broader industry trend where the focus is shifting from general-purpose chat to specialized, high-efficiency agents capable of autonomous technical work.

Technical Architecture: Engineering Efficiency through Sparse MoE

At the core of Qwen3.6-35B-A3B is a Causal Language Model integrated with a Vision Encoder, designed to handle both textual and visual data natively. The architecture is a Sparse MoE model, a design choice that addresses the "memory-wall" and "compute-ceiling" challenges currently facing AI deployment. In a traditional dense model, every parameter is activated for every token generated. In contrast, an MoE model routes input tokens through specific "experts"—specialized sub-networks—leaving the majority of the model’s parameters idle.

The Qwen3.6-35B-A3B configuration features 256 experts in total. For each token, the system activates one shared expert and eight routed experts. This ensures that while the model has the breadth of a 35-billion-parameter system, the inference cost and latency remain proportional to a 3-billion-parameter model. This makes it feasible to run high-performance AI on consumer-grade hardware or reduce operational costs in cloud environments by an order of magnitude.

Furthermore, the model introduces a sophisticated hidden layout. It utilizes 40 layers divided into blocks of 10. Each block follows a specific sequence: three instances of Gated DeltaNet paired with MoE, followed by one instance of Gated Attention paired with MoE. The Gated DeltaNet sublayers implement linear attention, which is computationally more efficient than standard self-attention mechanisms. For more complex dependencies, the Gated Attention sublayers employ Grouped Query Attention (GQA). By using 16 attention heads for queries and only 2 for Key-Value (KV) pairs, the model significantly reduces KV-cache memory pressure, allowing for longer context windows without a proportional increase in VRAM requirements.

The model’s native context length is 262,144 tokens. However, leveraging YaRN (Yet another RoPE extensioN) scaling, this can be extended to over 1,000,000 tokens. This capability is crucial for "agentic" tasks, where the model must ingest entire repositories of code or long technical documents to provide accurate solutions.

Benchmark Analysis: Dominating Agentic Coding and STEM

The performance of Qwen3.6-35B-A3B in specialized benchmarks highlights its superiority over significantly larger dense models. In the realm of "agentic coding"—the ability of a model to act as an autonomous software engineer—the model has set new records for its size class. On SWE-bench Verified, a rigorous benchmark that requires models to resolve real-world GitHub issues, Qwen3.6-35B-A3B achieved a score of 73.4. This outperforms its predecessor, Qwen3.5-35B-A3B (70.0), and dwarfs the performance of Google’s Gemma4-31B (52.0).

The model’s proficiency in live environments is further evidenced by its performance on Terminal-Bench 2.0. This benchmark evaluates an AI’s ability to complete tasks within a functional terminal under a three-hour time limit. Qwen3.6-35B-A3B scored 51.5, the highest among all tested models, including larger variants like Qwen3.5-27B (41.6) and Gemma4-31B (42.9).

In frontend development, the improvements are even more pronounced. On QwenWebBench—an internal benchmark covering web design, applications, SVG generation, and 3D animations—the model scored 1397. This represents a massive leap over the Qwen3.5-35B-A3B score of 978, suggesting that the 3.6 generation has gained a much deeper understanding of visual and structural code relationships.

The model’s reasoning capabilities extend into graduate-level science and advanced mathematics. It recorded a 92.7 on AIME 2026 (American Invitational Mathematics Examination) and an 86.0 on GPQA Diamond. These scores are competitive with "frontier" models like GPT-4o and Claude 3.5 Sonnet, despite the Qwen model having a fraction of the active parameters.

Multimodal Capabilities: Bridging Vision and Reasoning

Qwen3.6-35B-A3B is not limited to text and code; its vision encoder allows it to process images, videos, and spatial data with high precision. In the MMMU (Massive Multi-discipline Multimodal Understanding) benchmark, which tests university-level reasoning across various visual disciplines, the model scored 81.7. This result is particularly notable as it surpasses Claude-Sonnet-4.5 (79.6) and Gemma4-31B (80.4).

In practical, real-world visual understanding, the model achieved an 85.3 on RealWorldQA, outperforming several closed-source competitors. Its spatial intelligence was tested via the ODInW13 object detection benchmark, where it scored 50.8, a significant improvement over the 42.6 scored by the previous generation. Video understanding also saw gains, with a score of 83.7 on VideoMMMU, confirming the model’s utility in analyzing dynamic visual data for security, media, and research applications.

Behavioral Innovations: Thinking Modes and Context Preservation

A defining feature of the Qwen3.6 generation is the introduction of explicit "Thinking Modes." Following the industry trend toward Chain-of-Thought (CoT) reasoning, the model is designed to generate internal reasoning traces before providing a final answer. By default, the model operates in this thinking mode, enclosing its logic within <think> tags. This process allows the model to "self-correct" and explore multiple paths to a solution before committing to an output.

For developers who require lower latency or direct responses, Alibaba has provided an API-level toggle. By setting enable_thinking: False in the chat template, users can bypass the reasoning phase. However, the Qwen team has noted a departure from previous versions: the "soft switches" such as /think or /nothink prompt commands used in Qwen3 are no longer officially supported in 3.6. Control has been moved entirely to the API level to ensure more robust system behavior.

Perhaps the most innovative addition is "Thinking Preservation." In standard LLM interactions, the reasoning steps for previous messages are often discarded to save space. Qwen3.6-35B-A3B can be configured to retain and leverage these historical "thinking traces." By enabling preserve_thinking, the model maintains a continuous chain of logic across a multi-turn conversation. This is particularly beneficial for agentic scenarios where an AI must remember why it made a specific decision five steps ago to ensure consistency in its current action. This feature also improves KV-cache utilization, making long-term reasoning more efficient.

Industry Implications and Global Response

The release of Qwen3.6-35B-A3B has drawn praise from the open-source community, particularly for Alibaba’s commitment to "open-weight" releases. Unlike "open-source" in the traditional software sense, "open-weight" means the trained parameters are available for download and local hosting, though the full training dataset and pipeline may remain proprietary.

Industry analysts suggest that this model poses a direct challenge to Western AI dominance. By providing a model that is highly efficient yet performs at the level of the world’s most expensive closed-source systems, Alibaba is empowering a new wave of localized AI development. Startups and researchers can now deploy "agent-grade" AI on local servers without the privacy concerns or costs associated with US-based API providers.

The reaction from the developer community has been focused on the model’s coding prowess. Early testers on platforms like Hugging Face have noted that the model’s ability to handle complex Python environments and terminal commands makes it a prime candidate for integration into IDE extensions and autonomous DevOps tools.

Broader Impact: The Shift Toward Agentic AI

The launch of Qwen3.6-35B-A3B marks a transition point in AI development. We are moving away from "chatbots" and toward "agents." The distinction lies in the model’s ability to interact with tools, reason through failures, and operate within the constraints of a real-world environment. With its high scores on Terminal-Bench and SWE-bench, Qwen3.6 is positioning itself as the engine for the next generation of autonomous software tools.

Furthermore, the success of the 3B-active parameter configuration validates the Sparse MoE approach as the most viable path forward for scaling AI. It suggests that future models may have trillions of total parameters but will only use a tiny fraction for any specific request, allowing for "omni-capable" models that remain fast and affordable.

As Alibaba Cloud continues to release the remaining models in the Qwen3.6 lineup, the industry will be watching closely to see if this level of efficiency can be maintained at even larger scales. For now, Qwen3.6-35B-A3B stands as a testament to the power of architectural innovation over brute-force scaling, offering a high-performance, multimodal, and agentic tool to the global AI community.