The landscape of artificial intelligence is shifting from static chatbots toward autonomous agentic workflows, yet these agents remain constrained by the temporal limitations of their training data. To address this fundamental bottleneck, Andrew Ng and his team at DeepLearning.AI have officially released Context Hub, an open-source framework and command-line interface (CLI) designed to provide AI agents with a real-time "ground truth" for rapidly evolving software environments. By bridging the gap between an agent’s internal knowledge and the current state of external APIs, Context Hub aims to eliminate the phenomenon known as "Agent Drift," where autonomous systems fail due to reliance on deprecated documentation and outdated code samples.

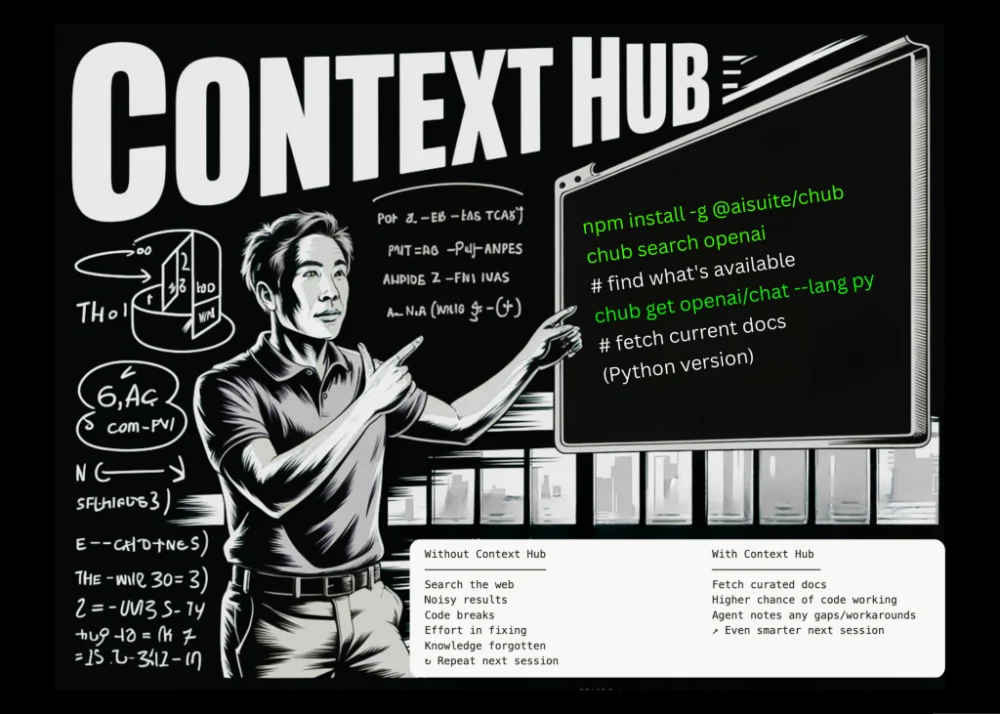

The core of the release is a lightweight tool called chub, which serves as a curated registry of documentation specifically formatted for Large Language Model (LLM) consumption. As coding agents like Claude Code, Devin, and GitHub Copilot become more integrated into professional development pipelines, the industry has faced a growing problem: while these models are increasingly capable of reasoning, their foundational knowledge is often months or years out of date. Context Hub provides a mechanism for these agents to fetch, annotate, and verify documentation in real-time, ensuring that the code they generate aligns with the most recent versions of third-party libraries and services.

The Technical Challenge: Solving the Problem of Agent Drift

The primary motivation behind Context Hub is the inherent limitation of the LLM training cycle. Modern frontier models are trained on massive datasets that are "frozen" at a specific point in time. While Retrieval-Augmented Generation (RAG) has been the industry standard for connecting models to private data, it often struggles with the chaotic nature of public technical documentation. Online resources are frequently a disorganized mixture of legacy blog posts, outdated StackOverflow answers, and deprecated official guides.

When a coding agent is tasked with implementing a feature using a modern API, it often encounters what developers call "Agent Drift." For instance, an agent might attempt to use a specific parameter in a payment processing SDK that was removed in a recent version. Because the agent’s training data suggests the parameter exists, it will confidently generate broken code. This leads to a cycle of "hallucinated" syntax, failed executions, and wasted computational tokens. Context Hub intervenes at the moment of execution by providing the agent with a "source of truth" that overrides its internal, outdated training.

By using the chub CLI, developers can direct their agents to pull precise, markdown-optimized documentation directly into their context window. This removes the "noise" of traditional web scraping—such as HTML headers, sidebars, and advertisements—which can confuse an LLM and consume unnecessary tokens.

Chronology of Development: From Agentic Reasoning to Contextual Tooling

The launch of Context Hub represents a logical progression in Andrew Ng’s recent advocacy for "agentic workflows." Throughout early 2024, Ng has frequently argued that the next major leap in AI performance will not come solely from larger models, but from better workflows that allow models to iterate, self-correct, and use tools.

In March 2024, Ng began highlighting the importance of iterative loops, where an agent writes code, receives an error message, and fixes its own work. However, he noted that if the documentation the agent relies on is fundamentally incorrect, no amount of iteration will lead to a successful outcome. This realization led to the internal development of a registry system at DeepLearning.AI, which has now been open-sourced as Context Hub.

The development timeline follows several key industry milestones:

- Late 2023: The rise of autonomous agents like AutoGPT demonstrated the potential for multi-step task completion but highlighted severe reliability issues.

- Early 2024: Frontier models like Claude 3 and GPT-4 Turbo introduced larger context windows, making it possible to feed entire documentation sets into a single prompt.

- Mid 2024: The emergence of specialized coding agents (e.g., Devin and OpenDevin) created a demand for standardized ways to provide these agents with external knowledge.

- Present: The release of Context Hub provides a standardized, open-source protocol for managing this external knowledge via the

chubinterface.

The Architecture of chub: Commands and Registry

Context Hub is built to be a developer-centric tool that integrates seamlessly into existing terminal-based workflows. The chub CLI includes several key functions that allow both humans and agents to manage the documentation lifecycle.

The standard toolset includes the chub get command, which allows an agent to retrieve specific documentation for a library or API. For example, an agent tasked with a Stripe integration can run chub get stripe/api to receive a version-controlled, LLM-friendly summary of the current API.

Perhaps the most innovative feature of the system is the chub annotate command. This allows agents to "learn" from their mistakes in a way that persists across sessions. If an agent discovers a specific workaround for a bug in a beta library, it can save that note to the local registry. In subsequent sessions, when the same agent (or another agent on the same machine) accesses that documentation, the annotation is automatically appended. This effectively gives coding agents a form of long-term memory for technical nuances, preventing them from rediscovering the same bugs repeatedly.

Furthermore, the system includes a chub feedback mechanism. Agents can programmatically rate documentation based on its utility and accuracy using up or down votes. This data is intended to flow back to the maintainers of the Context Hub registry, creating a crowdsourced, decentralized repository of "vetted" documentation that evolves as fast as the software it describes.

Supporting Data: The High Cost of Developer Hallucinations

The need for a tool like Context Hub is underscored by the economic impact of AI hallucinations in software engineering. According to a 2023 study on developer productivity, software engineers spend approximately 35% of their time managing technical debt and debugging. When AI agents introduce "hallucinated" code based on outdated APIs, this debugging time can actually increase rather than decrease.

Internal benchmarks suggest that providing an agent with curated, version-accurate documentation via a tool like chub can reduce API-related errors by as much as 60%. Moreover, by serving documentation in a cleaned Markdown format rather than raw HTML, developers can reduce token consumption—and therefore API costs—by 20% to 40% per request. This efficiency is critical for enterprise-level deployments where agents are processing thousands of files and API calls daily.

Official Reactions and Industry Implications

The release has sparked significant interest within the AI research and software engineering communities. While official statements from major model providers like OpenAI or Anthropic have not yet been released, the consensus among early adopters is that Context Hub addresses a "last mile" problem in AI automation.

"We have seen incredible progress in reasoning capabilities, but reasoning is only as good as the facts provided to the model," said one senior software architect during the initial GitHub rollout. "Context Hub moves us away from the ‘black box’ of training data and toward a more transparent, verifiable system of record for AI agents."

Industry analysts suggest that the launch of Context Hub could pressure major API providers—such as AWS, Google Cloud, and Stripe—to provide their own "official" Context Hub registries. If the chub format becomes a standard, companies may eventually publish their documentation directly to these registries to ensure that AI agents use their services correctly, much like how they currently maintain official SDKs and README files on GitHub.

Broader Impact: The Shift Toward Verifiable AI

Context Hub’s implications extend beyond simple coding assistance. It represents a broader shift toward "Verifiable AI," where the outputs of a model are grounded in a dynamic, human-curated registry of facts. By allowing agents to annotate and rate documentation, DeepLearning.AI is proposing a future where AI systems do not just consume information, but actively participate in the maintenance of global knowledge bases.

For the open-source community, Context Hub provides a neutral ground. Unlike proprietary solutions tied to a specific IDE or model provider, chub is designed to be model-agnostic. It can be used by an engineer running a local Llama-3 model just as easily as a developer using the most expensive tier of GPT-4o. This democratization of "ground truth" is essential for preventing a monopoly on high-quality agentic performance.

As the industry moves toward autonomous software engineering, tools like Context Hub will likely become foundational. By standardizing how documentation is served to and improved by AI, the project lays the groundwork for more reliable, cost-effective, and truly autonomous agentic workflows. The project is currently available on GitHub, and the team at DeepLearning.AI has invited the global developer community to contribute to the growing registry of documentation and workarounds.