The Google DeepMind research team has officially announced the release of Gemini Robotics-ER 1.6, a sophisticated upgrade to its embodied reasoning model designed to function as the cognitive foundation for autonomous robots. This latest iteration represents a significant leap in the field of Physical AI, focusing on the high-level "brain" functions required for robots to navigate, interpret, and interact with complex real-world environments. Unlike previous models that focused primarily on movement, Gemini Robotics-ER 1.6 specializes in spatial logic, long-horizon task planning, and success detection, enabling a new level of autonomy in robotic systems.

By serving as a high-level reasoning agent, the model can natively call upon a suite of tools, including Google Search, vision-language-action (VLA) models, and various third-party functions, to execute multi-stage tasks. This development marks a transition from robots that simply follow pre-programmed paths to robots that can "think" through a problem and adjust their behavior based on visual and logical feedback.

The Dual-Model Framework: Strategist vs. Executor

To understand the impact of Gemini Robotics-ER 1.6, it is essential to examine Google DeepMind’s dual-model architecture for robotics. The company employs a bifurcated system where two distinct models work in tandem to manage physical tasks.

Gemini Robotics 1.5 serves as the Vision-Language-Action (VLA) model. This is the "executor" of the system, responsible for processing immediate visual inputs and user prompts to generate direct physical motor commands. It handles the low-level mechanics of movement, such as how much torque to apply to a robotic arm or how to adjust a grip in real-time.

In contrast, Gemini Robotics-ER 1.6 acts as the "embodied reasoning" model, or the "strategist." It does not directly control the robot’s limbs; instead, it analyzes the environment, understands the physical layout, plans the sequence of necessary actions, and provides high-level insights to the VLA model. For example, if a user asks a robot to "clean the kitchen," the ER 1.6 model identifies which objects are out of place, determines the order in which they should be moved, and monitors whether each sub-task was completed successfully. This division of labor allows for more complex reasoning without overloading the low-level control systems.

Evolution and Chronology of Google’s Robotics AI

The release of Gemini Robotics-ER 1.6 is the latest milestone in a rapidly accelerating timeline of AI development at Google DeepMind. The journey toward embodied reasoning began with early experiments in Robotics Transformers (RT-1 and RT-2), which sought to apply large language model (LLM) logic to physical actions.

In early 2024, the introduction of Gemini 1.5 Pro and Flash provided the foundational multimodal capabilities necessary for robots to "see" and "read" their surroundings. By mid-2024, Gemini Robotics-ER 1.5 was deployed, establishing the initial framework for the strategist-executor relationship. However, ER 1.5 faced limitations in fine-grained spatial reasoning and lacked the ability to interpret complex industrial instruments.

The transition to version 1.6, announced in April 2026, incorporates "agentic vision"—a capability that combines visual reasoning with iterative code execution. This allows the model to not only look at an image but to "zoom in," calculate proportions, and run internal scripts to verify its observations. This chronological progression highlights a shift from general multimodal understanding to specialized, high-precision physical reasoning.

Precision Pointing and Spatial Logic

One of the most critical enhancements in Gemini Robotics-ER 1.6 is its improved "pointing" capability. While pointing might seem like a simple task, in the context of robotics, it is the foundation for all spatial reasoning. The model can now identify precise pixel-level locations in an image, which facilitates several advanced functions:

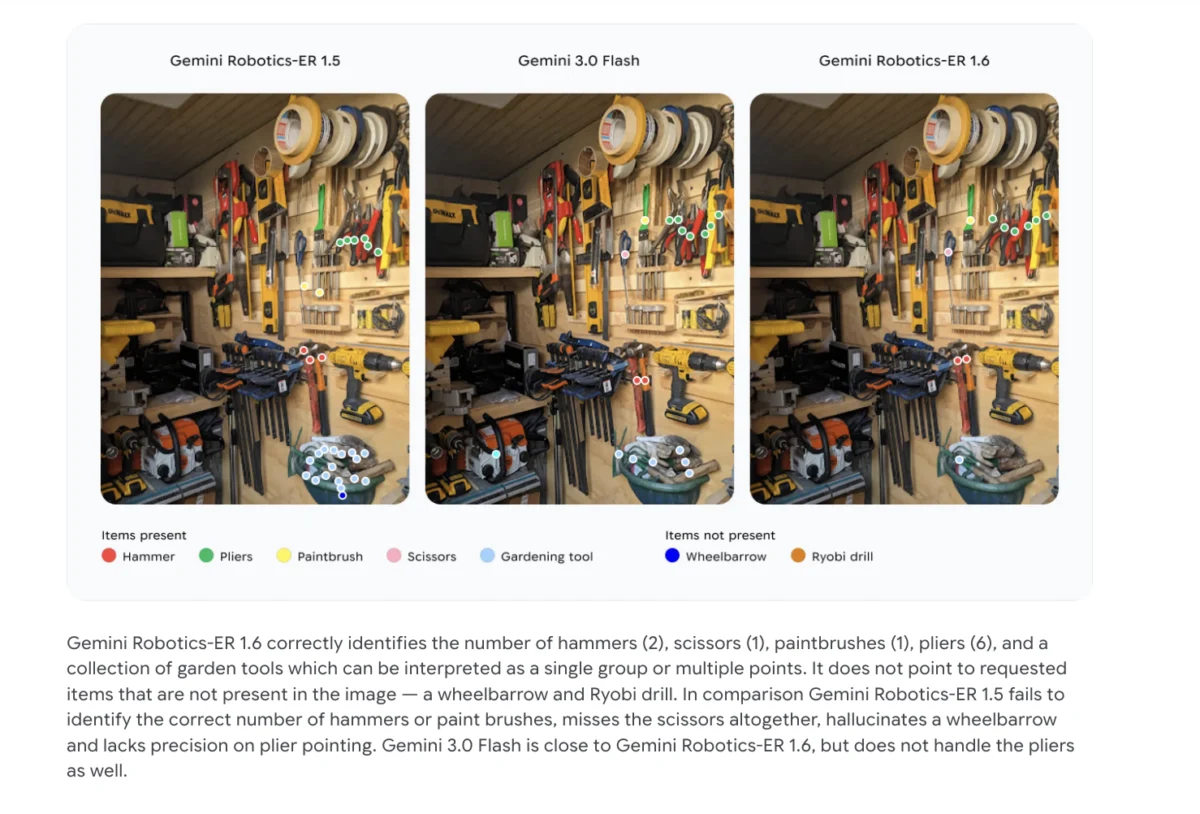

- Precision Object Detection and Counting: The model can distinguish between similar objects in a cluttered environment. In internal benchmarks, ER 1.6 successfully identified the exact number of hammers, scissors, and pliers in a scene, whereas version 1.5 frequently miscounted or missed objects entirely.

- Relational Logic: The model can understand comparisons, such as identifying the "smallest" item in a group or understanding "from-to" relationships, which are essential for tasks like "move the smallest cup to the top shelf."

- Constraint Compliance: ER 1.6 can process complex conditional prompts, such as "point to every object that is small enough to fit inside this blue container." This requires an understanding of volume and physical constraints that goes beyond simple image recognition.

- Motion Trajectory Mapping: By identifying optimal grasp points and potential paths of movement, the model helps the VLA executor avoid obstacles and handle fragile objects with greater care.

For AI professionals, these improvements are vital because they drastically reduce "hallucinations." In robotics, a hallucination occurs when a model "sees" an object that is not there. If a robot attempts to grab a non-existent tool, it can lead to mechanical strain or safety hazards. ER 1.6’s ability to correctly identify what is not present is as important as its ability to identify what is.

Success Detection and Multi-View Reasoning

A recurring challenge in robotics is "success detection"—the ability of a robot to know when it has actually finished a task or if it has failed and needs to try again. Gemini Robotics-ER 1.6 introduces a more robust decision-making engine for this purpose.

Modern robotic setups often utilize multiple camera feeds, such as a stationary overhead camera and a camera mounted on the robot’s wrist. ER 1.6 excels at "multi-view reasoning," which involves fusing these different visual streams into a single, coherent understanding of the environment. This is particularly useful in "occluded" scenarios where an object might be hidden from the overhead camera but visible to the wrist camera. By synthesizing these views, the model can confirm whether a gripper has successfully closed around an object or if a part has been correctly seated in an assembly line.

Industrial Breakthrough: The Instrument Reading Capability

The most significant "zero-to-one" feature in Gemini Robotics-ER 1.6 is its ability to read and interpret industrial instruments. This capability was developed in response to real-world needs in facility inspection, a sector where Google DeepMind has collaborated with Boston Dynamics.

In many industrial environments, critical data is still displayed on analog gauges, pressure meters, and sight glasses. Replacing these with digital sensors is often prohibitively expensive or technically impossible. Consequently, there is a massive demand for mobile robots, such as Boston Dynamics’ Spot, to patrol facilities and read these instruments manually.

Instrument reading is a remarkably complex task for AI. It requires:

- Needle and Tick Mark Interpretation: Identifying the exact position of a needle relative to logarithmic or linear scales.

- Distortion Correction: Accounting for the angle of the camera and the curvature of the glass (parallax error).

- Multi-Dial Integration: Some gauges have multiple needles representing different decimal places (e.g., utility meters), which must be combined into a single numerical value.

- Unit Context: Reading the small text on a gauge to determine if the measurement is in PSI, Bar, or Celsius.

Performance Benchmarks and Data Analysis

The technical superiority of Gemini Robotics-ER 1.6 is reflected in its performance data, particularly when compared to its predecessors and the Gemini 3.0 Flash model. In the specific task of instrument reading, the success rates show a clear upward trajectory:

- Gemini Robotics-ER 1.5: 23% success rate (evaluated without agentic vision).

- Gemini 3.0 Flash: 67% success rate (with agentic vision).

- Gemini Robotics-ER 1.6: 86% success rate.

- Gemini Robotics-ER 1.6 (with Agentic Vision): 93% success rate.

The jump from 23% to 93% is not merely a refinement of existing code but a fundamental shift in architecture. By using agentic vision, the 1.6 model can execute a multi-step "look-and-verify" loop. It first captures a wide shot, identifies the gauge, zooms in for a high-resolution crop, uses pointing to mark the needle and scale, and then runs a code script to calculate the precise reading based on geometric proportions.

Industry Implications and Broader Impact

The advancement of Gemini Robotics-ER 1.6 has profound implications for the future of automated labor and industrial safety. By providing a "cognitive brain" that can be integrated into various robotic forms—from humanoid executors to quadrupeds like Spot—Google DeepMind is lowering the barrier to entry for autonomous facility management.

Industry experts suggest that this technology will first see mass adoption in "dangerous, dull, or dirty" (the three Ds) jobs. For instance, in oil and gas refineries or nuclear power plants, robots equipped with ER 1.6 can perform routine inspections of analog equipment without exposing human workers to hazardous conditions.

Furthermore, the model’s ability to understand "relational logic" and "constraint compliance" suggests a future where robots can be deployed in unstructured environments like warehouses or hospitals. In these settings, the ability to reason through a problem—such as "find a way to move this cart through a crowded hallway"—is more valuable than the ability to follow a rigid, pre-mapped path.

Conclusion

Gemini Robotics-ER 1.6 represents a landmark achievement in the quest for General Purpose Robotics. By separating high-level reasoning from low-level execution, Google DeepMind has created a system that is both flexible and precise. The introduction of instrument reading and enhanced spatial pointing addresses some of the most persistent bottlenecks in robotic autonomy.

As this model continues to be integrated into commercial robotics platforms, the focus will likely shift toward "long-horizon" tasks—actions that take minutes or hours rather than seconds. With the strategist (ER 1.6) and the executor (VLA 1.5) working in harmony, the gap between digital intelligence and physical capability is narrower than ever before. The ability for a robot to not just see, but to understand and reason about the physical world, marks the beginning of a new era in industrial and domestic automation.