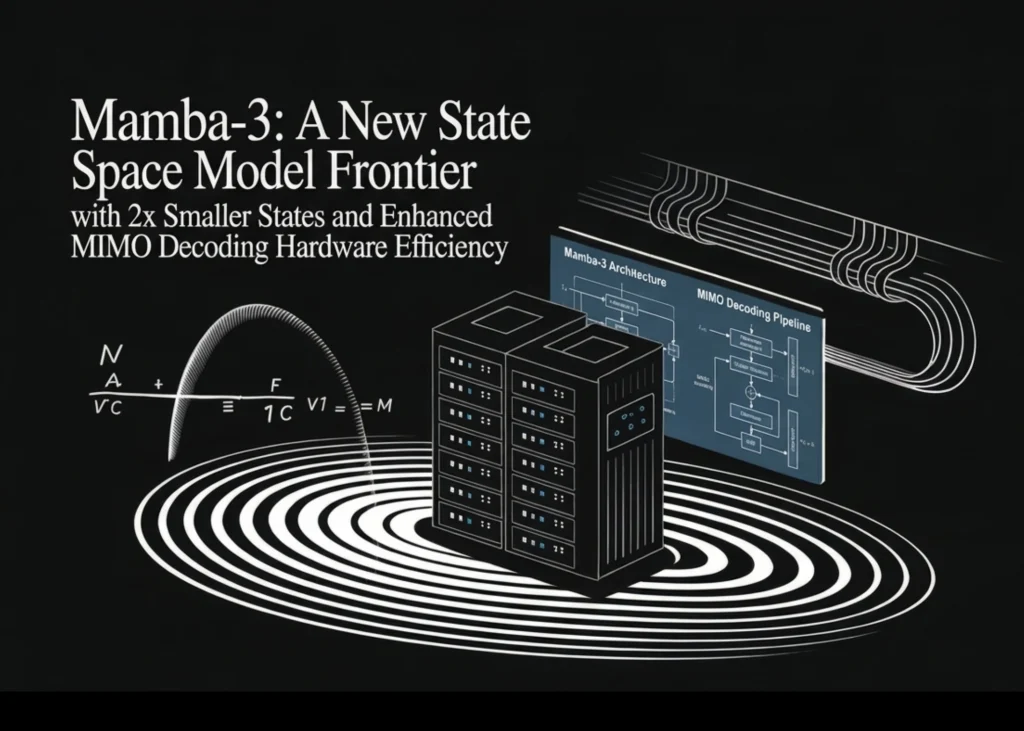

The landscape of generative artificial intelligence is undergoing a fundamental shift as the industry moves from a focus on training-scale dominance to the optimization of inference-time compute. As Large Language Models (LLMs) are integrated into real-time applications, the limitations of the traditional Transformer architecture—specifically its quadratic computational complexity and linear memory growth—have become significant hurdles for large-scale deployment. To address these bottlenecks, a collaborative team of researchers from Carnegie Mellon University (CMU), Princeton University, Together AI, and Cartesia AI has introduced Mamba-3. This new iteration of the State Space Model (SSM) framework adopts an "inference-first" philosophy, aiming to bridge the performance gap between sub-quadratic models and traditional Transformers while maintaining superior hardware efficiency.

The Evolution of State Space Models

To understand the significance of Mamba-3, it is necessary to examine the trajectory of sequence modeling over the last three years. For nearly a decade, the Transformer architecture, powered by the Attention mechanism, has been the undisputed standard for natural language processing. However, Attention requires every token in a sequence to look back at every previous token, leading to a computational cost that grows quadratically ($O(N^2)$) as sequence lengths increase.
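The quadratic growth can be made concrete by counting work per sequence. The sketch below is a simple tally, not a benchmark, and both function names are hypothetical. It counts the query-key pairs scored under causal attention and compares them with the one-update-per-token cost of a recurrent model.

```python
def causal_attention_pairs(n: int) -> int:
    """Number of (query, key) pairs scored by causal attention over n tokens."""
    # Token t attends to itself and the t - 1 tokens before it: 1 + 2 + ... + n.
    return n * (n + 1) // 2


def recurrent_updates(n: int) -> int:
    """Number of state updates a recurrent model performs over n tokens."""
    return n


for n in (1_000, 2_000, 4_000):
    # Doubling n roughly quadruples the attention pairs but only doubles the updates.
    print(n, causal_attention_pairs(n), recurrent_updates(n))
```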

In response, researchers began exploring State Space Models (SSMs), which originate from control theory. SSMs process information through a hidden state that is updated linearly, offering a theoretical complexity of $O(N)$. The journey began with the Structured State Space (S4) model, which proved that SSMs could handle long-range dependencies. This was followed by Mamba-1, which introduced "selectivity," allowing the model to choose which information to remember or discard based on the input. Mamba-2 further refined this by introducing "State Space Duality," linking SSMs to linear attention and improving training efficiency on modern GPUs.
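As a rough illustration of a linearly updated hidden state with input-dependent "selectivity", consider the scalar toy below. It is a sketch under stated assumptions, not the Mamba implementation: the function name, the single scalar state, and the parameters `w_delta` and `a` are all illustrative choices.

```python
import math


def selective_scan(xs, w_delta=1.0, a=-1.0):
    """Toy scalar SSM: h_t = exp(delta_t * a) * h_{t-1} + delta_t * x_t.

    delta_t depends on the input. A large step mostly overwrites the state,
    while a small step mostly preserves it. All parameters are illustrative.
    """
    h = 0.0
    ys = []
    for x in xs:
        delta = math.log1p(math.exp(w_delta * x))  # softplus keeps delta > 0
        h = math.exp(delta * a) * h + delta * x    # one constant-cost update per token
        ys.append(h)
    return ys


print(selective_scan([0.5, -2.0, 3.0, 0.0]))
```

The loop touches each token once and keeps a single state value, which is where the $O(N)$ time and constant inference memory come from.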

Despite these advances, Mamba-1 and Mamba-2 still faced "modeling gaps" where they underperformed Transformers on certain logic-heavy tasks or required external convolutional layers to maintain stability. Mamba-3 represents a comprehensive overhaul intended to eliminate these remaining weaknesses through three primary methodological updates: exponential-trapezoidal discretization, complex-valued state updates, and a Multi-Input Multi-Output (MIMO) formulation.

Refining Temporal Dynamics: Exponential-Trapezoidal Discretization

At the core of any SSM is the transition from a continuous-time differential equation to a discrete-time recurrence that a computer can process. This process is known as discretization. Previous iterations of the Mamba architecture relied on "exponential-Euler" discretization, a first-order approximation. While computationally simple, first-order approximations can introduce errors in how the model perceives the flow of information over time, especially in high-frequency data.

Mamba-3 introduces exponential-trapezoidal discretization, a second-order accurate approximation of the state-input integral. In practical terms, the update moves from a two-term recurrence (the decayed previous state plus the current input) to a three-term recurrence that also includes the previous input. Weighing the input at both ends of each time step lets the model track the input signal more accurately.
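The gap between first-order and second-order accuracy can be checked numerically on a scalar test problem. The sketch below integrates $h' = -h + t$ with $h(0) = 0$, whose exact value at $t = 1$ is $e^{-1}$. The blend weight `lam` and the exact form of the update are assumptions made for illustration: `lam = 1.0` gives a two-term, Euler-style update, and `lam = 0.5` gives a three-term, trapezoid-style update.

```python
import math

EXACT = math.exp(-1.0)  # h(1) for h' = -h + t, h(0) = 0, since h(t) = t - 1 + exp(-t)


def simulate(n_steps, lam, a=-1.0, t_end=1.0):
    """Integrate h' = a*h + u(t) with u(t) = t using a blended update rule.

    lam = 1.0 -> two-term update (decayed state + current input)
    lam = 0.5 -> three-term update (also uses the previous input)
    """
    dt = t_end / n_steps
    alpha = math.exp(a * dt)      # exact decay of the state over one step
    h, u_prev = 0.0, 0.0
    for k in range(1, n_steps + 1):
        u_curr = k * dt           # u(t_k) = t_k
        h = alpha * h + (1.0 - lam) * dt * alpha * u_prev + lam * dt * u_curr
        u_prev = u_curr
    return h


def error(n_steps, lam):
    return abs(simulate(n_steps, lam) - EXACT)


for n in (10, 20, 40):
    print(n, error(n, 1.0), error(n, 0.5))
```

Halving the step size roughly halves the error of the two-term rule and roughly quarters the error of the three-term rule, which is what first-order and second-order accuracy mean in practice.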

Technically, this update manifests as an implicit data-dependent convolution with a width of two. By embedding this convolutional behavior directly into the core recurrence, the researchers found they could remove the external "short causal convolutions" that were previously a staple of recurrent architectures. This simplification reduces the architectural footprint while enhancing the model’s ability to handle local dependencies, leading to smoother training dynamics and better convergence.
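One way to see the implicit convolution is to compare a three-term recurrence with a plain two-term recurrence fed by a width-two causal convolution whose taps change at every step. The scalar sketch below uses hypothetical coefficient names (`alphas`, `betas`, `gammas`) and checks that the two forms agree. It illustrates the equivalence only and does not reproduce the paper's kernel.

```python
def three_term(xs, alphas, betas, gammas):
    """h_t = alpha_t * h_{t-1} + beta_t * x_{t-1} + gamma_t * x_t."""
    h, x_prev, out = 0.0, 0.0, []
    for x, a, b, g in zip(xs, alphas, betas, gammas):
        h = a * h + b * x_prev + g * x
        x_prev = x
        out.append(h)
    return out


def conv_then_two_term(xs, alphas, betas, gammas):
    """Width-2 causal convolution with per-step taps, then h_t = alpha_t * h_{t-1} + v_t."""
    prev_inputs = [0.0] + list(xs[:-1])   # x_{t-1}, with zero padding at t = 0
    vs = [b * xp + g * x for xp, x, b, g in zip(prev_inputs, xs, betas, gammas)]
    h, out = 0.0, []
    for v, a in zip(vs, alphas):
        h = a * h + v
        out.append(h)
    return out


xs = [1.0, 2.0, -1.0, 0.5]
alphas = [0.9, 0.8, 0.7, 0.6]
betas = [0.1, 0.2, 0.3, 0.4]
gammas = [0.5, 0.4, 0.3, 0.2]
direct = three_term(xs, alphas, betas, gammas)
via_conv = conv_then_two_term(xs, alphas, betas, gammas)
print(max(abs(p - q) for p, q in zip(direct, via_conv)))
```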

Overcoming Logical Constraints with Complex States and the RoPE Trick

One of the most persistent criticisms of real-valued linear models is their inability to solve specific synthetic logic tasks, such as the "Parity" problem (determining if a sequence of bits contains an even or odd number of ones). This failure is not a matter of model size, but of mathematical representation. Real-valued diagonal transitions have only real eigenvalues, and in Mamba-style models these are decay factors between 0 and 1, so they cannot represent "rotational" dynamics. In many logical and mathematical tasks, the model must essentially "rotate" its internal state to track cycles; a real decay factor can only shrink the state, not rotate it.

To solve this, Mamba-3 incorporates complex-valued SSMs. By allowing the state to exist in the complex plane, the model gains the ability to represent periodic and rotational patterns. However, computing complex-valued updates can be computationally expensive on standard hardware. To mitigate this, the research team developed what they call the "RoPE Trick."
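A minimal sketch of why rotation helps: a single complex state can count modulo $m$ by rotating through $2\pi/m$ per unit of input, and the answer is read off from the final angle. Parity is the case $m = 2$. The function name and the decoding step are illustrative assumptions rather than anything specified by the researchers.

```python
import cmath
import math


def count_mod_by_rotation(values, m):
    """Track sum(values) mod m with one complex state that only rotates."""
    h = 1 + 0j
    for v in values:
        # Input-dependent rotation: v units of 2*pi/m around the unit circle.
        h *= cmath.exp(2j * math.pi * v / m)
    # Decode the residue from the angle of the final state.
    return round(cmath.phase(h) * m / (2 * math.pi)) % m


print(count_mod_by_rotation([1, 0, 1, 1], 2))  # parity of the bit string
print(count_mod_by_rotation([2, 2, 1], 3))     # sum modulo 3
```

A state that can only decay has no way to return to where it started after $m$ steps, which is the cyclic behavior this toy relies on.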

The team established a theoretical equivalence between complex-valued SSMs and real-valued SSMs that apply data-dependent Rotary Positional Embeddings (RoPE) to the input and output projections. By applying aggregated, data-dependent rotations across time steps, Mamba-3 can solve Parity and Modular Arithmetic tasks, areas where Mamba-2 and other real-valued variants performed no better than random guessing. The result suggests that a sub-quadratic model can close a state-tracking gap that earlier real-valued variants could not.
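The equivalence can be sketched for a single complex state. In the toy below, the first function rotates the state inside the recurrence. The second keeps only a real decay in the recurrence and instead rotates the input and output projections by the cumulative angle, in the way RoPE rotates queries and keys. All names and the scalar setup are assumptions for illustration.

```python
import cmath


def complex_ssm(xs, thetas, decays, bs, cs):
    """h_t = decay_t * e^{i*theta_t} * h_{t-1} + b_t * x_t,  y_t = Re(conj(c_t) * h_t)."""
    h, ys = 0j, []
    for x, th, a, b, c in zip(xs, thetas, decays, bs, cs):
        h = a * cmath.exp(1j * th) * h + b * x
        ys.append((c.conjugate() * h).real)
    return ys


def rotated_projection_ssm(xs, thetas, decays, bs, cs):
    """Same outputs with a real decay only; rotations are moved onto b_t and c_t."""
    g, total, ys = 0j, 0.0, []   # g holds two real channels that share one real decay
    for x, th, a, b, c in zip(xs, thetas, decays, bs, cs):
        total += th                        # cumulative, data-dependent angle
        rot = cmath.exp(-1j * total)
        g = a * g + (rot * b) * x          # rotated input projection
        ys.append(((rot * c).conjugate() * g).real)  # rotated output projection
    return ys


xs = [0.5, -1.0, 2.0, 0.25]
thetas = [0.3, 1.1, -0.7, 2.0]
decays = [0.9, 0.8, 0.95, 0.7]
bs = [1 + 0.5j, 0.2 - 1j, -0.3 + 0.4j, 1j]
cs = [0.7 - 0.2j, 1 + 1j, 0.5j, -1 + 0.1j]
y_complex = complex_ssm(xs, thetas, decays, bs, cs)
y_rotated = rotated_projection_ssm(xs, thetas, decays, bs, cs)
print(max(abs(p - q) for p, q in zip(y_complex, y_rotated)))
```

The two outputs agree up to floating-point error because substituting $g_t = e^{-i\Theta_t} h_t$, with $\Theta_t$ the running sum of angles, removes the rotation from the recurrence.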

Enhancing Hardware Utilization: The MIMO Formulation

The third major innovation in Mamba-3 addresses the "memory-wall" problem in modern AI hardware. While SSMs are theoretically faster than Transformers during inference, they often suffer from low "arithmetic intensity." In standard Single-Input Single-Output (SISO) decoding, the ratio of floating-point operations (FLOPs) to memory bytes moved is low. This means that a high-end GPU like the NVIDIA H100 spends most of its time waiting for data to move from memory to the processor, rather than actually performing calculations.

Mamba-3 introduces a Multi-Input Multi-Output (MIMO) formulation to increase the work done per byte of memory moved. By increasing the rank ($R$) of the input and output projections, the state update is transformed from a simple outer product into a more intensive matrix-matrix multiplication.
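A back-of-envelope estimate shows how rank affects arithmetic intensity. The sketch below assumes that one decode step reads and writes the full state once, and that the update and readout are each a rank-$R$ matrix product. The function name, the cost model, and the example sizes are illustrative assumptions, not figures from the researchers.

```python
def decode_intensity(n_state, head_dim, rank, bytes_per_elem=2):
    """Rough FLOPs-per-byte estimate for one decode step of one head."""
    state_elems = n_state * head_dim
    flops = (
        state_elems                   # decay: scale every state entry
        + 2 * state_elems * rank      # update: state += B @ X^T
        + 2 * state_elems * rank      # readout: Y = state^T @ C
    )
    proj_elems = 2 * (n_state + head_dim) * rank  # B, C, X and Y for this step
    bytes_moved = (2 * state_elems + proj_elems) * bytes_per_elem  # state read + write
    return flops / bytes_moved


siso = decode_intensity(n_state=128, head_dim=64, rank=1)
mimo = decode_intensity(n_state=128, head_dim=64, rank=4)
print(siso, mimo, mimo / siso)
```

Under this model the memory traffic is dominated by the state and barely changes with rank, while the FLOPs grow almost linearly in $R$, so more of the GPU's compute is used per byte moved.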

The results of this shift are significant. At a fixed state size, the MIMO formulation increases decoding FLOPs by up to four times compared to Mamba-2. Because this additional computation is "overlaid" with the existing memory I/O required for the state update, the model achieves better quality and lower perplexity without increasing the actual wall-clock latency during inference. This makes Mamba-3 exceptionally efficient for deployment on high-bandwidth memory (HBM) systems, as it maximizes the utility of the hardware’s compute units.

Architectural Refinements and Normalization

Beyond the mathematical core, Mamba-3 adopts several architectural refinements to ensure stability at scale. The model follows a "Llama-style" layout, which has become the industry standard for LLMs, alternating Mamba blocks with SwiGLU (Swish-Gated Linear Unit) blocks.
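For readers unfamiliar with SwiGLU, the feed-forward block computes a gated product of two linear projections before projecting back down. The dependency-free sketch below uses hypothetical weight names and tiny matrices. It shows the standard SwiGLU formula, not Mamba-3's actual code.

```python
import math


def silu(z):
    """Swish / SiLU activation: z * sigmoid(z)."""
    return z / (1.0 + math.exp(-z))


def matvec(w, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def swiglu(x, w_gate, w_up, w_down):
    """SwiGLU block: w_down @ (silu(w_gate @ x) * (w_up @ x))."""
    gate = [silu(z) for z in matvec(w_gate, x)]
    up = matvec(w_up, x)
    return matvec(w_down, [g * u for g, u in zip(gate, up)])


identity = [[1.0, 0.0], [0.0, 1.0]]
print(swiglu([1.0, 2.0], identity, identity, [[1.0, 1.0]]))
```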

One notable change is the shift from RMSNorm (Root Mean Square Normalization) to standard LayerNorm. The researchers observed that as the model scales, LayerNorm provides better numerical stability for the complex state updates. Additionally, the model utilizes learnable biases for the $B$ and $C$ projections, further enhancing its ability to adapt to diverse data distributions. These refinements contribute to a model that is not only faster but also more robust during the pre-training phase.
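The difference between the two normalizations is small in code. RMSNorm only rescales by the root mean square, whereas LayerNorm also subtracts the mean first. The sketch below omits the learnable gain and bias that real implementations carry, and the `eps` value is an assumed default.

```python
import math


def rms_norm(x, eps=1e-6):
    """Divide by the root mean square; the mean is left untouched."""
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [v / rms for v in x]


def layer_norm(x, eps=1e-6):
    """Subtract the mean, then divide by the standard deviation."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


x = [1.0, 2.0, 3.0, 4.0]
print(rms_norm(x))
print(layer_norm(x))
```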

Empirical Performance and Benchmark Results

The research team evaluated Mamba-3 using the FineWeb-Edu dataset, a large web corpus filtered for educational content. Testing was conducted across four model scales, ranging from 180 million to 1.5 billion parameters.

The results demonstrate a clear hierarchy of performance. In comparisons of 1.5B parameter models, the traditional Transformer achieved an average downstream accuracy of 55.4% and a perplexity of 10.51. Mamba-2 showed a slight improvement with 55.7% accuracy. However, Mamba-3 represented a significant leap forward. The SISO version of Mamba-3 reached 56.4% accuracy, while the Mamba-3 MIMO version (with a rank of $R=4$) achieved 57.6% accuracy and a perplexity of 10.24.

| Model (1.5B) | Avg. Downstream Acc. (%) | FineWeb-Edu Perplexity |
|---|---|---|
| Transformer | 55.4 | 10.51 |
| Mamba-2 | 55.7 | 10.47 |
| Mamba-3 SISO | 56.4 | 10.35 |
| Mamba-3 MIMO (R=4) | 57.6 | 10.24 |

These figures indicate that, at the 1.5B scale, Mamba-3 is not just a faster alternative to the Transformer but also a modestly more capable one. The lower perplexity means the model predicts held-out text more accurately, while the gain in downstream accuracy points to a benefit on practical tasks.
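To put the table in relative terms, the short calculation below takes the reported 1.5B figures and computes each model's accuracy gain in percentage points and its perplexity reduction in percent, both measured against the Transformer baseline. The variable names are illustrative.

```python
# (average downstream accuracy %, FineWeb-Edu perplexity) as reported in the table
results = {
    "Transformer": (55.4, 10.51),
    "Mamba-2": (55.7, 10.47),
    "Mamba-3 SISO": (56.4, 10.35),
    "Mamba-3 MIMO (R=4)": (57.6, 10.24),
}

base_acc, base_ppl = results["Transformer"]
deltas = {
    name: (
        round(acc - base_acc, 1),                      # accuracy gain, points
        round(100 * (base_ppl - ppl) / base_ppl, 2),   # perplexity reduction, %
    )
    for name, (acc, ppl) in results.items()
}

for name, (acc_gain, ppl_drop) in deltas.items():
    print(f"{name}: +{acc_gain} pts accuracy, {ppl_drop}% lower perplexity")
```

On these numbers the MIMO variant gains 2.2 points of accuracy and lowers perplexity by about 2.6% relative to the Transformer.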

Broader Implications for the AI Industry

The introduction of Mamba-3 comes at a pivotal moment for the AI industry. As companies race to build "agentic" systems that require long-context processing and rapid-fire reasoning, the cost of inference has become a primary concern. Transformers, while powerful, are expensive to run at the scale required for global consumer applications.

The success of Mamba-3 suggests that the "Transformer hegemony" may be facing its first serious challenge. By proving that sub-quadratic models can match or exceed Transformer performance on both logical benchmarks and standard language tasks, the researchers have opened a path toward more sustainable AI.

For hardware manufacturers, Mamba-3 provides a blueprint for models that can better utilize the massive compute power of modern AI chips. For software developers, it offers the potential for LLMs that can handle massive document sets or hours of video content without the quadratic slowdown associated with current Attention-based models.

As the project moves toward open-source availability on platforms like GitHub, the developer community will likely begin exploring how Mamba-3 scales beyond the 1.5B parameter mark. If the scaling laws observed in these initial tests hold true for 7B, 70B, or even larger models, Mamba-3 could fundamentally redefine the architectural standards of the next generation of artificial intelligence.