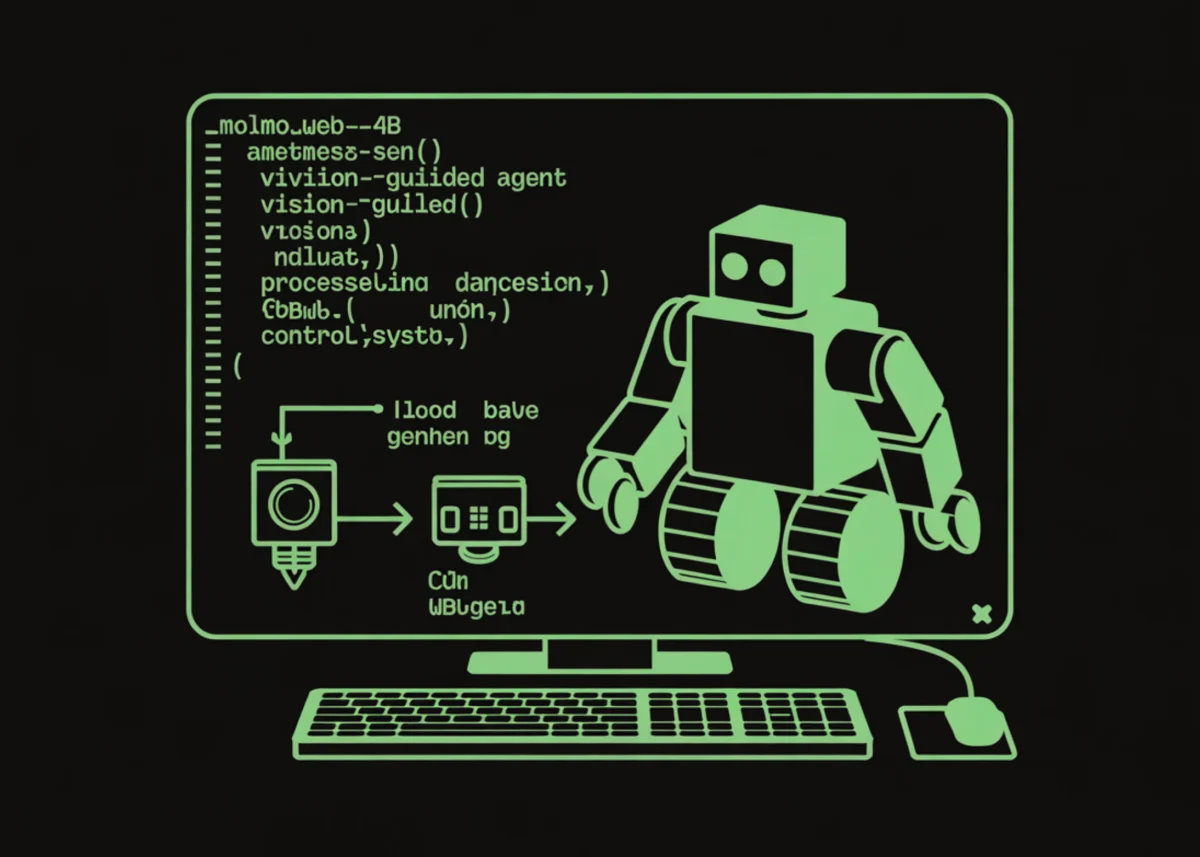

The Allen Institute for AI (Ai2) has officially introduced MolmoWeb, an open-source multimodal web agent designed to navigate and interact with websites using visual comprehension rather than traditional code-based parsing. Unlike conventional web automation tools that rely on Document Object Model (DOM) trees or HTML structures—which can be inconsistent, obfuscated, or overly complex—MolmoWeb interprets the web through direct screenshot analysis. This development marks a significant shift in the field of autonomous agents, moving toward a more human-like "vision-first" approach to digital interaction. By processing visual inputs and generating precise action sequences, MolmoWeb addresses long-standing challenges in web navigation, including dynamic content rendering and non-standard interface designs.

Technical Foundation and Vision-Based Navigation

The core innovation of MolmoWeb lies in its ability to understand the visual layout of a browser window. Most current web agents function by "reading" the underlying HTML of a page. While effective for simple sites, this method often struggles with modern web applications built with frameworks such as React or Vue, where content is rendered dynamically and the markup may not reflect what is actually visible on screen. MolmoWeb bypasses these limitations by treating the browser viewport as a visual canvas.

The model utilizes a multimodal architecture that combines vision encoders with large language model (LLM) reasoning capabilities. Specifically, the MolmoWeb-4B and MolmoWeb-8B variants are trained to recognize interactive elements—such as buttons, input fields, and links—directly from pixel data. When tasked with an objective, the model analyzes a screenshot, formulates a "thought" process regarding the next necessary step, and then outputs a specific "action" string. These actions include clicking specific normalized coordinates, typing text, scrolling, or navigating between tabs.
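To make the idea of normalized click coordinates concrete, the following minimal sketch maps a normalized point onto pixel positions for different viewport sizes. It assumes coordinates lie in the range 0 to 1 with the origin at the top-left corner; the article does not spell out the convention, and the helper name to_pixels is hypothetical rather than part of any Ai2 release.

```python
from typing import Tuple


def to_pixels(x_norm: float, y_norm: float, width: int, height: int) -> Tuple[int, int]:
    """Map normalized coordinates (assumed 0..1, top-left origin) to viewport pixels."""
    if not (0.0 <= x_norm <= 1.0 and 0.0 <= y_norm <= 1.0):
        raise ValueError("normalized coordinates must lie in [0, 1]")
    # Clamp to the last valid pixel so that 1.0 does not land outside the viewport.
    x = min(round(x_norm * width), width - 1)
    y = min(round(y_norm * height), height - 1)
    return x, y


# The same normalized point resolves to different pixels on different viewports.
for size in [(1280, 720), (1920, 1080)]:
    print(size, to_pixels(0.85, 0.12, *size))
```

Because the model's output is independent of resolution, only this final conversion step needs to know the actual viewport size.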

To facilitate deployment on accessible hardware, the model supports 4-bit NormalFloat (NF4) quantization. This allows the 4-billion-parameter version to operate within approximately 6 GB of VRAM, making it compatible with consumer-grade GPUs and free-tier cloud environments like Google Colab. Lowering the hardware bar in this way is expected to accelerate research and development in the open-source community.
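A rough back-of-the-envelope calculation shows why 4-bit quantization matters here. The sketch below estimates the memory taken by the weights alone at different precisions. It ignores the small per-block scaling constants that NF4 stores, and it does not model activations, the vision encoder's working memory, or framework overhead, which presumably account for the rest of the roughly 6 GB figure (the article gives no breakdown).

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate memory for model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


params = 4e9  # the 4-billion-parameter variant
for label, bits in [("fp16/bf16", 16), ("int8", 8), ("nf4", 4)]:
    print(f"{label:>9}: {weight_memory_gb(params, bits):.1f} GB")
```

Under these assumptions the weights shrink from about 8 GB at 16-bit precision to about 2 GB at 4 bits, which is what leaves room for everything else on a small GPU.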

The MolmoWebMix Dataset: Training the Next Generation of Agents

The efficacy of MolmoWeb is rooted in its extensive and diverse training regime. Ai2 has released the MolmoWebMix dataset, a massive collection of web interaction data designed to ground models in real-world browsing scenarios. The dataset is divided into three primary components:

- MolmoWeb-HumanTrajs: This subset contains approximately 30,000 human-recorded trajectories. These records capture how human users navigate the web to complete specific tasks, providing a gold standard for intuitive reasoning and efficient pathfinding.

- MolmoWeb-SyntheticTrajs: To scale the training data, Ai2 utilized "axtree" agents, that is, agents that operate on the browser's accessibility tree, to generate synthetic trajectories. These automated paths help the model understand a wider variety of edge cases and website layouts that may not have been captured in the human-recorded set.

- MolmoWeb-SyntheticQA: This is the largest component, consisting of 2.2 million screenshot-based question-and-answer pairs. These pairs focus on visual grounding, teaching the model to identify exactly where a "Submit" button is located or which text box corresponds to a "Username" prompt.

This tiered approach to data collection ensures that MolmoWeb can generalize across different industries, from e-commerce and academic research to travel booking and corporate intranets.

Operational Workflow: The Thought-Action Paradigm

MolmoWeb operates on a structured loop that mimics how a person browses: look at the page, decide what to do, act, and look again. The workflow typically begins with a user-defined goal, such as "Find the latest research paper on Molmo from the Ai2 website." The agent then repeats the following steps:

- Observation: The system captures a high-resolution screenshot of the current browser state.

- Contextualization: The model receives the goal, the history of previous actions taken, and the current URL/page title.

- Reasoning (Thought): The model generates a textual explanation of what it sees and what it needs to do next. For example: "I see a search bar in the top right; I should click it to enter my query."

- Execution (Action): The model outputs a command in a standardized action space, such as click(0.85, 0.12) or type("Molmo paper").

- Verification: After the action is executed via a browser automation tool like Playwright, the cycle repeats with a new screenshot until the task is marked as complete.
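The cycle above can be sketched as a short loop. This is an illustrative outline, not Ai2's released agent code: the names Observation, Step, and run_agent are hypothetical, the model and browser are passed in as plain callables so the sketch runs without either, and it assumes an action beginning with send_msg( marks the task as complete.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Observation:
    screenshot: bytes  # raw image bytes of the current viewport
    url: str
    title: str


@dataclass
class Step:
    thought: str  # the model's textual reasoning
    action: str   # the action string, e.g. 'click(0.85, 0.12)'


def run_agent(
    goal: str,
    observe: Callable[[], Observation],
    predict: Callable[[str, List[Step], Observation], Step],
    execute: Callable[[str], None],
    max_steps: int = 20,
) -> List[Step]:
    """Observe, reason, act, and repeat until a terminal action or the step limit."""
    history: List[Step] = []
    for _ in range(max_steps):
        obs = observe()                     # Observation
        step = predict(goal, history, obs)  # Contextualization + Thought + Action
        history.append(step)
        if step.action.startswith("send_msg("):  # assumed terminal action
            break
        execute(step.action)                # Execution; the next pass re-observes
    return history


# Scripted stand-ins for the model and the browser (placeholders only).
script = iter([
    Step("I see a search bar in the top right; I should click it.", "click(0.85, 0.12)"),
    Step("The search bar is focused; I should type my query.", 'type("Molmo paper")'),
    Step("The results show the paper; I can report back.", 'send_msg("Found it.")'),
])
executed: List[str] = []
trace = run_agent(
    goal="Find the latest research paper on Molmo from the Ai2 website.",
    observe=lambda: Observation(b"", "https://example.com", "Example"),
    predict=lambda goal, history, obs: next(script),
    execute=executed.append,
)
for step in trace:
    print(step.action)
```

In a real deployment, observe and execute would wrap a browser automation tool and predict would call the model; the max_steps cap is a safeguard added here so a confused agent cannot loop forever.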

The action space defined by Ai2 includes goto(url), click(x, y), type(text), scroll(direction), press(key), new_tab(), switch_tab(n), go_back(), and send_msg(text). The send_msg function is particularly important as it serves as the terminal action, where the agent provides the final answer or confirmation to the user.
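Before an action string can be executed, it has to be turned into a function name and arguments. The sketch below shows one cautious way to do that with Python's ast module, which parses the string without evaluating it. It assumes the model emits actions in Python call syntax with literal arguments (for example, a quoted string for scroll direction); the exact output format is not specified in the article, and parse_action is a hypothetical helper.

```python
import ast
from typing import Any, List, Tuple

# Action names as listed in the article.
ALLOWED_ACTIONS = {
    "goto", "click", "type", "scroll", "press",
    "new_tab", "switch_tab", "go_back", "send_msg",
}


def parse_action(action: str) -> Tuple[str, List[Any]]:
    """Split an action string such as 'click(0.85, 0.12)' into (name, args)."""
    tree = ast.parse(action.strip(), mode="eval")
    call = tree.body
    if not isinstance(call, ast.Call) or not isinstance(call.func, ast.Name):
        raise ValueError(f"not a simple function-call action: {action!r}")
    name = call.func.id
    if name not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {name!r}")
    if call.keywords:
        raise ValueError("keyword arguments are not supported in this sketch")
    # literal_eval accepts only literals (numbers, strings, ...), never arbitrary code.
    args = [ast.literal_eval(arg) for arg in call.args]
    return name, args


for raw in ['click(0.85, 0.12)', 'type("Molmo paper")', 'go_back()']:
    print(parse_action(raw))
```

Parsing rather than calling eval on model output keeps the agent from running arbitrary code, and the allow-list rejects anything outside the documented action space.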

Performance Benchmarks and Industry Analysis

In comparative testing, MolmoWeb has demonstrated industry-leading performance for open-source models. The MolmoWeb-8B variant achieved a 78.2% success rate on the WebVoyager benchmark, a standard used to evaluate the proficiency of web agents in completing multi-step tasks. Furthermore, with the application of test-time scaling—a technique where the model generates multiple potential paths and selects the most promising one—the success rate rose to 94.7% (pass@4).
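For readers unfamiliar with the pass@4 notation, the sketch below computes pass@k under its conventional meaning: a task counts as solved if at least one of k independent attempts succeeds. Whether Ai2's evaluation follows exactly this definition is an assumption, and the attempt results used here are made-up toy data, not benchmark output.

```python
from typing import List


def pass_at_k(results: List[List[bool]], k: int) -> float:
    """Fraction of tasks where at least one of the first k attempts succeeded."""
    if not results:
        raise ValueError("results must contain at least one task")
    solved = sum(any(attempts[:k]) for attempts in results)
    return solved / len(results)


# Toy data: four hypothetical tasks, four attempts each (True = task completed).
toy_results = [
    [True, False, False, False],
    [False, False, True, False],
    [False, False, False, False],
    [False, True, True, True],
]
print("pass@1:", pass_at_k(toy_results, 1))
print("pass@4:", pass_at_k(toy_results, 4))
```

The gap between the two numbers illustrates why extra attempts at test time can lift a success rate substantially even when the underlying model is unchanged.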

Analysts suggest that MolmoWeb’s vision-centric approach provides a significant advantage over text-only or DOM-reliant models. "The web is increasingly visual," noted one AI researcher following the release. "By training a model to ‘see’ the web, Ai2 is creating a tool that won’t break every time a developer updates their HTML tags or changes a class name in their CSS."

This robustness is critical for Enterprise Robotic Process Automation (RPA). Traditional RPA scripts are notoriously brittle, often requiring manual updates when a website’s layout undergoes even minor changes. MolmoWeb’s ability to re-evaluate the page visually at every step offers a "self-healing" capability that could drastically reduce maintenance costs for automated workflows.

Chronology of Development

The release of MolmoWeb follows a strategic timeline of multimodal research at the Allen Institute for AI:

- Late 2023: Ai2 begins intensive research into unified multimodal models, seeking to bridge the gap between image recognition and linguistic reasoning.

- Early 2024: The development of the Molmo family of models begins, focusing on efficiency and open-weights accessibility.

- Mid 2024: Data collection for MolmoWebMix commences, utilizing a combination of human crowdsourcing and sophisticated synthetic data generation.

- Late 2024: Preliminary versions of Molmo are tested on internal benchmarks, showing high aptitude for spatial reasoning.

- March 2025: Official release of MolmoWeb-4B and 8B, along with the full MolmoWebMix dataset and technical reports.

Broader Implications and Future Outlook

The launch of MolmoWeb carries implications that extend beyond simple web browsing. For accessibility, vision-based agents could serve as advanced intermediaries for users with visual impairments, navigating complex interfaces and summarizing visual data in real-time. In the realm of cybersecurity, such agents could be used to automate the testing of web applications for vulnerabilities, navigating through authentication flows and complex forms just as a human tester would.

However, the rise of autonomous web agents also prompts discussions regarding web ethics and "bot-friendly" internet architectures. As agents become more proficient at bypassing traditional barriers, website operators may need to reconsider how they manage automated traffic.

Ai2 has emphasized its commitment to open science by providing not just the model weights, but also the training data and the code required to run the agent loop. This transparency is intended to foster a collaborative environment where developers can refine the model’s accuracy and expand its utility.

In the coming months, the developer community is expected to integrate MolmoWeb into various third-party applications, ranging from personalized digital assistants to automated market research tools. The ability to run a sophisticated multimodal agent on a single GPU suggests that the era of ubiquitous, autonomous web navigation is rapidly approaching.

Summary of Key Features

- Vision-First Architecture: Eliminates the need for HTML/DOM parsing by using screenshot-based navigation.

- Normalized Coordinate System: Ensures consistent clicking and interaction across different screen resolutions and aspect ratios.

- Quantization Support: 4-bit NF4 configuration allows the model to run on hardware with as little as 6 GB of VRAM.

- Comprehensive Action Space: Supports multi-tab browsing, keyboard inputs, and scrolling.

- Open Dataset: The MolmoWebMix collection provides 2.2 million QA pairs and 30,000 human trajectories for community use.

- High Success Rates: The 8B variant achieves 78.2% on WebVoyager, rising to 94.7% (pass@4) with test-time scaling.

As the Allen Institute for AI continues to iterate on the Molmo architecture, the focus is expected to shift toward even smaller, faster models and improved long-term memory, allowing agents to remember user preferences and complex multi-session tasks across the vast and ever-changing landscape of the World Wide Web.