Moonshot AI researchers have unveiled a significant modification to the foundational Transformer architecture, proposing a new mechanism called Attention Residuals (AttnRes) to replace the industry-standard residual connections. Since the introduction of the original Transformer in 2017, residual connections—originally popularized by the ResNet architecture in computer vision—have remained one of the few components of the model that have gone largely unquestioned. While these connections are essential for stabilizing the training of very deep models, the research team at Moonshot AI argues that the standard implementation creates a structural bottleneck that limits the efficiency and representational power of modern Large Language Models (LLMs). By replacing fixed-weight additions with a dynamic, depth-wise attention mechanism, AttnRes allows layers to selectively aggregate information from the entire history of the network’s processing stream, leading to significant gains in both training efficiency and downstream task performance.

The Evolution and Limitations of Residual Connections

To understand the significance of the Moonshot AI proposal, it is necessary to examine the role of residual connections in deep learning history. Before the advent of residuals, training deep neural networks was notoriously difficult due to the vanishing gradient problem, where signals would dissipate as they moved backward through many layers. Residual connections solved this by creating "highways" for gradient flow, allowing each layer to learn a delta, or a "residual," that is added to the previous state. In the context of modern Transformers, specifically those using the PreNorm architecture, this means that the output of each block is added back into a running hidden state.

However, Moonshot AI researchers have identified a fundamental flaw in this "summation-based" approach. In a standard Transformer, every layer contributes to the hidden state with a fixed weight of 1.0. As a model grows deeper, the magnitude of this hidden state naturally increases, which effectively dilutes the contribution of any single new layer. This phenomenon, often referred to as "PreNorm dilution," means that as a model reaches 60, 80, or 100 layers, the relative impact of the final layers on the overall representation becomes progressively weaker. Furthermore, because the summation is irreversible, once information from an early layer is blended into the residual stream, later layers cannot easily isolate or recover that specific original representation. This lack of "selective access" forces the model to treat the entire prior history as a monolithic, compressed vector, rather than a library of features that can be queried as needed.
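The dilution effect can be illustrated with a toy residual stream. The sketch below is not the researchers' experiment: it assumes each layer's output is an independent random vector (real layer outputs are correlated), and the helper names `norm` and `relative_contributions` are hypothetical. It only shows that when every update is added with a fixed weight of 1.0, the ratio of a new update's norm to the norm of the running hidden state shrinks as depth grows.

```python
import math
import random


def norm(vec):
    """Euclidean norm of a list of floats."""
    return math.sqrt(sum(x * x for x in vec))


def relative_contributions(num_layers, dim=256, seed=0):
    """Return ||update_l|| / ||hidden_l|| for each layer of a toy residual stream."""
    rng = random.Random(seed)
    # Stand-in for the token embedding that starts the residual stream.
    hidden = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    ratios = []
    for _ in range(num_layers):
        # Assumption: a layer's output is modelled as independent Gaussian noise.
        update = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        # Standard residual connection: add the update with a fixed weight of 1.0.
        hidden = [h + u for h, u in zip(hidden, update)]
        ratios.append(norm(update) / norm(hidden))
    return ratios


ratios = relative_contributions(num_layers=100)
print(f"layer 1: {ratios[0]:.2f}  layer 100: {ratios[-1]:.2f}")
```

Under this independence assumption the ratio falls roughly as one over the square root of the depth, which is one way to picture why late layers have progressively less influence on the summed state.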

Technical Architecture of Attention Residuals

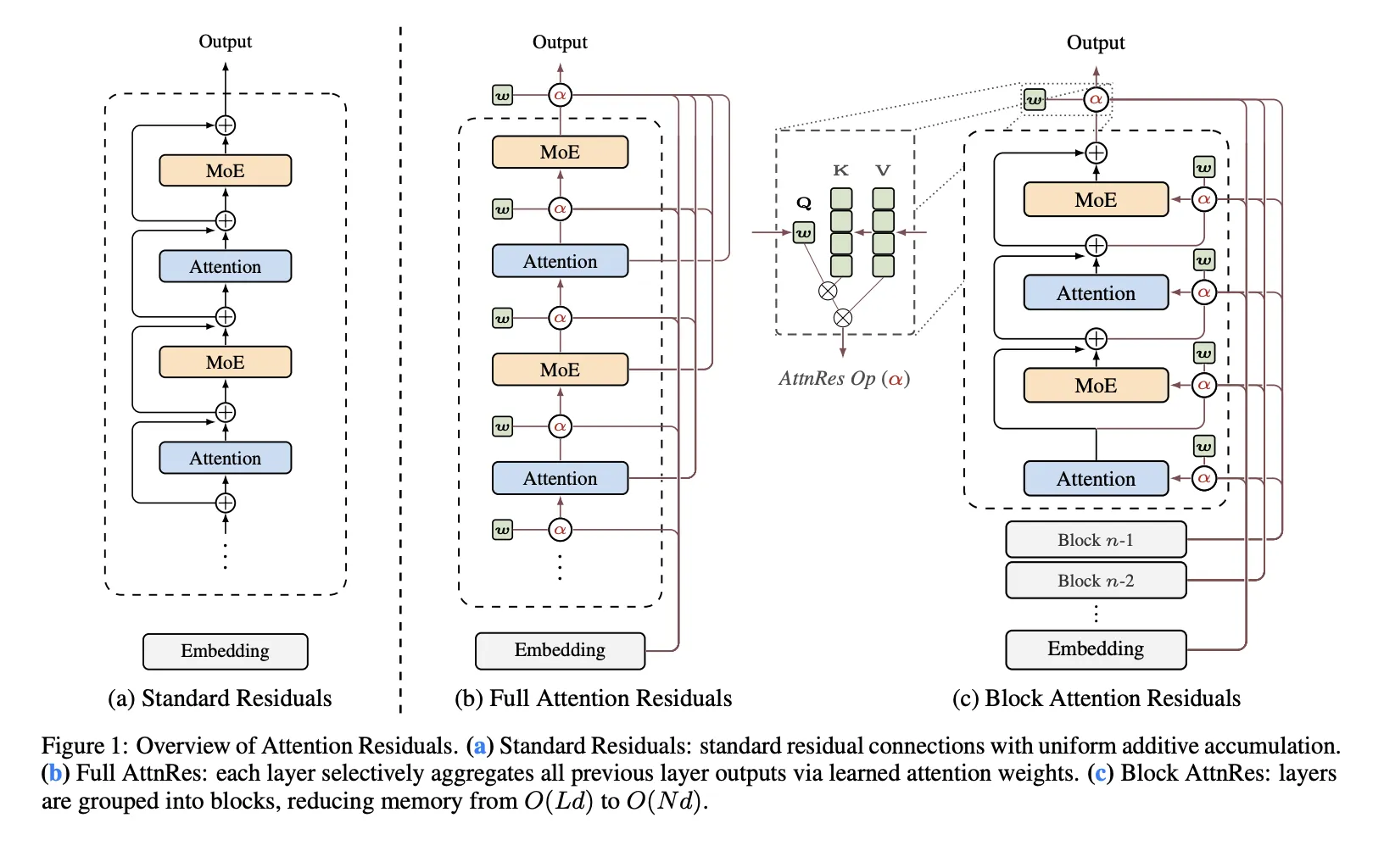

The core innovation of Attention Residuals is the application of the attention mechanism—traditionally used to model relationships between different tokens in a sequence—to the depth dimension of the network itself. In a standard Transformer, layer $l$ receives the sum of all previous outputs. In the AttnRes framework, layer $l$ treats the outputs of all preceding layers as a set of keys and values. It then uses a learned query to determine which specific prior representations are most relevant to the current stage of processing.

The implementation of "Full AttnRes" involves each layer computing attention weights over all previous depth positions. To maintain stability and prevent high-magnitude outputs from dominating the selection process, the researchers apply RMSNorm to the previous layer outputs before they enter the attention calculation. A critical design choice in AttnRes is the use of a learned, layer-specific pseudo-query vector. Unlike standard self-attention, where queries are conditioned on the input token, these pseudo-queries are fixed parameters for each layer. This ensures that the "depth-wise" attention focuses on structural patterns of information flow across the architecture rather than token-specific noise. To ensure training stability from the outset, these pseudo-queries are initialized to zero, so every attention score is zero and the softmax assigns uniform weights. AttnRes therefore behaves like a simple average over the preceding outputs at the start of training, avoiding the "exploding" or "vanishing" dynamics that can occur when attention weights start from arbitrary random values.
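The mechanism described above can be sketched for a single token in plain Python. This is a minimal illustration, not Moonshot AI's implementation: the function names are hypothetical, and it assumes the RMSNorm-ed outputs serve as keys while the raw outputs serve as values, a detail the article does not spell out.

```python
import math


def rms_norm(vec, eps=1e-6):
    """Scale a vector so that its root-mean-square is approximately 1."""
    mean_sq = sum(x * x for x in vec) / len(vec)
    scale = 1.0 / math.sqrt(mean_sq + eps)
    return [x * scale for x in vec]


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attn_res_input(prev_outputs, pseudo_query):
    """Mix earlier outputs (embedding plus layer outputs) into one layer input.

    prev_outputs: list of d-dimensional vectors, one per earlier depth position.
    pseudo_query: learned d-dimensional vector owned by the current layer.
    """
    # Assumption: keys are normalized so large-magnitude outputs cannot dominate.
    keys = [rms_norm(v) for v in prev_outputs]
    scores = [sum(q * k for q, k in zip(pseudo_query, key)) for key in keys]
    weights = softmax(scores)
    dim = len(pseudo_query)
    # Assumption: values are the raw (un-normalized) earlier outputs.
    mixed = [sum(w * v[j] for w, v in zip(weights, prev_outputs)) for j in range(dim)]
    return mixed, weights


history = [[1.0, 0.0, 0.0], [0.0, 2.0, 0.0], [0.0, 0.0, 30.0]]
mixed, weights = attn_res_input(history, pseudo_query=[0.0, 0.0, 0.0])
print(weights, mixed)
```

With the pseudo-query at zero, all scores are zero, so the weights are uniform and the result is the plain average of the earlier outputs. A non-zero query pointing along the first coordinate would instead put most of the weight on the first entry of `history`.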

Overcoming Computational and Memory Overheads

While Full AttnRes offers the most theoretical flexibility, it introduces a computational challenge. In a standard Transformer, memory requirements for residual connections are linear relative to the depth ($O(Ld)$). However, because Full AttnRes requires attending to every previous layer, the arithmetic complexity scales quadratically with depth ($O(L^2d)$). For massive models with many layers, this could lead to significant slowdowns in training and increased memory pressure, especially when using techniques like activation re-computation or pipeline parallelism.

To address these practical constraints, Moonshot AI developed "Block AttnRes." This variant partitions the network’s layers into $N$ distinct blocks. Within each block, the outputs are summarized into a single representation. The attention mechanism then only needs to operate over these $N$ block-level representations plus the initial token embedding. This optimization reduces the memory and communication overhead from $O(Ld)$ to $O(Nd)$, where $N$ is typically much smaller than $L$ (for example, 8 blocks in a 60-layer model). The researchers reported that this block-based approach results in less than a 4% overhead during training under pipeline parallelism and less than a 2% increase in inference latency, making it a viable "drop-in" replacement for production-grade models.
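The bookkeeping behind the block variant can be sketched as follows. The article only says that each block's outputs are "summarized"; the sketch assumes the summary is a plain sum, and the name `block_sources` is hypothetical. How a layer treats its own, partially completed block is not described in the article and is left out here.

```python
def block_sources(embedding, layer_outputs, num_blocks):
    """Collapse per-layer outputs into at most num_blocks block summaries.

    Returns the vectors a depth-wise attention step would attend over:
    the token embedding followed by one summary per block.
    """
    num_layers = len(layer_outputs)
    block_size = -(-num_layers // num_blocks)  # ceiling division
    sources = [embedding]
    for start in range(0, num_layers, block_size):
        block = layer_outputs[start:start + block_size]
        # Assumption: a block is summarized by summing its layers' outputs.
        sources.append([sum(column) for column in zip(*block)])
    return sources


dim = 4
embedding = [0.5] * dim
layer_outputs = [[1.0] * dim for _ in range(60)]  # 60 toy layer outputs
sources = block_sources(embedding, layer_outputs, num_blocks=8)
print(f"vectors kept: {len(layer_outputs) + 1} (full) vs {len(sources)} (block)")
```

For the 60-layer, 8-block example from the text, the number of vectors that must be stored and communicated drops from 61 (every layer output plus the embedding) to 9, which is the $O(Ld)$ to $O(Nd)$ reduction in miniature.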

Scaling Laws and Empirical Performance

The Moonshot AI team conducted extensive scaling law experiments to quantify the benefits of AttnRes across different model sizes and compute budgets. They compared three primary configurations: a standard PreNorm baseline, Full AttnRes, and the optimized Block AttnRes. The results indicated that AttnRes consistently achieves a lower validation loss than the baseline for the same amount of compute.

According to the fitted scaling laws provided in the research:

- Baseline Loss: $L = 1.891 \times C^{-0.057}$

- Block AttnRes Loss: $L = 1.870 \times C^{-0.058}$

- Full AttnRes Loss: $L = 1.865 \times C^{-0.057}$

The practical implication of these formulas is that Block AttnRes can match the performance of a standard Transformer baseline while using approximately 20% less compute; put differently, the baseline needs about 1.25x the compute to reach the same loss. In the highly competitive field of AI development, where training runs for frontier models can cost tens of millions of dollars, a 1.25x improvement in compute efficiency represents a massive economic and technical advantage.
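The compute multiplier can be checked directly from the fitted curves by asking how much compute the baseline needs to reach the loss that Block AttnRes achieves at a given budget. The sketch below uses only the coefficients quoted above. The article does not state the unit of $C$, so the budget of 5.6 is an illustrative assumption, and the function names are hypothetical.

```python
def baseline_loss(compute):
    """Fitted baseline curve: L = 1.891 * C^-0.057."""
    return 1.891 * compute ** -0.057


def block_attnres_loss(compute):
    """Fitted Block AttnRes curve: L = 1.870 * C^-0.058."""
    return 1.870 * compute ** -0.058


def baseline_compute_to_match(target_loss):
    """Invert the baseline fit: the compute at which it reaches target_loss."""
    return (target_loss / 1.891) ** (-1.0 / 0.057)


def compute_multiplier(compute):
    """How many times more compute the baseline needs to match Block AttnRes."""
    target = block_attnres_loss(compute)
    return baseline_compute_to_match(target) / compute


budget = 5.6  # assumption: illustrative budget in the (unstated) unit of C
multiplier = compute_multiplier(budget)
print(f"baseline needs {multiplier:.2f}x compute")
print(f"Block AttnRes saves {100 * (1 - 1 / multiplier):.0f}% compute")
```

Because the two fitted exponents differ slightly, the multiplier is not a constant: it changes with the budget at which the curves are compared, so the 1.25x figure should be read as holding around the compute range the researchers studied.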

Integration with Kimi Linear and Benchmark Results

The true test of any architectural innovation lies in its performance within a large-scale, real-world model. Moonshot AI integrated AttnRes into "Kimi Linear," their proprietary Mixture-of-Experts (MoE) architecture. Kimi Linear features 48 billion total parameters, with 3 billion parameters activated per token, and was pre-trained on a massive corpus of 1.4 trillion tokens.

The integration of AttnRes into Kimi Linear yielded substantial improvements across a wide array of standardized benchmarks, particularly in complex reasoning and mathematical tasks. The researchers observed that by keeping output magnitudes bounded and distributing gradients more uniformly across the depth of the 48B-parameter model, AttnRes allowed the MoE layers to specialize more effectively.

Specific benchmark gains included:

- MMLU (General Knowledge): Increased from 73.5 to 74.6

- GPQA-Diamond (Expert-level Science): Increased from 36.9 to 44.4

- Math (Mathematical Reasoning): Increased from 53.5 to 57.1

- HumanEval (Coding): Increased from 59.1 to 62.2

- C-Eval (Chinese Language Proficiency): Increased from 79.6 to 82.5

The 7.5-point jump in GPQA-Diamond is particularly noteworthy, as this benchmark is designed to be difficult even for human experts and often serves as a proxy for a model’s "deep reasoning" capabilities. These results suggest that AttnRes is not merely a marginal optimization but a fundamental improvement in how models process and retain complex information.

Broader Implications for the AI Industry

The introduction of Attention Residuals by Moonshot AI signals a potential shift in how researchers view the "backbone" of the Transformer. For years, the industry has focused heavily on optimizing the attention mechanism across the sequence length (e.g., FlashAttention, Linear Attention, or Sliding Window Attention). Moonshot’s work redirects attention—quite literally—to the depth dimension.

This research has several long-term implications for the field:

- Deeper Architectures: By solving the "PreNorm dilution" and output growth problems, AttnRes may pave the way for stable training of models with hundreds or even thousands of layers, potentially unlocking new levels of emergent intelligence.

- Information Retrieval within Models: The "selective access" provided by AttnRes suggests that models can become better at "remembering" early processing steps. This could be crucial for tasks requiring multi-step logic where the model needs to refer back to its initial "intuitions" about a prompt after several layers of abstract processing.

- Efficiency Gains: The 1.25x compute efficiency gain provided by Block AttnRes offers a new lever for companies looking to reduce the environmental and financial costs of training LLMs without sacrificing performance.

As of the release of their findings, Moonshot AI has made the repository and technical paper available to the public, encouraging further exploration of depth-wise attention. While standard residual connections are unlikely to disappear overnight, the clear empirical advantages of AttnRes suggest that the "fixed-weight" era of neural network design may be coming to a close, replaced by dynamic, learned pathways that allow information to flow more intelligently through the layers of a machine’s mind.