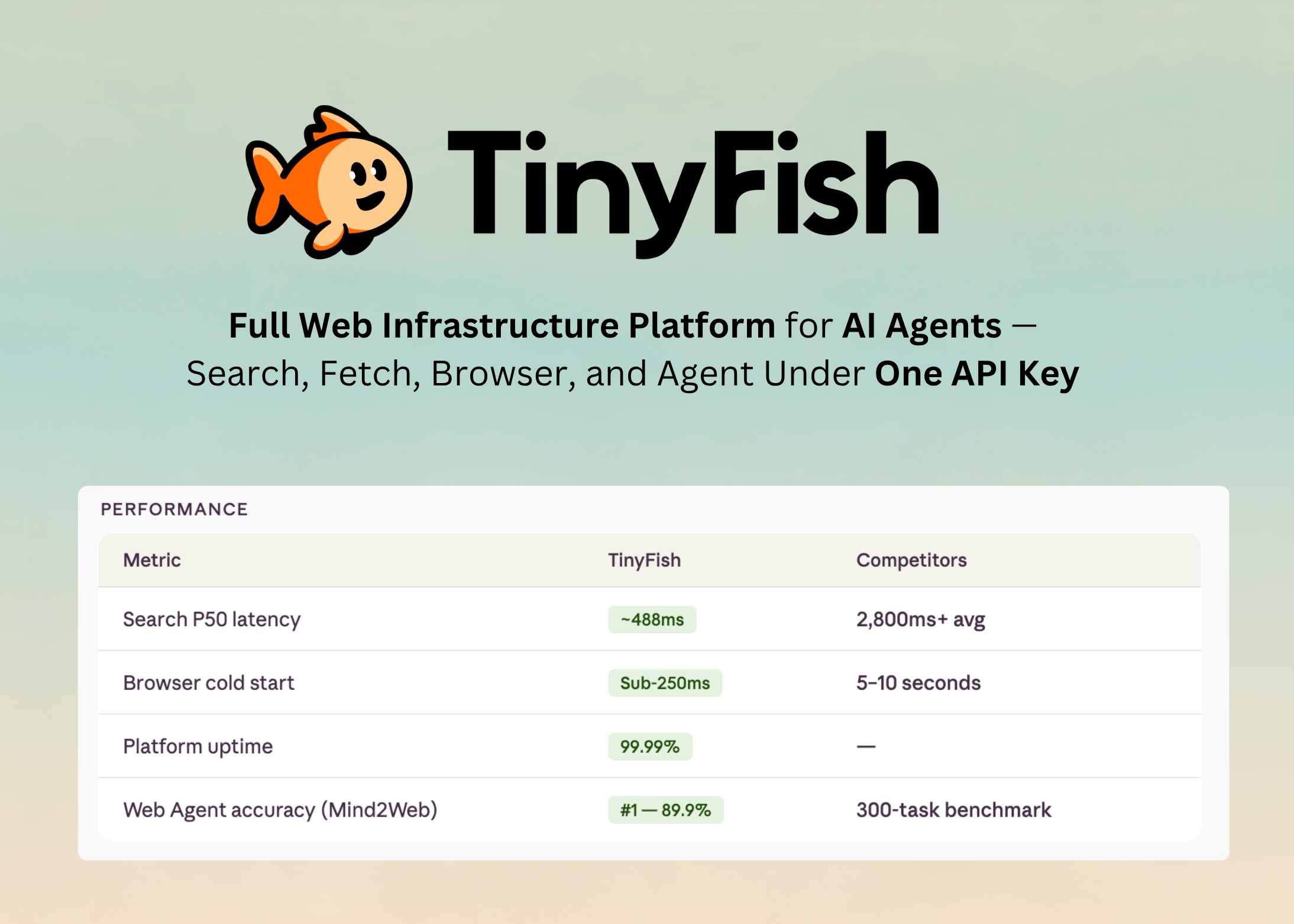

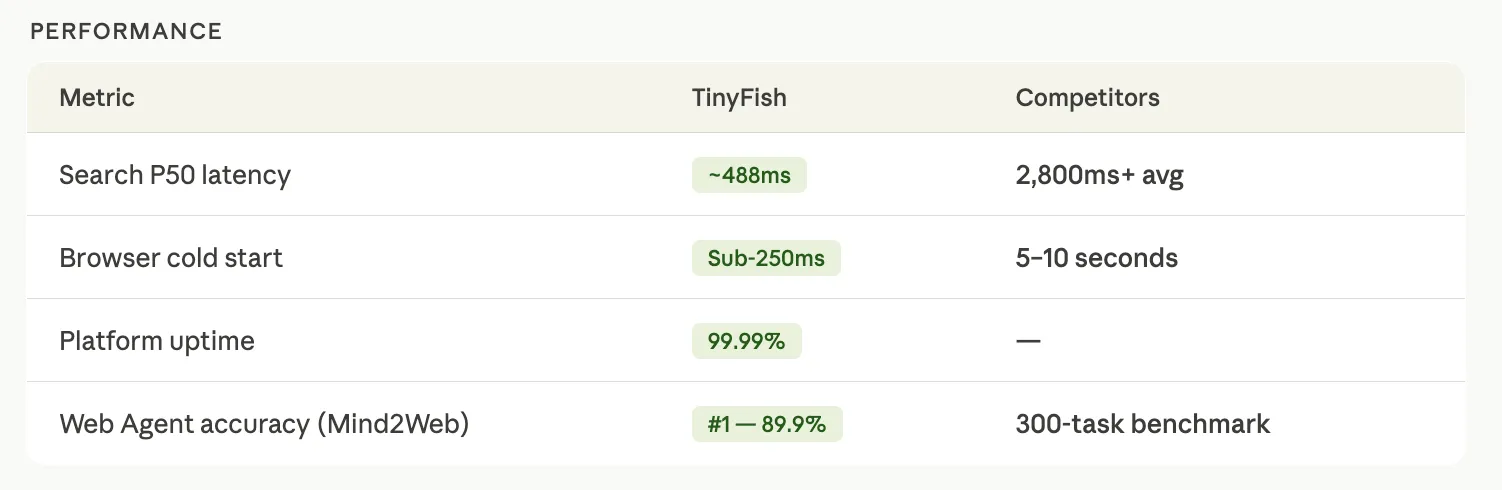

The Palo Alto-based startup TinyFish has officially unveiled a unified infrastructure platform designed to resolve the most persistent hurdles facing artificial intelligence agents operating on the live web. By consolidating web search, content retrieval, browser automation, and agentic reasoning under a single API key and credit system, the company aims to replace the currently fragmented landscape of AI tooling. This launch represents a strategic evolution for TinyFish, which previously gained recognition for its standalone web agent, as it pivots toward providing the underlying foundational layers necessary for developers to build reliable, autonomous workflows.

For the past two years, the industry has witnessed a surge in Large Language Models (LLMs) capable of sophisticated reasoning, yet these models frequently stumble when required to interact with the real-time internet. Tasks such as monitoring competitor pricing on JavaScript-heavy e-commerce sites, extracting structured financial data from dynamic dashboards, or navigating multi-step authentication flows have remained notoriously difficult to automate. Developers have traditionally been forced to stitch together disparate services—one for proxy-managed search, another for headless browser orchestration, and a third for HTML-to-markdown conversion—leading to fragile "glue code," inconsistent session handling, and prohibitive token costs.

The Architecture of the Unified Web Stack

The TinyFish infrastructure platform is composed of four primary products, each engineered to function independently or as part of an integrated pipeline. By owning the entire stack in-house, TinyFish claims to offer a level of reliability and visibility that is unattainable when relying on third-party API aggregators.

The first component, Web Search, provides an AI-optimized search engine that identifies relevant URLs without the overhead of traditional SEO-bloated results. Unlike competing products that may wrap third-party search APIs, TinyFish’s search layer is built in-house to feed directly into agentic reasoning engines. The second component, Web Fetch, uses a full browser environment to render pages, including those reliant on complex client-side scripts, and returns cleaned content in either Markdown or JSON format.

The third pillar, Web Browser, offers direct access to a managed browser environment, allowing agents to perform clicks, form fills, and navigation across complex domains. Finally, the Web Agent component serves as the high-level orchestration layer, capable of interpreting complex natural language instructions and executing them across the other three tools. This unified approach ensures "session consistency," where the same IP address, browser fingerprint, and cookies are maintained across a multi-step task, significantly reducing the likelihood of detection by anti-bot systems or session timeouts.
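The idea of session consistency can be illustrated with a small sketch. Everything here is hypothetical: `BrowserSession`, `log_in`, and `open_dashboard` are illustrative names rather than part of any TinyFish API, and the IP addresses come from reserved documentation ranges. The point is only that a cookie set in one step is visible to the next step when both share one session, and is lost when each step starts from a fresh identity.

```python
from dataclasses import dataclass, field


@dataclass
class BrowserSession:
    """Identity a target site would observe; all values are illustrative."""
    ip_address: str
    fingerprint: str
    cookies: dict[str, str] = field(default_factory=dict)


def log_in(session: BrowserSession) -> None:
    # Stand-in for a real login flow, which would receive this cookie
    # from the site after submitting credentials.
    session.cookies["auth"] = "token-123"


def open_dashboard(session: BrowserSession) -> str:
    # A site typically bounces requests that arrive without the auth cookie.
    if "auth" not in session.cookies:
        return "redirected to login"
    return f"dashboard loaded for {session.ip_address}"


# Shared session: the later step inherits the cookie set by the earlier one.
shared = BrowserSession(ip_address="203.0.113.7", fingerprint="fp-a1")
log_in(shared)
shared_outcome = open_dashboard(shared)

# Stitched-together tools: each step starts from its own fresh identity,
# so the login cookie never reaches the dashboard request.
log_in(BrowserSession(ip_address="203.0.113.7", fingerprint="fp-a1"))
stitched_outcome = open_dashboard(
    BrowserSession(ip_address="198.51.100.9", fingerprint="fp-b2")
)

print(shared_outcome)
print(stitched_outcome)
```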

Addressing the Crisis of Token Pollution

A central technical achievement of the new platform is its solution to what developers call "context window pollution." When a standard AI agent attempts to read a webpage, it often ingests thousands of tokens’ worth of irrelevant data, including navigation menus, advertisement tracking code, CSS headers, and boilerplate legal text. For an LLM, this noise is not just expensive; it is a performance killer. Excessive irrelevant data consumes the model’s limited context window and can distract the attention mechanism, leading to hallucinations or a failure to find the specific data point requested.
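To make the noise concrete, the following minimal sketch strips common non-content elements from a small HTML page using only Python's standard library. It is an illustration of the general idea, not TinyFish's cleaning logic: the `NOISE_TAGS` set, the sample page, and the whitespace-based `rough_token_count` are all assumptions chosen for brevity.

```python
from html.parser import HTMLParser

# Tags whose contents are treated as noise in this sketch (an assumption,
# not a rule set taken from any real product).
NOISE_TAGS = {"script", "style", "nav", "footer", "header", "aside"}


class MainTextExtractor(HTMLParser):
    """Collects text that is not nested inside any noise tag."""

    def __init__(self):
        super().__init__()
        self.noise_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in NOISE_TAGS:
            self.noise_depth += 1

    def handle_endtag(self, tag):
        if tag in NOISE_TAGS and self.noise_depth > 0:
            self.noise_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self.noise_depth == 0:
            self.chunks.append(text)


def extract_main_text(html: str) -> str:
    parser = MainTextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)


def rough_token_count(text: str) -> int:
    # Whitespace splitting is a crude stand-in for a real tokenizer.
    return len(text.split())


PAGE = """
<html><head><style>body { margin: 0 }</style></head>
<body>
<nav><a href="/">Home</a> <a href="/deals">Deals</a></nav>
<main><h1>Widget Pro</h1><p>Price: $49.99</p></main>
<footer>Terms of service apply.</footer>
<script>track("pageview");</script>
</body></html>
"""

cleaned = extract_main_text(PAGE)
print(cleaned)
print(rough_token_count(PAGE), "rough tokens before cleaning")
print(rough_token_count(cleaned), "rough tokens after cleaning")
```

Even on this toy page, most of the raw markup is navigation, styling, and tracking code rather than the product name and price an agent would actually need.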

TinyFish Fetch mitigates this by performing an intelligent "clean" of the page content during the rendering phase. According to internal benchmarks released by the company, operations conducted via the TinyFish Command Line Interface (CLI) use approximately 100 tokens per operation. In contrast, similar workflows routed through the Model Context Protocol (MCP), a popular open standard for connecting AI models to data sources, often consume upwards of 1,500 tokens for the same task. TinyFish reports this as an 87% reduction in token consumption per operation, although the rounded figures quoted here would imply a reduction closer to 93%. For enterprise-scale deployments where agents may perform thousands of fetches daily, this efficiency translates directly into lower inference costs and, by the company’s account, improved model accuracy.
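The per-operation and per-day savings follow from simple arithmetic. The sketch below uses the rounded token figures quoted above; the operations-per-day value is a hypothetical chosen only to illustrate scale, and rounded inputs will not necessarily reproduce the percentage from the company's own benchmark.

```python
def reduction_percent(baseline_tokens: float, optimized_tokens: float) -> float:
    """Percentage of tokens saved relative to the baseline."""
    if baseline_tokens <= 0:
        raise ValueError("baseline_tokens must be positive")
    return (baseline_tokens - optimized_tokens) / baseline_tokens * 100


def daily_tokens_saved(baseline_tokens: int, optimized_tokens: int,
                       operations_per_day: int) -> int:
    """Total tokens saved per day at a given operation volume."""
    return (baseline_tokens - optimized_tokens) * operations_per_day


CLI_TOKENS = 100      # approximate per-operation figure quoted for the CLI
MCP_TOKENS = 1500     # approximate per-operation figure quoted for MCP
OPS_PER_DAY = 5000    # hypothetical volume, not a figure from the article

pct = reduction_percent(MCP_TOKENS, CLI_TOKENS)
saved = daily_tokens_saved(MCP_TOKENS, CLI_TOKENS, OPS_PER_DAY)

print(f"{pct:.1f}% fewer tokens per operation")
print(f"{saved:,} tokens saved per day")
```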

The Shift from MCP to CLI-Driven Execution

While TinyFish continues to support the Model Context Protocol for discovery-based tasks, the company’s leadership has made a clear architectural argument in favor of a CLI-plus-Skills approach for heavy-duty execution. The primary distinction lies in how data is handled. In a standard MCP setup, the output of a web fetch is returned directly into the agent’s context window. This quickly fills the model’s memory during multi-step tasks.

The TinyFish CLI takes a different approach by writing output directly to the local filesystem. The agent is then instructed to read only the specific parts of the file it needs to proceed. This methodology mimics the traditional Unix philosophy of "pipes and redirects," allowing for a level of composability that sequential MCP round-trips cannot match. By keeping the context window "clean," TinyFish reports that its platform achieves a task completion rate that is twice as high as MCP-based execution on complex, multi-stage web workflows.
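The difference between returning output into the context window and writing it to disk can be sketched as follows. This is a generic illustration under stated assumptions: `save_output` and `read_matching_lines` are hypothetical helpers, not TinyFish CLI commands, and the "fetched page" is generated locally so that no network call is made.

```python
import tempfile
from pathlib import Path


def save_output(content: str, directory: Path, name: str) -> Path:
    """Write tool output to disk and hand back only the path."""
    path = directory / name
    path.write_text(content, encoding="utf-8")
    return path


def read_matching_lines(path: Path, keyword: str, limit: int = 5) -> list[str]:
    """Return up to `limit` lines containing `keyword`, in the spirit of grep."""
    matches = []
    with path.open(encoding="utf-8") as handle:
        for line in handle:
            if keyword.lower() in line.lower():
                matches.append(line.rstrip("\n"))
                if len(matches) >= limit:
                    break
    return matches


# Stand-in for a large fetched page: 200 filler lines plus one useful line.
page = "\n".join(
    [f"Filler paragraph {i} about unrelated topics." for i in range(200)]
    + ["Widget Pro price: $49.99"]
)

with tempfile.TemporaryDirectory() as tmp:
    path = save_output(page, Path(tmp), "page.md")
    # Inline style: the whole page would land in the model's context.
    inline_context = page
    # File style: the agent pulls in only the lines it asks for.
    file_context = "\n".join(read_matching_lines(path, "price"))

print(len(inline_context.split()), "words placed in context inline")
print(len(file_context.split()), "words placed in context via file")
```

The design choice mirrors a shell pipeline: the full output stays on disk where later steps can filter it again, and only the filtered slice costs context.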

Empowering Coding Agents with the Skill System

To facilitate adoption, TinyFish is shipping a developer-centric "Skill" system. This involves a standardized markdown instruction file (SKILL.md) that effectively "teaches" popular AI coding assistants—such as Claude Code, Cursor, Codex, and OpenClaw—how to utilize the TinyFish CLI.

Once a developer adds the TinyFish skill to their environment, the agent gains the autonomous ability to recognize when a task requires web access. For example, if a developer asks a coding agent to "update our internal database with the latest documentation from the React and Next.js websites," the agent will independently identify the need for the TinyFish CLI, execute the necessary fetch and search commands, and write the structured data to the project directory. This removes the need for the human developer to write manual integration code or manage SDK configurations for every new web-based task.

Market Context and Competitive Landscape

The launch of the TinyFish platform comes at a time when the "Agentic Web" is becoming a primary focus for Silicon Valley. The industry is moving away from chatbots that simply talk and toward agents that "do." However, the infrastructure for "doing" has lagged behind the models themselves.

TinyFish enters a competitive arena where players like Browserbase and Firecrawl have already established footprints. However, TinyFish distinguishes itself through its vertical integration. Browserbase, for instance, utilizes Exa to power its search capabilities, creating a dependency on an external provider. Firecrawl offers a suite of crawling and search tools but has faced criticism regarding the reliability of its agent endpoints on high-complexity tasks.

TinyFish’s argument is that by owning the search, fetch, and browser layers, they can provide an end-to-end signal. If a task fails, the system knows exactly where the breakdown occurred—whether the search failed to find the page, the fetch failed to render the JavaScript, or the agent failed to parse the content. This level of diagnostic transparency is critical for enterprise clients who require high reliability and clear audit trails for autonomous operations.
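Stage-level failure attribution of this kind can be sketched in a few lines. The example below is an assumption-laden illustration, not TinyFish's implementation: the `Stage` enum, `PipelineResult`, `run_pipeline`, and the stub `search`, `fetch`, and `parse` functions are all hypothetical, and the fetch stub fails on purpose to show how the failing stage is reported.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Stage(Enum):
    SEARCH = "search"
    FETCH = "fetch"
    PARSE = "parse"


@dataclass
class PipelineResult:
    ok: bool
    value: Optional[str] = None
    failed_stage: Optional[Stage] = None
    error: Optional[str] = None


def run_pipeline(
    query: str, steps: list[tuple[Stage, Callable[[str], str]]]
) -> PipelineResult:
    """Run steps in order, recording which stage raised if any does."""
    value = query
    for stage, step in steps:
        try:
            value = step(value)
        except Exception as exc:
            return PipelineResult(ok=False, failed_stage=stage, error=str(exc))
    return PipelineResult(ok=True, value=value)


# Stub steps standing in for real search, fetch, and parse layers.
def search(query: str) -> str:
    return "https://example.com/widget-pro"


def fetch(url: str) -> str:
    # Simulated failure so the example shows attribution to the fetch stage.
    raise TimeoutError("page did not finish rendering")


def parse(content: str) -> str:
    return content.upper()


result = run_pipeline(
    "widget pro price",
    [(Stage.SEARCH, search), (Stage.FETCH, fetch), (Stage.PARSE, parse)],
)

if not result.ok:
    print(f"failed at {result.failed_stage.value}: {result.error}")
```

When every layer sits behind one runner, the failure record names the stage directly; with separately sourced tools, the same information has to be reconstructed from each vendor's logs.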

Chronology of Development and Future Outlook

The trajectory of TinyFish reflects the rapid maturation of the AI sector.

- Early 2024: TinyFish launched as a standalone web agent, focusing on providing a user-friendly interface for simple web automation.

- Mid 2024: The company identified that the primary bottleneck for their users wasn’t the agent’s reasoning, but the quality of the data being retrieved from the web.

- Late 2024: Development began on the unified infrastructure stack, prioritizing token efficiency and session consistency.

- April 2026: TinyFish officially transitioned from a product company to an infrastructure company with the launch of the unified API.

Industry analysts suggest that this move toward "clean" web infrastructure is a prerequisite for the next generation of AI. As LLMs become more commoditized, the competitive advantage for AI companies will shift toward the quality of the data pipelines they can access. With its reported 87% reduction in token consumption and twofold increase in task completion relative to MCP-based execution, TinyFish is positioning itself as a vital utility for the burgeoning agent economy.

The broader implications of this technology extend into automated research, real-time market intelligence, and the automation of legacy enterprise workflows that lack modern APIs. As agents become more capable of navigating the web as humans do—but at machine speed and scale—the need for a robust, unified, and efficient infrastructure layer like TinyFish becomes undeniable.

TinyFish is currently offering 500 free steps to new users to encourage exploration of the platform. The company has also released an open-source "cookbook" and a repository of Skill files on GitHub, providing a blueprint for developers to integrate autonomous web capabilities into their own applications. With the CLI documentation now public, the company is inviting the developer community to stress-test the limits of what a truly unified web agent infrastructure can achieve.