The rapid evolution of the artificial intelligence ecosystem has shifted the industry’s focus from static large language models (LLMs) to autonomous AI agents capable of interacting with the physical and digital world. As organizations seek to move beyond simple chat interfaces, the technical challenge of how an agent should access external data and execute specialized tasks has become a central point of innovation. Two primary methodologies have emerged as frontrunners in this space: the Model Context Protocol (MCP) and AI Agent Skills. While both aim to bridge the gap between model reasoning and external execution, they represent fundamentally different architectural philosophies. One prioritizes standardized, server-side interoperability, while the other focuses on lightweight, locally defined behavioral guidance.

The Architecture of Integration: Understanding MCP

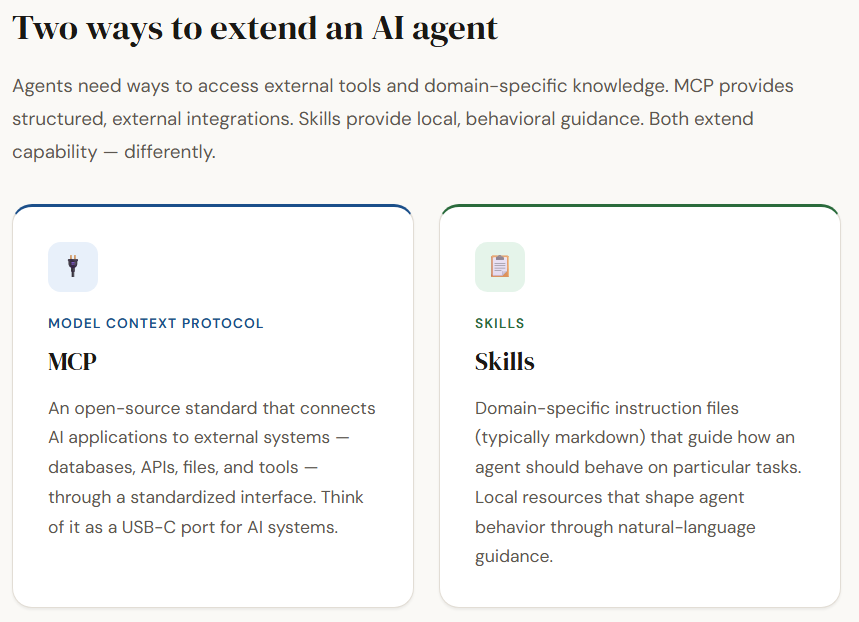

The Model Context Protocol (MCP) is an open-source standard designed to solve the fragmentation of AI tool integration. Introduced by Anthropic in late 2024, it functions as a universal interface between AI applications and external data sources. The industry often compares MCP to a "USB-C port" for AI; just as a standardized physical port allows diverse peripherals to connect to a computer without custom drivers for every device, MCP allows an LLM to connect to databases, local file systems, APIs, and specialized web tools through a single, unified protocol.

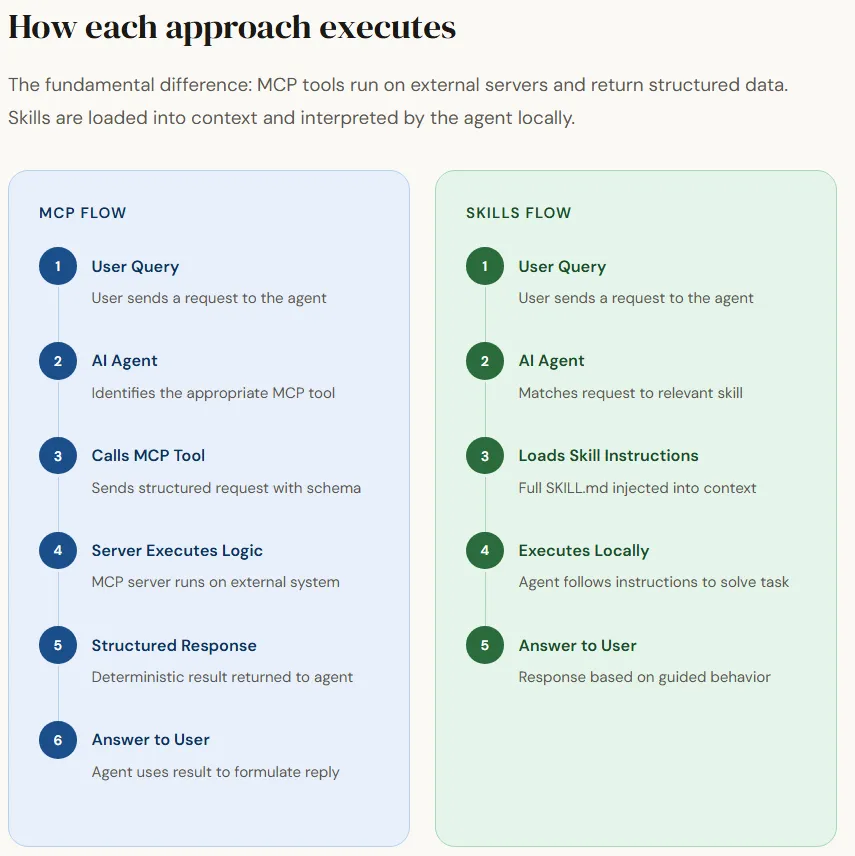

Technically, MCP uses a client-server architecture. An MCP server hosts specific tools or resources, such as a SQL database connector or a web scraper, and exposes them to an AI application, which connects to the server through an MCP client. When a user issues a query, the agent identifies the necessary tool, sends a request to the MCP server via JSON-RPC, and receives a structured response. The model’s choice of tool is still probabilistic, but the interface itself is explicit and predictable: every call has a declared name, typed arguments, and a structured result. For enterprise environments, this predictability is critical, as it allows for rigorous testing, security auditing, and the enforcement of authentication protocols at the server level.
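The request/response exchange can be sketched with nothing but the Python standard library. This is a minimal illustration, assuming the `tools/list` and `tools/call` method names and the text-content result shape from the MCP specification; it omits the `initialize` handshake and the transport (stdio or HTTP) that a real client handles, and the `query_database` tool and its response are hypothetical.

```python
import json
from itertools import count
from typing import Optional

# Monotonic request ids; JSON-RPC pairs each response with its request by id.
_next_id = count(1)


def make_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object."""
    request = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        request["params"] = params
    return request


def extract_text(response: dict, expected_id: int) -> str:
    """Match the response to its request and join its text content items."""
    if response.get("id") != expected_id:
        raise ValueError("response id does not match request id")
    if "error" in response:
        raise RuntimeError(response["error"].get("message", "unknown error"))
    items = response["result"]["content"]
    return "".join(item["text"] for item in items if item.get("type") == "text")


# 1. Ask the server which tools it exposes.
list_request = make_request("tools/list")

# 2. Call one of them. "query_database" and its "sql" argument are hypothetical.
call_request = make_request(
    "tools/call",
    {"name": "query_database", "arguments": {"sql": "SELECT COUNT(*) FROM orders"}},
)

# An illustrative, made-up response a server might send back for request 2.
sample_response = {
    "jsonrpc": "2.0",
    "id": 2,
    "result": {"content": [{"type": "text", "text": "1284"}], "isError": False},
}

print(json.dumps(call_request))
print(extract_text(sample_response, call_request["id"]))
```

Because every message is plain JSON with a declared method and typed parameters, the same exchange can be logged, replayed in tests, or audited without involving the model at all.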

The Behavioral Layer: Defining AI Agent Skills

In contrast to the infrastructure-heavy approach of MCP, "Skills" represent a behavioral layer designed for agility and ease of use. Skills are essentially domain-specific instruction sets that guide an agent on how to handle particular workflows. Rather than relying on an external server to execute logic, a skill is typically a local resource—often a directory containing Markdown files and occasionally auxiliary scripts—that provides the agent with the "know-how" to perform a task.

When an agent encounters a request that matches a skill’s description, it "loads" that skill into its active context window. This injection of instructions effectively re-programs the agent’s behavior for the duration of that task. For example, a "Python Coding Style" skill might contain specific linting rules, architectural preferences, and documentation standards. Because skills are stored locally and written in natural language, they are highly accessible to non-developers and can be modified instantly without restarting servers or updating API schemas.
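The loading step can be sketched as follows. This assumes a hypothetical skill format, a Markdown file whose short front-matter header carries a `name` and a `description`, and it uses a deliberately naive keyword-overlap matcher; in practice the agent’s model usually decides which skill descriptions fit a request.

```python
import re
from dataclasses import dataclass

# Hypothetical skill file: Markdown body preceded by a small front-matter header.
PYTHON_STYLE_SKILL = """---
name: python-coding-style
description: Python coding style, linting rules and docstring conventions
---
# Python Coding Style
- Follow PEP 8 naming and keep lines under 88 characters.
- Every public function needs a docstring.
- Prefer composition over deep inheritance.
"""


@dataclass
class Skill:
    name: str
    description: str
    body: str


def parse_skill(text: str) -> Skill:
    """Split simple 'key: value' front matter from the Markdown body."""
    match = re.match(r"---\n(.*?)\n---\n(.*)", text, re.DOTALL)
    if match is None:
        raise ValueError("skill file has no front matter")
    header, body = match.groups()
    fields = {}
    for line in header.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return Skill(fields["name"], fields["description"], body.strip())


def _keywords(text: str) -> set[str]:
    # Words of four or more letters, so filler like "and" or "my" is ignored.
    return set(re.findall(r"[a-z]{4,}", text.lower()))


def select_skills(request: str, skills: list[Skill]) -> list[Skill]:
    """Naive matcher: a skill applies if the request shares a keyword with its description."""
    words = _keywords(request)
    return [s for s in skills if words & _keywords(s.description)]


def build_context(system_prompt: str, request: str, skills: list[Skill]) -> str:
    """Inject the bodies of matching skills between the system prompt and the request."""
    loaded = select_skills(request, skills)
    return "\n\n".join([system_prompt, *(s.body for s in loaded), request])


skill = parse_skill(PYTHON_STYLE_SKILL)
print(build_context("You are a coding agent.", "Review my Python module", [skill]))
```

Editing the skill means editing the string (or file) above; nothing else has to be redeployed, which is the agility the paragraph describes.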

Chronology of Development in Agentic Tooling

The transition from simple prompting to the current dichotomy of MCP and Skills follows a clear chronological path in AI development.

- Late 2022 – Early 2023: Zero-Shot Prompting. Early users relied entirely on the model’s pre-trained knowledge. If a model did not know a fact or lacked a tool, the user had to manually copy-paste data into the prompt.

- Mid 2023: Function Calling and Plugins. Providers such as OpenAI introduced "Function Calling," allowing developers to describe functions to the model in JSON Schema format. This was an important step toward structured tool use, but each vendor’s implementation remained proprietary and fragmented.

- Late 2023 – Early 2024: Custom GPTs and Local Instruction Sets. OpenAI’s Custom GPTs, announced in November 2023, and the wider use of "System Instructions" popularized the idea of packaged behavioral guidance, paving the way for the formalized "Skills" folders seen in modern desktop agents.

- Late 2024: The Launch of MCP. Anthropic open-sourced the Model Context Protocol, aiming to move the industry away from proprietary "walled garden" integrations and toward a standardized, interoperable ecosystem.

- 2025 – Present: Hybrid Integration. The current era sees a convergence where sophisticated agents use MCP for heavy-duty data retrieval and Skills for nuanced task execution.

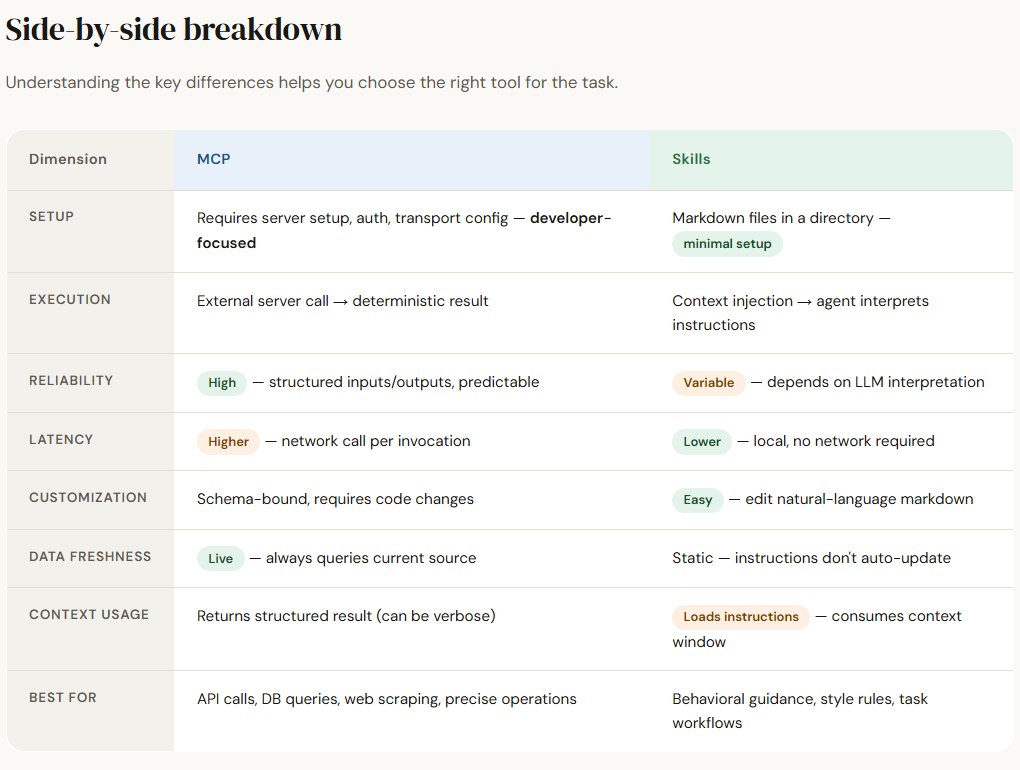

Technical Comparison and Supporting Data

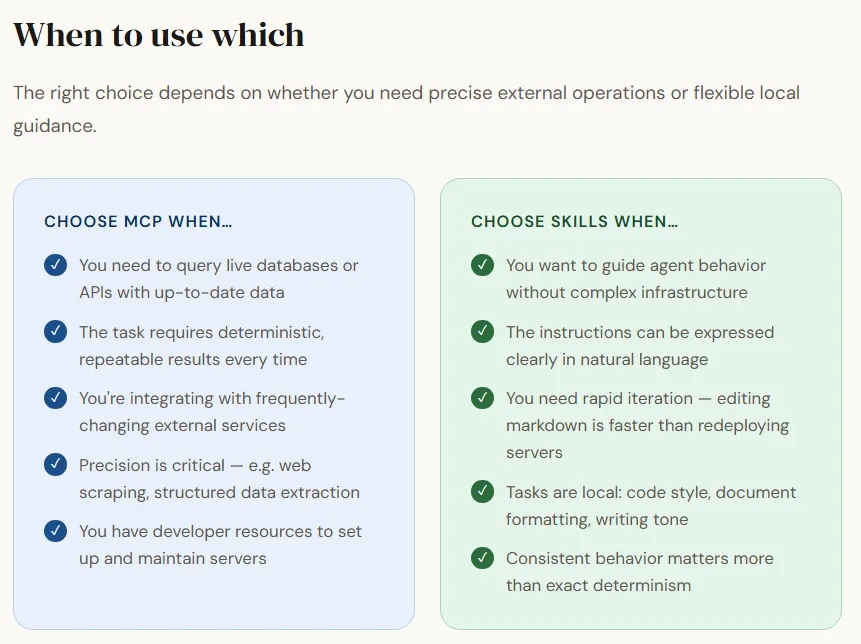

The choice between MCP and Skills involves significant trade-offs in latency, reasoning burden, and scalability.

Scalability and Tool Discovery

In an MCP environment, scalability is managed through server-side discovery: the server advertises its tools, and an agent only "knows" about them through the metadata (names, descriptions, and input schemas) it receives. An agent can theoretically access hundreds of tools this way, but recent agent benchmarks suggest that as the number of available tools grows, so does the "tool selection error rate." Structured schemas do not solve the selection problem itself; what they do is reduce the likelihood that the model supplies malformed arguments once it has picked a tool.
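To make the role of input schemas concrete, here is a minimal sketch of a tool descriptor in the shape MCP servers advertise (`name`, `description`, and a JSON Schema `inputSchema`), together with a hand-rolled check for malformed arguments. The `search_tickets` tool is hypothetical, and the checker covers only `required`, primitive `type`, `enum`, and `additionalProperties: false`; a real client or server would rely on a full JSON Schema validator.

```python
from typing import Any

# Hypothetical tool descriptor in the shape returned by an MCP tools/list call.
SEARCH_TICKETS = {
    "name": "search_tickets",
    "description": "Search support tickets by status and keyword.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "status": {"type": "string", "enum": ["open", "closed"]},
            "keyword": {"type": "string"},
            "limit": {"type": "integer"},
        },
        "required": ["status"],
        "additionalProperties": False,
    },
}

# JSON Schema primitive type names mapped to Python types.
_PY_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


def validate_arguments(tool: dict, args: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the call looks well formed."""
    schema = tool["inputSchema"]
    props = schema.get("properties", {})
    problems = [
        f"missing required argument '{name}'"
        for name in schema.get("required", [])
        if name not in args
    ]
    for name, value in args.items():
        if name not in props:
            if schema.get("additionalProperties", True) is False:
                problems.append(f"unexpected argument '{name}'")
            continue
        spec = props[name]
        type_name = spec.get("type")
        expected = _PY_TYPES.get(type_name)
        # bool is a subclass of int in Python, so exclude it from numeric types.
        bool_as_number = type_name in ("integer", "number") and isinstance(value, bool)
        if expected is not None and (not isinstance(value, expected) or bool_as_number):
            problems.append(f"'{name}' should be of type {type_name}")
        elif "enum" in spec and value not in spec["enum"]:
            problems.append(f"'{name}' must be one of {spec['enum']}")
    return problems


print(validate_arguments(SEARCH_TICKETS, {"status": "open", "limit": 10}))
print(validate_arguments(SEARCH_TICKETS, {"status": "pending", "limit": "ten"}))
```

Note what the check cannot catch: if the model picks `search_tickets` when a different tool was the right one, every argument can still be valid. That is why schemas help with malformed calls but not with tool selection.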

Skills, however, face a different scalability challenge: context bloat. Because skills are injected directly into the agent’s context window, having too many active skills can consume thousands of tokens, leaving less room for the conversation itself and for the model’s reasoning. Context-utilization research suggests that models perform best when instructions are concise relative to the task at hand, so Skills are best suited to a small number of high-impact behavioral guidelines rather than exhaustive toolkits.
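A back-of-the-envelope sketch shows how quickly injected skills eat into the context window. The four-characters-per-token ratio is a rough rule of thumb for English text, not a real tokenizer, and the window size, output reserve, and skill sizes in the example are hypothetical.

```python
# Rough rule of thumb for English text; a real agent would use its model's tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate token count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def remaining_budget(context_window: int, skill_texts: list[str], reserved_for_output: int) -> int:
    """Tokens left for the conversation and reasoning after skills are injected."""
    used = sum(estimate_tokens(text) for text in skill_texts)
    return context_window - reserved_for_output - used


def fit_skills(skills_by_priority: list[str], max_skill_tokens: int) -> list[str]:
    """Keep the highest-priority skills until the skill budget would be exceeded."""
    kept, total = [], 0
    for text in skills_by_priority:
        cost = estimate_tokens(text)
        if total + cost > max_skill_tokens:
            break
        kept.append(text)
        total += cost
    return kept


# Hypothetical setup: 20 skills of ~12,000 characters (~3,000 tokens) each.
skills = ["x" * 12_000] * 20
print(remaining_budget(200_000, skills, reserved_for_output=8_000))
print(len(fit_skills(skills, max_skill_tokens=10_000)))
```

In this made-up setup, twenty active skills claim about 60,000 tokens before the user has typed anything, while a 10,000-token skill budget admits only the first three in priority order.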

Execution and Latency

MCP tool calls always carry some communication overhead. Every call is a round trip between the client and the server: inter-process for a server running locally (for example over stdio), and over the network for a remote one. In multi-step workflows, such as an agent researching a topic, writing code, and then testing it, these sequential calls add up, and for remote servers they can introduce roughly 500 ms to several seconds of latency per step.
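The latency arithmetic for a sequential workflow is simple: because each step waits for the one before it, per-step costs add up. The sketch below models the research, write, and test example with hypothetical timings; it measures nothing, and real numbers depend on the model, the server, and the transport.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    inference_ms: float  # time the model spends generating for this step
    tool_rtt_ms: float   # round trip to the MCP server; 0 when no tool is called


def sequential_latency_ms(steps: list[Step]) -> float:
    """Steps run one after another, so their latencies simply add up."""
    return sum(step.inference_ms + step.tool_rtt_ms for step in steps)


def tool_overhead_ms(steps: list[Step]) -> float:
    """Portion of the total spent waiting on tool round trips."""
    return sum(step.tool_rtt_ms for step in steps)


# Hypothetical timings for the research -> write -> test workflow.
workflow = [
    Step("research topic", inference_ms=1_200, tool_rtt_ms=800),
    Step("write code", inference_ms=3_000, tool_rtt_ms=0),
    Step("run tests", inference_ms=900, tool_rtt_ms=1_500),
]

total = sequential_latency_ms(workflow)
overhead = tool_overhead_ms(workflow)
print(f"total {total} ms, of which {overhead} ms is tool round trips")
```

Only the steps that actually call a tool pay the round-trip cost, which is why the overhead grows with the number of sequential tool calls rather than with the length of the task.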

Skills, being local, add almost no latency when they are activated. The cost shifts instead to processing the instructions: every token of an injected skill must be read by the model before it responds. Once the instructions are in context, the model follows them at its standard inference speed, which can make Skills considerably faster for tasks that require complex logic but do not need real-time data from a remote database.

Industry Reactions and Official Perspectives

Developer interest in the two technologies is divided, but the technologies themselves are complementary. Major AI labs, particularly Anthropic, have championed MCP as the future of the "Agentic Web." By open-sourcing the protocol, they have encouraged a community-driven repository of "MCP Servers," which now includes integrations for Google Drive, Slack, GitHub, and even local SQLite databases.

Enterprise security officers have expressed a preference for the MCP model because it supports human-in-the-loop (HITL) configurations. Because execution happens behind a defined tool interface, organizations can place gatekeepers in the server or the client application that require manual approval before a "write" operation (like deleting a file or sending an email) is finalized.
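A human-in-the-loop gate can be as simple as a wrapper around tool execution. In this minimal sketch, the tool names, the `execute` callback (standing in for the actual MCP call), and the `approve` callback (standing in for a review interface) are all hypothetical; the point is only that read operations pass straight through while write operations wait for an explicit yes.

```python
from typing import Any, Callable

# Hypothetical set of tools that modify state and therefore need sign-off.
WRITE_TOOLS = {"delete_file", "send_email"}

ToolFn = Callable[[str, dict[str, Any]], Any]
ApproveFn = Callable[[str, dict[str, Any]], bool]


class ApprovalDenied(Exception):
    """Raised when a human reviewer rejects a write operation."""


def gated_call(name: str, arguments: dict[str, Any], execute: ToolFn, approve: ApproveFn) -> Any:
    """Run read-only tools directly; hold write tools until a human approves them."""
    if name in WRITE_TOOLS and not approve(name, arguments):
        raise ApprovalDenied(f"'{name}' was not approved")
    return execute(name, arguments)


def fake_execute(name: str, arguments: dict[str, Any]) -> str:
    # Stand-in for forwarding the call to an MCP server.
    return f"executed {name}"


def deny_all(name: str, arguments: dict[str, Any]) -> bool:
    # Stand-in for a reviewer who rejects every request.
    return False


print(gated_call("read_file", {"path": "notes.txt"}, fake_execute, deny_all))
try:
    gated_call("send_email", {"to": "team@example.com"}, fake_execute, deny_all)
except ApprovalDenied as err:
    print(f"blocked: {err}")
```

Keeping the gate outside the model means a persuasive prompt cannot talk its way past it: the approval decision is made by ordinary code and a human, not by the agent.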

Conversely, the open-source community and individual "power users" have gravitated toward Skills. The ability to version-control a folder of Markdown files and share them via GitHub allows for a modular, "Lego-like" approach to agent personality and capability. Developers of local-first AI applications have noted that Skills provide a more "human-centric" way to customize AI, as the instructions are readable and editable by anyone, not just those familiar with JSON-RPC or server administration.

Analysis of Implications: The Future of Agentic Workflows

The coexistence of MCP and Skills suggests a future where AI agents use a "tiered" capability system. In this likely evolution, MCP will serve as the "Long-Term Memory and External Hands" of the agent, providing a secure and standardized way to reach into company databases and external APIs. Skills will serve as the "Prefrontal Cortex," providing the tactical guidance and stylistic preferences that define how the agent uses those hands.
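The tiered split can be pictured as an agent holding two separate registries. This is a hypothetical illustration of the idea rather than the design of any particular framework: tools (stubbed here as plain functions standing in for MCP calls) supply the "hands," while skills supply text that shapes how the agent is told to use them.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class TieredAgent:
    # Tier 1, the "hands": tools that would be reached through MCP (stubbed as callables).
    tools: dict[str, Callable[..., str]] = field(default_factory=dict)
    # Tier 2, the guidance: skill instructions injected into the prompt.
    skills: dict[str, str] = field(default_factory=dict)

    def build_prompt(self, task: str, skill_names: list[str]) -> str:
        """Combine the selected skills' guidance with the list of available tools."""
        guidance = "\n".join(self.skills[name] for name in skill_names)
        tools = ", ".join(sorted(self.tools))
        return f"{guidance}\nAvailable tools: {tools}\nTask: {task}"

    def call_tool(self, name: str, **kwargs: str) -> str:
        """Dispatch to a tool; in a real agent this would be an MCP tools/call request."""
        return self.tools[name](**kwargs)


# Hypothetical tool and skill.
agent = TieredAgent(
    tools={"lookup_customer": lambda customer_id: f"customer {customer_id}: ACME Corp"},
    skills={"formal-tone": "Write in a formal tone and cite the record you used."},
)
print(agent.build_prompt("Draft a renewal email", ["formal-tone"]))
print(agent.call_tool("lookup_customer", customer_id="42"))
```

Changing the skill text alters how the agent writes without touching the tool, and swapping the tool's backend leaves the guidance untouched, which is the separation of concerns the tiered model implies.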

The broader impact on the software industry is substantial. We are moving toward a "Protocol-First" development cycle. Instead of building a standalone app with a GUI, developers may find more value in building an MCP server that makes their service accessible to any MCP-compatible AI application. This shifts the competitive advantage from who has the best user interface to who provides the most "agent-friendly" data and tool access.

Furthermore, the rise of Skills may lead to a new category of "Prompt Engineers" who act more like "Behavioral Architects." These professionals will focus on writing robust, hallucination-resistant instruction sets that can be dropped into any agent’s skill folder to grant it instant expertise in legal drafting, medical coding, or specialized engineering tasks.

Conclusion and Strategic Outlook

As of mid-2025, the distinction between Model Context Protocol and AI Agent Skills remains a vital consideration for any organization deploying AI. MCP offers the robustness, security, and structured data access required for enterprise-grade applications, albeit at the cost of higher technical complexity and network latency. Skills offer the flexibility, speed, and ease of customization required for personal productivity and rapid prototyping, but they carry a higher risk of model misinterpretation and context exhaustion.

The successful AI deployments of the future will likely not choose one over the other. Instead, they will implement a hybrid architecture: using MCP for "heavy lifting" and data integrity, while utilizing Skills to provide the nuanced, localized guidance that allows an agent to truly understand the context of its mission. As these technologies mature, the goal remains the same—to transform LLMs from passive responders into proactive, capable partners in the digital workspace.